📌 MAROKO133 Hot AI: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
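To make the scaling argument concrete, here is a minimal sketch that counts the work involved as the context grows. The value of `TOKENS_PER_FRAME` is a hypothetical number chosen only for illustration, and both helper functions are illustrative names, not anything from the paper. A causal mask roughly halves the attention count but leaves the growth quadratic.

```python
def attention_pairs(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention: sequence length squared."""
    seq_len = num_frames * tokens_per_frame
    return seq_len * seq_len


def scan_steps(num_frames: int, tokens_per_frame: int) -> int:
    """State updates for a recurrent scan: one per token, so linear in length."""
    return num_frames * tokens_per_frame


TOKENS_PER_FRAME = 256  # hypothetical value, for illustration only

for frames in (16, 32, 64):
    pairs = attention_pairs(frames, TOKENS_PER_FRAME)
    steps = scan_steps(frames, TOKENS_PER_FRAME)
    print(f"{frames:>3} frames: {pairs:,} attention pairs, {steps:,} scan steps")
```

Doubling the number of frames quadruples the attention count but only doubles the scan count, which is the gap the SSM-based design aims to exploit.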

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

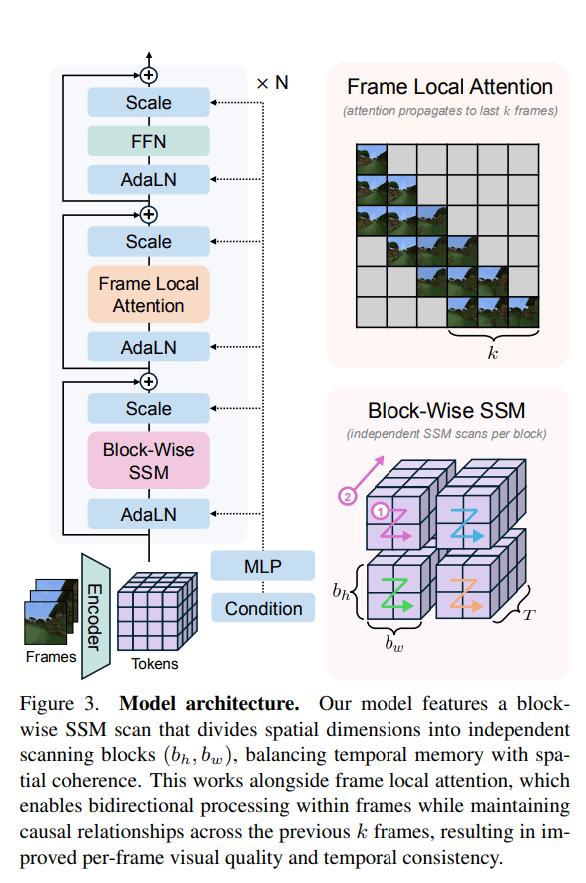

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
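The trade-off behind block-wise scanning can be sketched with a toy example. This is a rough illustration under several assumptions that are not stated in the text above: blocks are taken to be spatial tiles that are each scanned over time, a fixed scalar `decay` stands in for a learned SSM, and the names `linear_scan` and `blockwise_scan` are hypothetical. The local attention component is not modeled here.

```python
import numpy as np


def linear_scan(x: np.ndarray, decay: float) -> np.ndarray:
    """Toy recurrence h_t = decay * h_{t-1} + x_t along axis 0 of x, shape (L, D)."""
    h = np.zeros(x.shape[1])
    out = np.empty_like(x)
    for t in range(x.shape[0]):
        h = decay * h + x[t]
        out[t] = h
    return out


def blockwise_scan(video: np.ndarray, block: int, decay: float = 0.9) -> np.ndarray:
    """Scan each (block x block) spatial tile of a (T, H, W, D) token grid separately.

    Within a tile, tokens are ordered frame by frame, so the same location in the
    previous frame is block*block steps back instead of H*W steps back.
    """
    T, H, W, D = video.shape
    assert H % block == 0 and W % block == 0, "grid must divide evenly into tiles"
    out = np.empty_like(video)
    for i in range(0, H, block):
        for j in range(0, W, block):
            tile = video[:, i:i + block, j:j + block, :]   # (T, block, block, D)
            seq = tile.reshape(T * block * block, D)       # time-major token order
            scanned = linear_scan(seq, decay)
            out[:, i:i + block, j:j + block, :] = scanned.reshape(T, block, block, D)
    return out


# A single impulse at frame 0, position (0, 0), on a 4-frame, 4x4 grid.
video = np.zeros((4, 4, 4, 1))
video[0, 0, 0, 0] = 1.0

small_tiles = blockwise_scan(video, block=2)  # four 2x2 tiles
one_tile = blockwise_scan(video, block=4)     # whole frame as a single tile

print(small_tiles[3, 0, 0, 0], one_tile[3, 0, 0, 0])  # signal left at frame 3
print(small_tiles[3, 0, 3, 0], one_tile[3, 0, 3, 0])  # signal seen in another tile
```

In this toy setup, smaller tiles shorten the path between the same location in consecutive frames, so more of the early signal survives to later frames. The cost is that tokens in different tiles never exchange information through the scan, which is the spatial gap that local attention is meant to fill.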

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During long-context training, the model learns to generate frames conditioned on a prefix of the input, which forces it to maintain consistency over longer durations. When no prefix is sampled and all tokens stay noised, training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
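Both strategies can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, noise levels are assumed to lie in [0, 1] with 0 meaning a clean frame, and the mask is built at the frame level rather than the token level. It does not call FlexAttention; it only shows the attention pattern that the chunk-of-5, window-of-10 example describes.

```python
import numpy as np


def sample_noise_levels(num_frames: int, prefix_len: int,
                        rng: np.random.Generator) -> np.ndarray:
    """Per-frame noise levels: 0 for the clean prefix, independent draws after it.

    With prefix_len == 0 every frame is noised, the diffusion-forcing case.
    """
    levels = rng.uniform(0.0, 1.0, size=num_frames)
    levels[:prefix_len] = 0.0
    return levels


def frame_local_mask(num_frames: int, chunk: int = 5) -> np.ndarray:
    """Boolean (query_frame, key_frame) mask; True means attention is allowed.

    A frame attends to every frame in its own chunk (bidirectional) and to
    every frame in the previous chunk, giving a window of 2 * chunk frames.
    """
    chunk_id = np.arange(num_frames) // chunk
    q = chunk_id[:, None]
    k = chunk_id[None, :]
    return (k == q) | (k == q - 1)


rng = np.random.default_rng(0)
print(sample_noise_levels(8, prefix_len=3, rng=rng))  # first three entries are 0
print(frame_local_mask(15).astype(int))               # 15 frames, chunks of 5
```

With chunks of 5, each query frame sees at most 10 key frames regardless of the total video length, so the attention cost per frame stays constant as the context grows.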

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Exclusive AI: OpenAI’s New Data Centers Will Draw More Power Than the Entirety of New York City, Sam Altman Says

Earlier this week, OpenAI announced a “strategic partnership” with AI chipmaker Nvidia in which the duo of tech giants will build and deploy upwards of 10 gigawatts of AI data centers.

Nvidia will invest up to $100 billion in the project, an enormous undertaking that could end up requiring an astronomical amount of electricity to run.

As Fortune reports, the planned data centers would consume as much power as all of New York City, and the Sam Altman-led company isn’t stopping there. Existing projects tied to President Donald Trump’s Stargate initiative could add another seven gigawatts, or roughly as much as San Diego used during last year’s devastating heat wave.

“Ten gigawatts is more than the peak power demand in Switzerland or Portugal,” Cornell University energy-systems engineering professor Fengqi You told Fortune. “Seventeen gigawatts is like powering both countries together.”
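For a sense of scale, the gigawatt figures can be converted into annual energy. This is a back-of-the-envelope sketch that assumes the data centers draw their full rated power around the clock, which the reporting does not claim; the `utilization` parameter and the function name are hypothetical additions for illustration.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(power_gw: float, utilization: float = 1.0) -> float:
    """Terawatt-hours per year from a constant draw of power_gw gigawatts."""
    return power_gw * utilization * HOURS_PER_YEAR / 1000.0  # GWh to TWh


nvidia_gw = 10.0    # capacity named in the Nvidia partnership
stargate_gw = 7.0   # additional Stargate projects
total_gw = nvidia_gw + stargate_gw

print(total_gw)                                   # 17.0
print(annual_energy_twh(nvidia_gw))               # 87.6
print(annual_energy_twh(total_gw))                # 148.92
print(annual_energy_twh(total_gw, utilization=0.5))
```

At full load, 17 gigawatts would come to roughly 149 terawatt-hours a year. Actual consumption would depend on how heavily the facilities are used.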

OpenAI and tech giant Oracle already have an enormous Stargate data center in Abilene, Texas, which draws enough electricity to power half a million homes. Five new projects are expected to total seven gigawatts, as part of Trump’s half-a-trillion-dollar AI data center initiative.

It’s an almost unfathomable escalation in the power usage of AI — and computing as a whole.

“It’s scary because… now [computing] could be 10 percent or 12 percent of the world’s power by 2030,” University of Chicago professor of computer science Andrew Chien told Fortune. “We’re coming to some seminal moments for how we think about AI and its impact on society.”

To the AI industry, it’s all part of the plan.

“Everything starts with compute,” Altman said in a statement accompanying the Nvidia partnership announcement. “Compute infrastructure will be the basis for the economy of the future, and we will utilize what we’re building with NVIDIA to both create new AI breakthroughs and empower people and businesses with them at scale.”

The industry’s doubling down on building out AI infrastructure has been accompanied by major environmental concerns, with tech giants admitting that they’re falling far short of their own carbon emission goals.

While the tech’s exact carbon footprint remains elusive, AI data centers are putting a major strain on local water supplies to keep hardware cool.

Additional pressure on power grids will also lead to a rise in carbon dioxide emissions unless the AI industry finds a meaningful way to pivot to renewable energy sources. Fengqi You told Fortune that it may eventually become inevitable for companies to switch to nuclear plants, which could “take years to permit and build.”

“In the short term, they’ll have to rely on renewables, natural gas, and maybe retrofitting older plants,” he added.

As companies continue to pour tens of billions of dollars into infrastructure buildouts, the tech’s carbon footprint is expected to grow.

It’s an unfortunate reality that the industry will have to reckon with one way or the other, especially considering the ongoing human-activity-fueled climate crisis.

“They told us these data centers were going to be clean and green,” Chien told Fortune. “But in the face of AI growth, I don’t think they can be. Now is the time to hold their feet to the fire.”


The post OpenAI’s New Data Centers Will Draw More Power Than the Entirety of New York City, Sam Altman Says appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you can stay up to date without missing anything.

✅ Next update in 30 minutes. A random topic awaits!