📌 MAROKO133 Hot ai: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem is that the computational cost of attention grows quadratically with sequence length. As the video context grows, doubling the number of tokens roughly quadruples the work done by the attention layers, which makes long-term memory impractical for real-world applications. In practice, after a certain number of frames the model effectively “forgets” earlier events, which hurts its performance on tasks that demand long-range coherence or reasoning over extended periods.
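To make the scaling concrete, here is a minimal sketch that counts the work done by dense attention versus a single recurrent scan of the kind an SSM performs. The function names and the `TOKENS_PER_FRAME` value are illustrative assumptions, not figures from the paper.

```python
def attention_score_count(num_tokens: int) -> int:
    """Entries in a dense query-key score matrix: one per (query, key) pair."""
    return num_tokens * num_tokens


def recurrent_step_count(num_tokens: int) -> int:
    """A recurrent (SSM-style) scan visits each token once."""
    return num_tokens


TOKENS_PER_FRAME = 256  # hypothetical value, not taken from the paper

for frames in (16, 64, 256):
    n = frames * TOKENS_PER_FRAME
    ratio = attention_score_count(n) // recurrent_step_count(n)
    print(f"{frames:4d} frames -> {n:6d} tokens, attention/scan work ratio: {ratio}")
```

The ratio between the two counts equals the number of tokens, so the gap widens with every frame added to the context.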

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

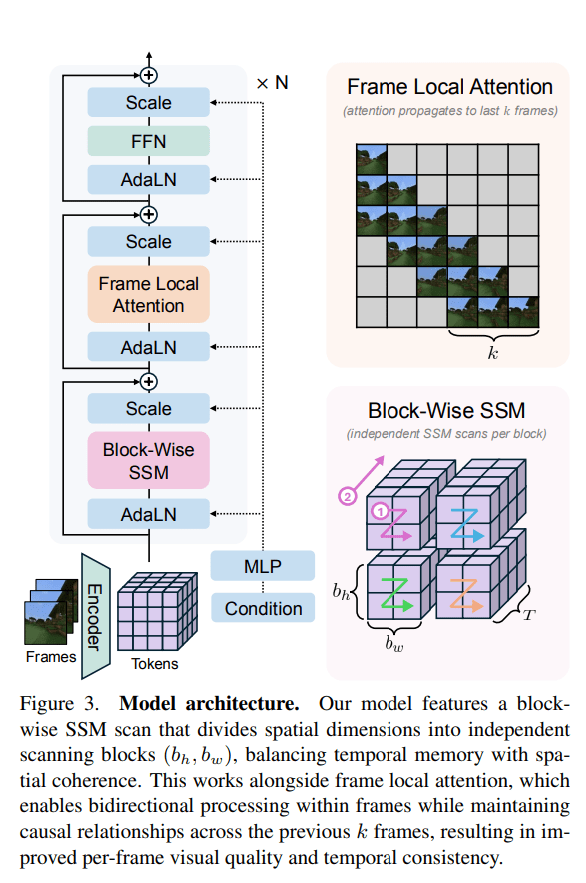

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
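The trade-off described in these two points can be illustrated with a toy example. The sketch below is not the authors' implementation: it assumes a scalar linear recurrence as a stand-in for an SSM, uses one-dimensional "frames" (a row of tokens), and assumes that the blocks are spatial slices of each frame that are scanned independently through time. All names and parameters are hypothetical.

```python
from typing import List


def ssm_scan(xs: List[float], decay: float = 0.9, gain: float = 0.1) -> List[float]:
    """Toy SSM: h_t = decay * h_{t-1} + gain * x_t, with output y_t = h_t."""
    h = 0.0
    ys = []
    for x in xs:
        h = decay * h + gain * x
        ys.append(h)
    return ys


def blockwise_scan(video: List[List[float]], block_w: int,
                   decay: float = 0.9, gain: float = 0.1) -> List[List[float]]:
    """Scan each spatial block of columns independently over time.

    video[t][w] holds T frames of W scalar tokens. With block_w == W this
    reduces to a single scan over the whole flattened sequence.
    """
    num_frames, width = len(video), len(video[0])
    if width % block_w != 0:
        raise ValueError("block_w must divide the frame width")
    out = [[0.0] * width for _ in range(num_frames)]
    for start in range(0, width, block_w):
        cols = range(start, start + block_w)
        # Flatten this block in (time, column) order and scan it on its own.
        seq = [video[t][w] for t in range(num_frames) for w in cols]
        ys = ssm_scan(seq, decay, gain)
        i = 0
        for t in range(num_frames):
            for w in cols:
                out[t][w] = ys[i]
                i += 1
    return out


# One impulse at frame 0, column 0; everything else is zero.
video = [[0.0] * 8 for _ in range(3)]
video[0][0] = 1.0

full = blockwise_scan(video, block_w=8)   # single scan over all tokens
blocked = blockwise_scan(video, block_w=2)

print("memory of the impulse at frame 2:", full[2][0], "vs", blocked[2][0])
print("influence on column 2 of frame 0:", full[0][2], "vs", blocked[0][2])
```

In this toy setting the block-wise scan remembers the impulse more strongly two frames later, because fewer tokens sit between the same position in consecutive frames. The price is that the impulse no longer reaches tokens outside its block, which is the kind of spatial gap that local attention is meant to cover.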

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: This technique encourages the model to generate frames conditioned on a prefix of the input, effectively forcing it to learn to maintain consistency over longer durations. By sometimes not sampling a prefix and keeping all tokens noised, the training becomes equivalent to diffusion forcing, which is highlighted as a special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
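Both strategies can be sketched in a few lines of plain Python. This is an illustration under stated assumptions rather than the paper's code: it assumes that prefix frames are kept noise-free, that noise levels are drawn uniformly and independently per frame, and that a window of 10 with chunks of 5 means each frame sees its own chunk plus the previous one. The function names are hypothetical, and the mask is built as a nested list instead of going through FlexAttention.

```python
import random
from typing import List


def sample_noise_levels(num_frames: int, prefix_len: int,
                        rng: random.Random) -> List[float]:
    """Per-frame noise levels in [0, 1): a clean prefix, then independent noise.

    prefix_len == 0 noises every frame, the diffusion-forcing special case.
    """
    if not 0 <= prefix_len <= num_frames:
        raise ValueError("prefix_len must be between 0 and num_frames")
    clean = [0.0] * prefix_len  # assumption: prefix frames carry no noise
    noised = [rng.random() for _ in range(num_frames - prefix_len)]
    return clean + noised


def frame_local_allowed(q_idx: int, kv_idx: int,
                        tokens_per_frame: int, chunk_size: int = 5) -> bool:
    """True if the query token may attend to the key token.

    Tokens attend bidirectionally within their own chunk of frames and
    also to every token in the previous chunk.
    """
    q_chunk = (q_idx // tokens_per_frame) // chunk_size
    kv_chunk = (kv_idx // tokens_per_frame) // chunk_size
    return kv_chunk == q_chunk or kv_chunk == q_chunk - 1


def frame_local_mask(num_frames: int, tokens_per_frame: int,
                     chunk_size: int = 5) -> List[List[bool]]:
    """Dense boolean mask, rows are queries and columns are keys."""
    n = num_frames * tokens_per_frame
    return [[frame_local_allowed(q, k, tokens_per_frame, chunk_size)
             for k in range(n)] for q in range(n)]


levels = sample_noise_levels(num_frames=8, prefix_len=3, rng=random.Random(0))
print("noise levels:", [round(x, 2) for x in levels])

mask = frame_local_mask(num_frames=15, tokens_per_frame=1)
print("frames visible to frame 7:", [k for k, ok in enumerate(mask[7]) if ok])
```

A predicate shaped like `frame_local_allowed` is the kind of rule a block-sparse attention kernel can exploit, since whole chunks of the mask are either fully visible or fully hidden.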

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Hot ai: Doctor Reels as Son Becomes Plumber in Age of AI

Fear not, dreary laborer. There will always be back-breaking jobs you can do when your office ones get taken over by obsequious AI models.

For one reason or another, plumbing is the profession that AI figures keep coming back to when they assure us that there’ll still be leftovers after an AI jobs apocalypse. A new piece in the Financial Times explores how plumbing has become the “career talisman” in an age of automation, and how this grates against traditional social perceptions of these professions.

Take one doctor’s reaction to her son’s decision to become a plumber. The first in her family to go to university, she told the FT she felt “oddly guilty” about his career choice, as if she was letting down her parents who worked hard to elevate her social and academic position.

It felt like “I was somehow not paying forward,” she told the FT. “Am I the blip in my family’s more traditional working-class journey?”

It points to an interesting cultural moment. A recent Wired piece cited Anirban Basu, chief economist of construction industry trade group Associated Builders and Contractors, who described how in earlier eras, tradespeople passed their skills down to their children, but eventually started encouraging them to pursue higher education instead. Basu claimed that, as a result, construction workers with the most advanced skills are now entering retirement age. Meanwhile, a Jobber survey cited by the FT found that only seven percent of parents would prefer their kids to pursue a trade or vocation.

On the one hand, skilled trades like plumbing and electrical work seem like safe bets amid uncertainty over how AI will automate white-collar jobs, from writers, secretaries, and doctors to even the programmers building the AI systems themselves. And why shouldn’t skilled vocations be seen with the same prestige and appreciation as white-collar callings?

In fact, the Wired piece reported that the AI boom is driving demand for construction workers. With the rapid build-out of AI data centers, there’s a growing shortage of the skilled tradespeople who can help build these advanced facilities. A McKinsey study estimated that an additional 130,000 trained electricians would be needed in the US between 2023 and 2030. Tech companies themselves are sounding the alarm, with Google last year donating an undisclosed amount to the Electrical Training Alliance. Nvidia CEO Jensen Huang proclaimed that “if you’re an electrician, you’re a plumber, a carpenter, we’re going to need hundreds of thousands of them to build all of these factories.”

But on the other hand, there’s a certain nefarious element to powerful figures glorifying physically grueling professions that aren’t easy to get into. Kepler Ridge told the FT that the money he made through plumbing is what allowed him to buy a home. But after six years in the trade, he left to go to grad school for biology, and he offered words of caution to other people who want to take up plumbing themselves.

“It was extremely physical,” he told the FT. “I was exhausted at the end of every single day. I found myself wanting to come home and go to bed.”

What’s more, while there’s demand for these jobs, there’s no guarantee you’ll even be able to nab an apprenticeship. And you’ll be competing against everyone else trying to get in while the going’s supposed to be good, too. In northern Virginia, home to the country’s “data center alley” where construction of these facilities continues to surge, there’s no shortage of people applying to become plumbers, Chris Madello, an international representative with the United Association, told Wired.

“We always have far more people applying than we actually accept into our apprenticeship programs,” Madello added.


The post Doctor Reels as Son Becomes Plumber in Age of AI appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Notes

This article is an automated summary compiled from several trusted sources. We pick trending topics so you stay up to date without missing anything.
