📌 MAROKO133 AI Update: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention with respect to sequence length. Every token is compared with every other token, so doubling the video context roughly quadruples the attention cost. As the context grows, this cost quickly becomes prohibitive, and practical models cap how many past frames they attend to. Beyond that limit the model effectively “forgets” earlier events, which hurts its performance on tasks that demand long-range coherence or reasoning over extended periods.
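Some back-of-the-envelope arithmetic makes the scaling concrete. This Python sketch assumes a hypothetical 64 tokens per frame. It counts query-key scores for full attention against one state update per token for a recurrent SSM scan. Real costs also depend on model width, kernels, and causal masking, so treat the numbers as illustrative only.

```python
TOKENS_PER_FRAME = 64  # hypothetical; real video tokenizers vary widely


def attention_scores(num_frames: int, tokens_per_frame: int = TOKENS_PER_FRAME) -> int:
    """Query-key pairs scored by full attention over every token in the context."""
    n = num_frames * tokens_per_frame
    return n * n  # every token is scored against every token: O(n^2)


def ssm_updates(num_frames: int, tokens_per_frame: int = TOKENS_PER_FRAME) -> int:
    """State updates performed by a recurrent SSM scan over the same tokens."""
    return num_frames * tokens_per_frame  # one fixed-size state update per token: O(n)


if __name__ == "__main__":
    for frames in (100, 200, 400, 800):
        print(f"{frames:4d} frames: attention {attention_scores(frames):>14,d}  SSM {ssm_updates(frames):>8,d}")
```

Doubling the number of frames doubles the SSM work but quadruples the attention work. That gap is why attention-based models limit how many past frames they keep.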

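The authors’ key insight is to use State-Space Models (SSMs) where they are naturally strong: causal sequence modeling. An SSM processes a sequence recurrently and compresses the past into a fixed-size state, so its cost grows linearly rather than quadratically with sequence length. Previous attempts retrofitted SSMs for non-causal vision tasks. This work instead applies them to the causal setting that video world models require.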

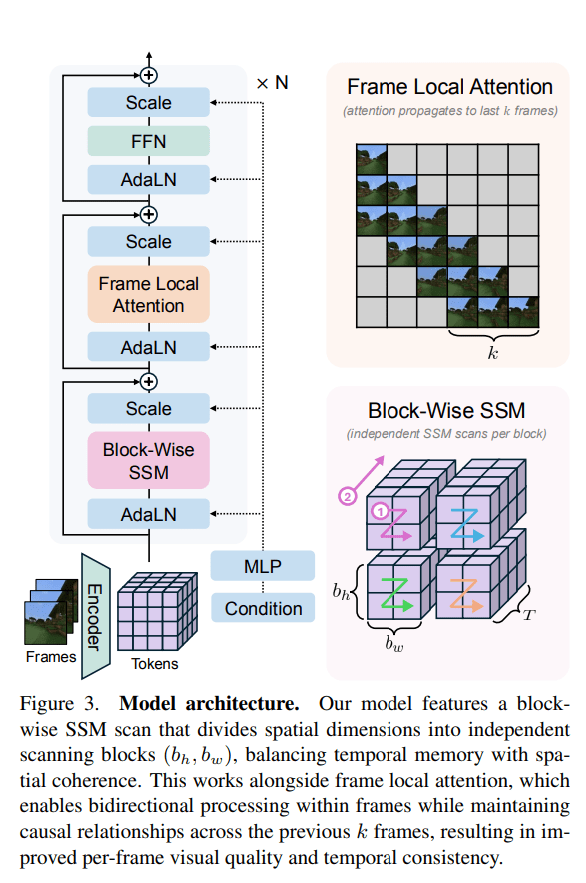

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
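A toy scalar linear SSM can illustrate the state-carrying idea behind the block-wise scheme. This sketch is a simplification and not the paper’s Mamba2-based layers. The parameters `A`, `B`, `C` and the helper names are hypothetical, and the sketch ignores the spatial layout of video tokens entirely. It shows only one thing: if you scan block by block and pass each block’s final state into the next, you reproduce a single long scan. Information from early inputs therefore survives across block boundaries at constant memory cost.

```python
from typing import List, Tuple

# Hypothetical scalar SSM parameters; real SSM layers learn these per channel.
A, B, C = 0.9, 0.5, 1.0


def ssm_scan(xs: List[float], h0: float = 0.0) -> Tuple[List[float], float]:
    """Run h_t = A*h_{t-1} + B*x_t and y_t = C*h_t; return outputs and the final state."""
    h = h0
    ys: List[float] = []
    for x in xs:
        h = A * h + B * x
        ys.append(C * h)
    return ys, h


def blockwise_scan(xs: List[float], block_size: int) -> List[float]:
    """Scan fixed-size blocks in order, carrying the compressed state across block boundaries."""
    h = 0.0
    ys: List[float] = []
    for start in range(0, len(xs), block_size):
        block_ys, h = ssm_scan(xs[start:start + block_size], h)
        ys.extend(block_ys)
    return ys


if __name__ == "__main__":
    impulse = [1.0] + [0.0] * 39  # a single event at t=0, then nothing
    ys = blockwise_scan(impulse, block_size=8)
    # The event still influences the output 39 steps and four block boundaries later.
    print(f"y[39] = {ys[39]:.5f}")
```

The carried state is a fixed-size summary, so memory does not grow with the number of blocks. The trade-off is that everything the model remembers must be compressed into that state.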

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: The model is trained to generate noised frames conditioned on a clean prefix of the input, which forces it to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, training becomes equivalent to diffusion forcing. The authors highlight this as a special case of long-context training in which the prefix length is zero. It pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism using PyTorch’s FlexAttention, which gives significant speedups over a fully causal mask. Frames are grouped into chunks (e.g., chunks of 5 with a frame window size of 10). Frames within a chunk attend to each other bidirectionally and also attend to frames in the previous chunk. Each frame therefore sees up to 10 frames, which keeps a useful local receptive field while bounding the attention cost.
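The prefix scheme described under Diffusion Forcing can be sketched as a per-frame noise-level sampler. This is a minimal illustration under stated assumptions, not the authors’ training code. The schedule length `NUM_NOISE_LEVELS`, the probability `p_no_prefix`, the uniform choice of prefix length, and the function names are all hypothetical. Frames in the prefix get noise level 0 (clean context), and later frames get independent random noise levels. A prefix length of zero leaves every frame independently noised, which is the diffusion-forcing special case.

```python
import random
from typing import List, Tuple

NUM_NOISE_LEVELS = 1000  # hypothetical diffusion schedule length


def sample_noise_levels(num_frames: int, prefix_len: int, rng: random.Random) -> List[int]:
    """Level 0 (clean) for the context prefix; an independent random level for every later frame."""
    return [0 if t < prefix_len else rng.randint(1, NUM_NOISE_LEVELS) for t in range(num_frames)]


def sample_training_levels(num_frames: int, p_no_prefix: float, rng: random.Random) -> Tuple[int, List[int]]:
    """Pick a prefix length (sometimes zero), then sample per-frame noise levels."""
    if rng.random() < p_no_prefix:
        prefix_len = 0  # no clean context: all frames noised independently (diffusion forcing)
    else:
        prefix_len = rng.randint(1, num_frames - 1)  # hypothetical uniform prefix length
    return prefix_len, sample_noise_levels(num_frames, prefix_len, rng)


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(3):
        prefix_len, levels = sample_training_levels(num_frames=8, p_no_prefix=0.25, rng=rng)
        print(prefix_len, levels)
```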
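The chunked mask described under Frame Local Attention can be written as a simple predicate over frame indices. This sketch uses the article’s example numbers: chunks of 5 frames, giving a window of at most 10 frames. The names are hypothetical. A real implementation would express the same rule over token indices and pass it to PyTorch’s FlexAttention as a `mask_mod`, rather than building a dense matrix.

```python
from typing import List

CHUNK = 5  # frames per chunk, from the article's example; window = 2 * CHUNK = 10 frames


def can_attend(q_frame: int, kv_frame: int, chunk: int = CHUNK) -> bool:
    """A query frame sees every frame in its own chunk (bidirectional) and in the previous chunk."""
    q_chunk = q_frame // chunk
    kv_chunk = kv_frame // chunk
    return kv_chunk == q_chunk or kv_chunk == q_chunk - 1


def frame_mask(num_frames: int, chunk: int = CHUNK) -> List[List[bool]]:
    """Dense boolean mask: rows are query frames, columns are key/value frames."""
    return [[can_attend(q, k, chunk) for k in range(num_frames)] for q in range(num_frames)]


if __name__ == "__main__":
    for q, row in enumerate(frame_mask(15)):
        print(f"{q:2d} " + "".join("#" if allowed else "." for allowed in row))
```

Each printed row shows which frames a query frame can see. Frames inside a chunk see each other in both directions, and no frame sees a later chunk, so generation stays causal at the chunk level.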

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 AI Exclusive: New York’s Beloved Bodegas Are Filling Up With AI Slop

Not even the most enduring symbol of New York City is safe from the AI onslaught. No, we’re not talking about the iconic pizza rat, or even the stable of mascots wandering Times Square like some kind of sad, brand-name purgatory. We’re talking about bodegas, the ubiquitous corner stores that first emerged in the 1950s as waves of Puerto Rican migrants began calling the city home.

If you’re not from New York, they may just seem like corner stores, and on a certain level, that’s true. But they’re a special part of the fabric of the city. Even Chicago, widely considered the USA’s second-most walkable city after New York, pales in comparison to the latter’s sheer number of convenience stores.

With an estimated 13,000 bodegas across the five boroughs, it was probably only a matter of time before some of them began turning to AI slop for their in-store signage. As first reported by Hell Gate, many of the city’s beloved convenience stores are really going for it, replacing their time-honored retail graphics with uncanny garbage generated by the likes of ChatGPT.

Gone are the days of human-made deli signage, the kind jam-packed with sometimes-dubious stock pics of tortas, deli meat, toilet paper, and coffee. Now is the era of slop signage, exemplified by stores like Blend and Bites in Brooklyn, whose logo features an uncanny burger, what appears to be a water-based smoothie, and cartoon berries crammed together onto one indecipherable mess of a sign.

One Imgur post captures a particularly depressing tableau: a bodega window papered with AI-generated slop, including hallucinated text like “RUSTORS” and “POTIORS.” The real nightmare fuel waits in the corner, where a woman appears to have fused her face with the store’s branding.

Hell Gate found a few beauties as well, such as a logo for “Maza Cloud Kitchens,” which features a chicken wing with a belt loop, and a sign for bottomless mimosas featuring waffles topped with Brussels sprouts.

New Yorkers, for their part, aren’t thrilled with the new ad movement taking over their local haunts, especially at a time when human artists are struggling like never before.

“Everything looks like a tacky weed dispensary,” one netizen observed under Hell Gate’s Instagram post on the subject.

“I really don’t get why businesses don’t hire artists,” one poster commented on the r/BedStuy subreddit. “I myself would do it for free, I just would love to see actual art when walking outside rather than AI images or generic images taken from the internet.”

The post New York’s Beloved Bodegas Are Filling Up With AI Slop appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Note

This article is an automatic summary compiled from several trusted sources. We pick trending topics so you always stay up to date without missing out.

✅ Next update in 30 minutes: a random topic awaits!