📌 MAROKO133 AI Update: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the cost of attention layers grows quadratically (doubling the context roughly quadruples the work), making long-term memory impractical for real-world applications. As a result, beyond a certain number of frames the model effectively “forgets” earlier events, which hurts its performance on tasks that demand long-range coherence or reasoning over extended periods.
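To make the scaling argument concrete, here is a back-of-the-envelope count in Python. It is illustrative only and not taken from the paper: it assumes a hypothetical 256 latent tokens per frame and counts entries of a full self-attention score matrix against state updates of a linear recurrent scan. Causal masking roughly halves the attention count, but its growth stays quadratic.

```python
# Back-of-the-envelope cost model (illustrative only; not from the paper).
TOKENS_PER_FRAME = 256  # hypothetical number of latent tokens per frame


def attention_score_entries(num_tokens: int) -> int:
    """Entries in a full self-attention score matrix: grows as n^2."""
    return num_tokens * num_tokens


def ssm_scan_steps(num_tokens: int) -> int:
    """State updates in a linear recurrent scan: grows as n."""
    return num_tokens


for frames in (16, 32, 64):
    n = frames * TOKENS_PER_FRAME
    print(f"{frames:>3} frames: attention entries = {attention_score_entries(n):,}, "
          f"scan steps = {ssm_scan_steps(n):,}")
```

Each doubling of the context quadruples the attention entries (16,777,216 → 67,108,864 → 268,435,456) while the scan steps only double (4,096 → 8,192 → 16,384).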

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

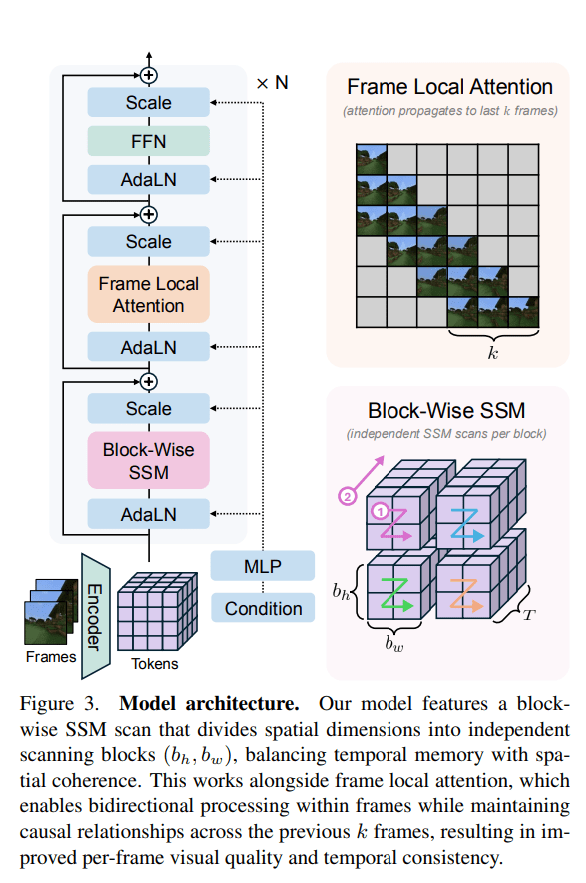

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates two crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
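The block-wise idea relies on one property of SSMs: a fixed-size state can be handed from one block to the next, so splitting a long sequence into blocks does not have to cut off memory of earlier blocks. The NumPy sketch below shows only that property, using a toy diagonal linear recurrence (`h_t = a * h_{t-1} + b * x_t`). It is not the paper's Mamba2-based layer, and it does not reproduce the authors' spatial block ordering or the dense local attention. The function names, state size and decay value are hypothetical.

```python
import numpy as np


def ssm_scan(x, a, b, c, h0):
    """Toy diagonal linear SSM over a 1-D input sequence (hypothetical helper).

    h_t = a * h_{t-1} + b * x_t   (elementwise, state size N)
    y_t = c . h_t
    Returns the outputs, shape (len(x),), and the final state, shape (N,).
    """
    h = h0.copy()
    ys = np.empty(len(x))
    for t, x_t in enumerate(x):
        h = a * h + b * x_t      # fold the new input into the fixed-size state
        ys[t] = c @ h            # read the output from the state
    return ys, h


def blockwise_scan(x, a, b, c, block_len):
    """Scan `x` one block at a time, passing the state across block boundaries."""
    h = np.zeros_like(a)
    outs = []
    for start in range(0, len(x), block_len):
        y_blk, h = ssm_scan(x[start:start + block_len], a, b, c, h)
        outs.append(y_blk)
    return np.concatenate(outs), h


rng = np.random.default_rng(0)
state_size = 16
a = np.full(state_size, 0.99)            # slow decay -> long memory
b = rng.standard_normal(state_size)
c = rng.standard_normal(state_size)
x = rng.standard_normal(1_000)           # stand-in for a long token sequence

y_one_pass, _ = ssm_scan(x, a, b, c, np.zeros(state_size))
y_blocks, h_final = blockwise_scan(x, a, b, c, block_len=64)
print(np.allclose(y_one_pass, y_blocks), h_final.shape)
```

Because the state has a fixed size (16 numbers here) however many tokens have been scanned, compute grows linearly with sequence length. Resetting `h` to zero at every block boundary instead would discard everything seen in earlier blocks.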

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: The model is trained to generate frames conditioned on a clean prefix of the input, which forces it to maintain consistency over longer durations. When no prefix is sampled and all tokens stay noised, training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism using FlexAttention, which yields significant speedups compared to a fully causal mask. Frames are grouped into chunks (e.g., chunks of 5 with a frame window size of 10): frames within a chunk attend to each other bidirectionally and also attend to frames in the previous chunk. Each frame thus keeps a local receptive field of up to 10 frames while the per-frame attention cost stays bounded.
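Both training ideas come down to a little index bookkeeping. The NumPy sketch below is a hypothetical illustration, not the authors' code. `sample_noise_levels` draws per-frame noise levels for one clip, keeping a randomly sized clean prefix and occasionally using a prefix of zero, where every frame is noised with an independent level (the diffusion-forcing case); the values of `p_no_prefix`, `max_prefix` and `num_steps` are assumptions. `frame_local_mask` builds the frame-level pattern described above for chunks of 5 frames and a window of 10. FlexAttention expresses such a rule as a `mask_mod(b, h, q_idx, kv_idx)` predicate over token indices; mapping token indices to frame indices is left out here.

```python
import numpy as np


def sample_noise_levels(num_frames, rng, max_prefix=8, p_no_prefix=0.1, num_steps=1000):
    """Per-frame diffusion noise levels for one training clip (hypothetical scheme).

    With probability `p_no_prefix` no clean prefix is kept: every frame gets an
    independently drawn noise level, i.e. plain diffusion forcing. Otherwise the
    first `prefix` frames stay clean (level 0) and act as conditioning context.
    """
    if rng.random() < p_no_prefix:
        prefix = 0
    else:
        prefix = int(rng.integers(1, max_prefix + 1))
    levels = rng.integers(1, num_steps, size=num_frames)  # independent level per frame
    levels[:prefix] = 0                                     # clean conditioning prefix
    return levels, prefix


def frame_local_allowed(q_frame, kv_frame, chunk=5, window=10):
    """Whether a query frame may attend to a key/value frame.

    A query sees its whole chunk (bidirectional) plus the earlier chunks that fit
    in `window` frames; with chunk=5, window=10 that is its own and the previous chunk.
    Only //, comparisons and & are used, so it also works elementwise on arrays.
    """
    chunks_visible = window // chunk
    q_chunk = q_frame // chunk
    kv_chunk = kv_frame // chunk
    return (kv_chunk <= q_chunk) & (kv_chunk > q_chunk - chunks_visible)


def frame_local_mask(num_frames, chunk=5, window=10):
    """Dense boolean (num_frames, num_frames) mask, True where attention is allowed."""
    idx = np.arange(num_frames)
    return frame_local_allowed(idx[:, None], idx[None, :], chunk, window)


rng = np.random.default_rng(0)
levels, prefix = sample_noise_levels(num_frames=16, rng=rng)
print(prefix, levels[:prefix + 2])

mask = frame_local_mask(15)
print(mask.astype(int))
```

With these settings frame 7 (second chunk) may attend to frames 0 through 9, frame 12 only to frames 5 through 14, and no frame attends to a later chunk. A dense mask like this is only for inspection; FlexAttention's block masks let fully masked-out regions be skipped rather than computed.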

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 AI Exclusive: Tech Companies Are Using Insidious Tactics to Build Data Centers on Indigenous Lands, Activists Say

Last month, the Seminole Nation of Oklahoma became the first Indigenous nation to officially ban data center construction from its land.

When a tech startup approached Tribal leaders asking them to sign a nondisclosure agreement along with a letter of intent to construct a data center on Seminole territory, the Tribal Council unanimously shot them down, voting 24 to 0 to instead enact a permanent data center moratorium.

The Seminole Nation isn’t alone in fighting off predatory tech firms. Across the country, data center developers are using underhanded tactics to ram their server farms onto Indigenous land, whether Native communities want them there or not.

In an interview with Democracy Now’s Amy Goodman, activist Krystal Two Bulls, executive director of Honor the Earth (an Indigenous-led environmental organization that helped the Seminole Nation assert its rights against the unscrupulous data center startup), said there are anywhere from 103 to 160 proposed hyperscale data centers seeking to build on Native lands.

One of the tactics Native tribes are increasingly seeing is the bait-and-switch, in which developers come in looking to build renewable energy infrastructure, only to swap the plan to a data center at the last minute.

“What we’re hearing from different Native nations is that corporations will come, they’ll start by talking about solar panels and installing that on their lands, and then it quickly shifts to a hyperscale data center,” Two Bulls said. “But often, before they even get to that conversation, they’re asking them to sign an NDA. And so that makes our tribal leadership accountable to them and not to the people… they’re actually supposed to represent.”

For the activists at Honor the Earth, not to mention Tribal communities themselves, that means proposed data center projects are often difficult even to identify, never mind organize against, until they’re well underway.

“Oftentimes we don’t know that these projects are coming to our lands until we hear in a press release or on the news or we hear rumors of what’s happening,” Two Bulls explained.

Data center developers are all too happy to take advantage of rural communities throughout the US. But Two Bulls says Tribal nations make particularly appealing targets for exploitation due to their ample water and baked-in tax incentives, to say nothing of the kind of economic desperation created by centuries of dispossession and neglect.

“When you are dealing with communities that often live in extreme poverty, the promise of these jobs is something that appeals to them, right?” Two Bulls said. “And then we also have the jurisdictional issues that happen on Indigenous land. So, all of those create an environment that is very conducive to these hyperscale data centers being built on Native lands.”

Against the whims of some of the most powerful corporations on earth, it seems the only solution is to get organized. Thanks in large part to efforts by organizations like Honor the Earth — which launched the No Data Centers Coalition in 2025 — politicians at all levels are starting to wake up and pass anti-data center resolutions.

In Tulsa and Oklahoma City, city officials recently voted to ban any incoming data center development until 2027. They’re temporary victories, but a testament to the kind of people power groups like Honor the Earth are harnessing to defend their homes.

“We were there in Tulsa to pass the moratorium last month, and we were there this morning in Oklahoma City to show that the community does not want these data centers,” Ash Leitka, director of Honor the Earth’s Department of Sovereignty and Self Determination, told Futurism. “We want clean water. We want privacy and security. We want jobs and economic stability, all of which hyperscale data centers threaten and negatively impact. The falsely generated demand for AI and AI infrastructure truly is a death cycle.”


The post Tech Companies Are Using Insidious Tactics to Build Data Centers on Indigenous Lands, Activists Say appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Note

This article is an automated roundup from several trusted sources. We pick trending topics so you can always stay up to date without missing a thing.

✅ Next update in 30 minutes, with a random topic waiting!