📌 MAROKO133 Hot ai: Adobe Research Unlocking Long-Term Memory in Video World Model

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
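To make the scaling argument concrete, here is a minimal back-of-the-envelope sketch. It only counts operations; the figure of 256 tokens per frame and the function names are illustrative assumptions, not values from the paper.

```python
def attention_pairs(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention over the whole video."""
    seq_len = num_frames * tokens_per_frame
    return seq_len * seq_len  # quadratic in sequence length


def scan_steps(num_frames: int, tokens_per_frame: int) -> int:
    """State updates performed by a linear-time recurrent scan."""
    return num_frames * tokens_per_frame  # linear in sequence length


if __name__ == "__main__":
    # 256 tokens per frame is an assumed, illustrative value.
    for frames in (16, 64, 256):
        pairs = attention_pairs(frames, tokens_per_frame=256)
        steps = scan_steps(frames, tokens_per_frame=256)
        print(f"{frames:4d} frames: attention pairs={pairs:,}  scan steps={steps:,}")
```

Quadrupling the number of frames multiplies the attention pair count by 16 but the scan step count by only 4, which is the gap the paper sets out to exploit.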

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

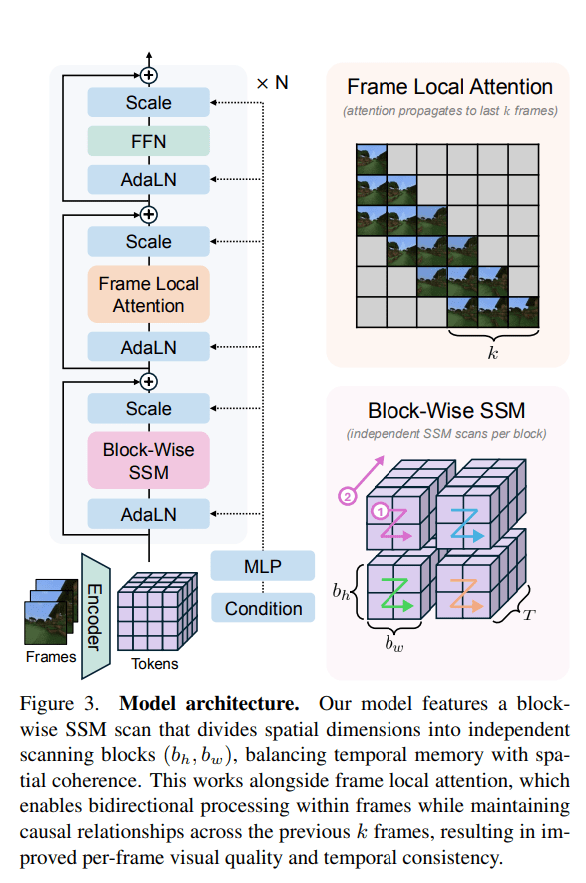

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
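The trade-off between the two components can be illustrated with a toy sketch. It assumes the blocks are spatial regions of each frame, each scanned along time with its own running state, and it swaps in a scalar recurrence `h = decay * h + gain * x` for a real SSM layer. The function name and the `decay` and `gain` parameters are hypothetical; this is not the authors' implementation.

```python
from typing import List


def blockwise_scan(frames: List[List[float]], block_size: int,
                   decay: float = 0.9, gain: float = 0.1) -> List[List[float]]:
    """Toy block-wise scan over a video.

    frames[t] is a flat list of token values for frame t. Tokens are
    grouped into spatial blocks of `block_size`; each block keeps its
    own scalar state that is carried from one frame to the next.
    """
    num_tokens = len(frames[0])
    num_blocks = (num_tokens + block_size - 1) // block_size
    states = [0.0] * num_blocks  # one compressed state per spatial block
    outputs = []
    for frame in frames:
        out = []
        for i, x in enumerate(frame):
            b = i // block_size  # spatial block this token belongs to
            states[b] = decay * states[b] + gain * x
            out.append(states[b])
        outputs.append(out)
    return outputs


if __name__ == "__main__":
    # A signal appears in block 0 of the first frame only.
    video = [[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.0]]
    for row in blockwise_scan(video, block_size=2):
        print(row)
```

In this toy, block 0 still carries a trace of the first frame's signal into the second frame, while block 1 never sees it. That isolation between blocks is the kind of spatial inconsistency that local attention is meant to repair.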

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model generates frames conditioned on a prefix of the input, which forces it to learn to stay consistent over longer durations. When no prefix is sampled and all tokens are kept noised, this training reduces to diffusion forcing, which the paper highlights as the special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
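Both training strategies can be sketched in a few lines of plain Python. The first function assumes that "prefix" means leading frames kept noise-free while the remaining frames get independent noise levels in [0, 1). The second builds a boolean frame-level mask from the chunk and window sizes quoted above. All names here are hypothetical, and the mask is written as nested lists rather than as a FlexAttention mask function.

```python
import random
from typing import List


def sample_noise_levels(num_frames: int, prefix_len: int,
                        rng: random.Random) -> List[float]:
    """Clean prefix, independently noised remainder.

    With prefix_len == 0 every frame is noised, which is the
    diffusion-forcing special case described above.
    """
    return [0.0 if t < prefix_len else rng.random() for t in range(num_frames)]


def frame_local_mask(num_frames: int, chunk_size: int = 5,
                     window: int = 10) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k.

    Frames in the same chunk see each other in both directions; a frame
    also sees earlier chunks that fall inside the window.
    """
    chunks_in_window = window // chunk_size
    mask = []
    for q in range(num_frames):
        row = []
        for k in range(num_frames):
            gap = q // chunk_size - k // chunk_size  # chunks between q and k
            row.append(0 <= gap < chunks_in_window)
        mask.append(row)
    return mask


if __name__ == "__main__":
    print(sample_noise_levels(8, prefix_len=3, rng=random.Random(0)))
    for row in frame_local_mask(15):
        print("".join("x" if allowed else "." for allowed in row))
```

With chunks of 5 and a window of 10, each frame attends to at most 10 frames regardless of video length, so the cost of this attention grows linearly with the number of frames.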

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper Long-Context State-Space Video World Models is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Exclusive ai: AI Appears to Be Trapping Certain Job Applicants in a Li

For workers already enmeshed in the US workforce, AI is akin to a far-off asteroid, a looming threat that could impact all life on Earth. Our best experts can’t agree on its trajectory or even its distance from our planet, but knowledge of its presence is enough to throw society into a fervor. There’s no way to know: it could hit with such force that it lays waste to all but a chosen few, or it might miss us entirely, as gravitational forces alter the conditions of a flight path not yet written in the stars.

Then there are the masses of unemployed — maybe they’re stranded astronauts, in this tortured metaphor — for whom worrying about the asteroid with two feet on solid ground would be a luxury. For these spacefarers, the asteroid takes a back seat to the more pressing issue of returning home. Yet the asteroid is already making that increasingly impossible, its gravitational force pulling their spacecraft further from the trajectory they need to make it back Earthside.

This is the situation for disenfranchised workers across the world. As many debate whether AI will automate all jobs in service of a privileged few, others live with the reality that it’s already made entering the workforce a Herculean task. It’s not an automation story in the traditional sense, but an enshittification one, where many feel that AI as a hiring tool has made even landing a job interview a nearly unattainable fantasy.

Take, for example, the story of Chad Markey, a 33-year-old graduate-to-be from an Ivy League medical school. A comprehensive article by Wired broke down the ways in which Markey has been screwed by AI screening software, which seems to have very nearly destroyed his chances at being accepted into a residency program.

Having applied to 82 such programs for the 2025-2026 cycle, Markey was shocked at the number of flat-out denials coming in. He had good grades from an Ivy League school, Wired noted, as well as at least 10 published research papers to his name, and effusive letters of recommendation from his professors.

But he also had three separate leaves of absence on his record, owing to debilitating flare-ups of an autoimmune disease known as ankylosing spondylitis. Though the leaves of absence were medically necessary — which Markey explained in a letter in his applications — they were technically categorized as “voluntary.” That’s a minor detail which, if misinterpreted by a sloppy AI system, could have devastating consequences for his applications.

“I crawled out of a f**king black hole,” he told Wired. “I could not walk for six months. I’ve come this far, and this is happening?”

Meanwhile, an AI screening tool called Cortex was taking hospitals by storm. Cortex basically chews up resident application documents and spits them out onto an easy-to-read dashboard, theoretically allowing hiring personnel a broad overview of hundreds of applicants from a wide variety of medical programs. As the software’s creator Thalamus told Wired, the tool is already being used by about 1,500 medical residency programs throughout the US.

As with all AI tools, Cortex has its fair share of issues. As one editorial published in the journal Laryngoscope documented, Cortex presents “persistent errors” which have the “potential to negatively impact residency applications and programs,” particularly the fact that it was known to show inaccurate letter grades for applicants, as Wired reported.

Suspecting Cortex may also be quashing his applications due to his medically necessary leaves of absence, Markey began a months-long quest to get to the bottom of the screening software. After numerous experiments and attempts at reverse-engineering Cortex, the Ivy League student found compelling evidence that the AI tool grades applications with voluntary leaves of absence significantly lower than ones with accurate descriptions of the medical circumstances.

Of the 82 residency programs Markey applied to, only five confirmed to Wired that they were not using Cortex. This doesn’t mean the rest of them were, and Thalamus denied that it algorithmically scored or ranked any applicants for the 2025-2026 residency cycle. But the smoking guns came when Markey began cold-emailing residency administrators, resulting in 10 excited offers from prestigious hospitals that the AI screening tool had failed to deliver. (He’s currently set to start at Columbia University’s psychiatry program at New York Presbyterian Hospital in July.)

Markey’s odyssey underscores the horrifying consequences these tools can have at scale and the lack of transparency they engender. Had Markey been a desperate single mother applying to housekeeping jobs or an elderly worker laid off a year before retirement, there’d be little chance of ever reverse-engineering any hiring tools, let alone landing a job via cold email. For the worker, the result is a kind of black-box reality where AI renders verdicts they may never understand, if they know about them at all.

The post AI Appears to Be Trapping Certain Job Applicants in a Limbo Where They Never Get an Interview for “Reasons” That Are Completely Unfair appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Note

This article is an automated summary drawn from several trusted sources. We pick trending topics so you always stay up to date.

✅ Next update in 30 minutes: a random topic awaits!