📌 MAROKO133 Update AI: Adobe Research Unlocking Long-Term Memory in Video World Mo

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
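To make the scaling argument concrete, here is a minimal sketch that counts pairwise token interactions in full self-attention against the number of state updates in a linear-time recurrent scan. The function names and the tokens-per-frame value are hypothetical, chosen only for illustration; real costs also depend on model width, kernels, and hardware.

```python
def attention_pairs(num_frames: int, tokens_per_frame: int) -> int:
    """Pairwise interactions in full self-attention: quadratic in sequence length."""
    n = num_frames * tokens_per_frame
    return n * n


def scan_steps(num_frames: int, tokens_per_frame: int) -> int:
    """State updates in a recurrent (SSM-style) scan: linear in sequence length."""
    return num_frames * tokens_per_frame


if __name__ == "__main__":
    tokens_per_frame = 64  # hypothetical value, not taken from the paper
    for frames in (16, 32, 64):
        print(frames,
              attention_pairs(frames, tokens_per_frame),
              scan_steps(frames, tokens_per_frame))
```

Doubling the number of frames quadruples the attention count but only doubles the scan count, which is the gap the article describes.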

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

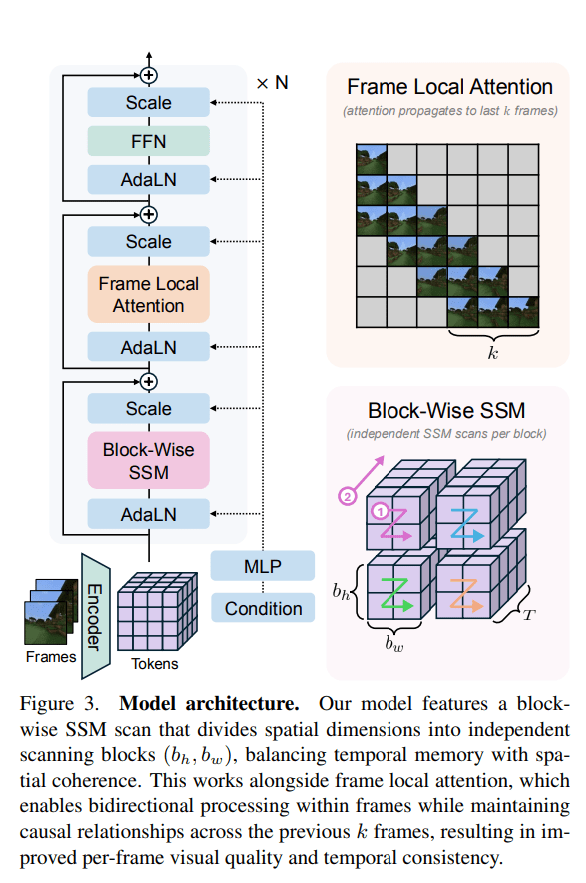

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
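The idea of a compressed state carried from one block to the next can be illustrated with a toy scalar linear recurrence. This is only a sketch under stated assumptions: the recurrence `h_t = a * h_{t-1} + b * x_t`, the coefficients, and the function names are hypothetical, and this is not the paper's actual SSM layer or block layout.

```python
from typing import Iterable, List, Tuple


def scan(xs: Iterable[float], state: float = 0.0,
         a: float = 0.9, b: float = 0.1) -> Tuple[List[float], float]:
    """Run the toy recurrence h_t = a * h_{t-1} + b * x_t, starting from `state`."""
    outputs = []
    for x in xs:
        state = a * state + b * x
        outputs.append(state)
    return outputs, state


def blockwise_scan(xs: List[float], block_size: int) -> List[float]:
    """Process xs one block at a time, handing the final state to the next block."""
    outputs: List[float] = []
    state = 0.0
    for start in range(0, len(xs), block_size):
        block_out, state = scan(xs[start:start + block_size], state)
        outputs.extend(block_out)
    return outputs


if __name__ == "__main__":
    signal = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
    print(blockwise_scan(signal, block_size=3))
```

In this toy setting the input at the first step still influences the output in the last block, because the only thing passed between blocks is the fixed-size state rather than the full history.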

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: The model is trained to generate frames conditioned on a prefix of the input, which forces it to learn to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. It uses FlexAttention to achieve significant speedups compared to a fully causal mask. Frames are grouped into chunks (e.g., chunks of 5 with a frame window size of 10); frames within a chunk attend to each other bidirectionally and also attend to frames in the previous chunk. This keeps a useful receptive field while reducing the computational load.
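The chunked attention pattern described above can be sketched as a boolean mask over frames. This is a minimal illustration, assuming chunks of 5 frames and a window covering the current and previous chunk (10 frames). The function name is hypothetical, and the sketch does not call FlexAttention; it only builds the frame-level pattern that such a mask would encode.

```python
from typing import List


def frame_local_mask(num_frames: int, chunk_size: int = 5) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k.

    A frame sees every frame in its own chunk (bidirectional) and every
    frame in the immediately preceding chunk, and nothing else.
    """
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk_size
        row = []
        for k in range(num_frames):
            k_chunk = k // chunk_size
            row.append(k_chunk == q_chunk or k_chunk == q_chunk - 1)
        mask.append(row)
    return mask


if __name__ == "__main__":
    # Print the pattern for 15 frames: "x" = allowed, "." = masked out.
    for row in frame_local_mask(15):
        print("".join("x" if allowed else "." for allowed in row))
```

With these settings, each frame beyond the first chunk attends to exactly 10 frames regardless of how long the video is, so the attention cost per frame stays constant.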

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments show that the approach substantially surpasses baselines in preserving long-range memory. Qualitative results in the supplementary figures (e.g., S1, S2, S3) illustrate that LSSVWM generates more coherent and accurate sequences over extended periods than models relying solely on causal attention, or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, the model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows an improved ability to recall and use information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Exclusive AI: AMD secures massive 6-gigawatt GPU deal with OpenAI to p

OpenAI has signed a multi-year, multi-generational deal with Advanced Micro Devices (AMD) to deploy up to 6 gigawatts of AMD Instinct GPUs. The partnership marks one of the largest GPU deployment agreements in AI history and could see OpenAI take a 10% stake in the chipmaker.

AMD stock surged 23.71% on Monday following the announcement.

The deal begins with a 1-gigawatt rollout of AMD’s upcoming Instinct MI450 GPUs in the second half of 2026.

The deployment will scale to 6 gigawatts over multiple generations of hardware, powering OpenAI’s next wave of models and services.

AMD and OpenAI described the arrangement as a definitive agreement to deepen their long-term collaboration, which began with the MI300X and continued with the MI350X.

The two companies will share technical expertise to align their product roadmaps and jointly advance AI hardware and software.

“We are thrilled to partner with OpenAI to deliver AI compute at massive scale,” said Dr. Lisa Su, chair and CEO, AMD. “This partnership brings the best of AMD and OpenAI together to create a true win-win enabling the world’s most ambitious AI buildout and advancing the entire AI ecosystem.”

OpenAI CEO Sam Altman said the agreement was “a major step in building the compute capacity needed to realize AI’s full potential.” He added that AMD’s leadership in high-performance chips would help accelerate AI progress and bring its benefits to more people faster.

Equity tie-up and vesting milestones

As part of the partnership, AMD has issued OpenAI a warrant for up to 160 million shares of AMD common stock.

The warrant will vest in tranches tied to deployment milestones and AMD’s share price. The first tranche unlocks with the initial 1-gigawatt rollout, with subsequent tranches tied to the full 6-gigawatt scale-up.

If OpenAI exercises the full warrant, it could acquire roughly 10% of AMD, based on current shares outstanding. The companies declined to disclose the dollar value of the deal but confirmed it spans several billion dollars.

Jean Hu, AMD’s executive vice president and CFO, said the agreement is expected to generate “tens of billions of dollars in revenue for AMD” and be highly accretive to its non-GAAP earnings per share.

Reshaping of AI’s supply chain

OpenAI President Greg Brockman told CNBC the deal was essential to scale AI globally. “We have to do this,” he said. “This is so core to our mission if we really want to be able to scale to reach all of humanity, this is what we have to do.”

He added that OpenAI currently cannot launch several features in ChatGPT and other products due to limited compute capacity.

The partnership also diversifies OpenAI’s chip supply, easing dependence on a single vendor.

Two weeks earlier, OpenAI unveiled a $100 billion equity-and-supply agreement with Nvidia for a dedicated 10-gigawatt portion of its 23-gigawatt AI roadmap. Together, the Nvidia and AMD partnerships represent roughly $1 trillion in new buildout commitments.

AMD CEO Lisa Su told CNBC that AI’s growth will span the next decade. “You need partnerships like this that really bring the ecosystem together to ensure that we can really get the best technologies out there,” she said.

OpenAI is also in talks with Broadcom to design custom chips for future models.

With AMD, Nvidia, Oracle, and others playing key roles, AI’s corporate economy is becoming increasingly circular – one where capital, equity, and compute flow among the same few companies powering the next generation of artificial intelligence.

🔗 Source: interestingengineering.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you can stay up to date.
