📌 MAROKO133 Breaking ai: Simple, eco-friendly method turns world’s toughest plasti

A simple, eco-friendly method has been developed to recycle one of the world’s most durable plastics into useful chemical components.

The energy-efficient method, developed by researchers from Newcastle University and the University of Birmingham, breaks down Teflon (polytetrafluoroethylene, or PTFE).

Known primarily for non-stick coatings, Teflon is a material valued for its exceptional chemical and thermal stability.

That resistance is a double-edged sword: it also makes the material very difficult to recycle.

The new work shows that Teflon waste can be broken down by a clean process that requires only sodium metal and mechanical motion.

The process runs at room temperature and avoids toxic solvents.

“Hundreds of thousands of tonnes of Teflon® are produced globally each year – it’s used in everything from lubricants to coatings on cookware, and currently there are very few ways to get rid of it,” said Dr Roly Armstrong, Lecturer in Chemistry at Newcastle University and corresponding author.

“As those products come to the end of their lives, they currently end up in landfill – but this process allows us to extract the fluorine and upcycle it into useful new materials.”

Shaking-based method

Fluorine is used in about one-third of all new medicines and many advanced materials. However, this vital element is typically sourced from energy-intensive and polluting mining.

The new recycling method changes this by showing it’s possible to recover and reuse fluorine directly from Teflon waste.

When PTFE is disposed of by traditional methods such as incineration, it releases "forever chemicals" (per- and polyfluoroalkyl substances, or PFAS) that persist in the environment for decades.

These pollutants make existing disposal methods an environmental and health concern.

In this new work, the team turned to mechanochemistry, a green chemistry approach that uses mechanical energy (like shaking) to drive chemical reactions instead of high heat.

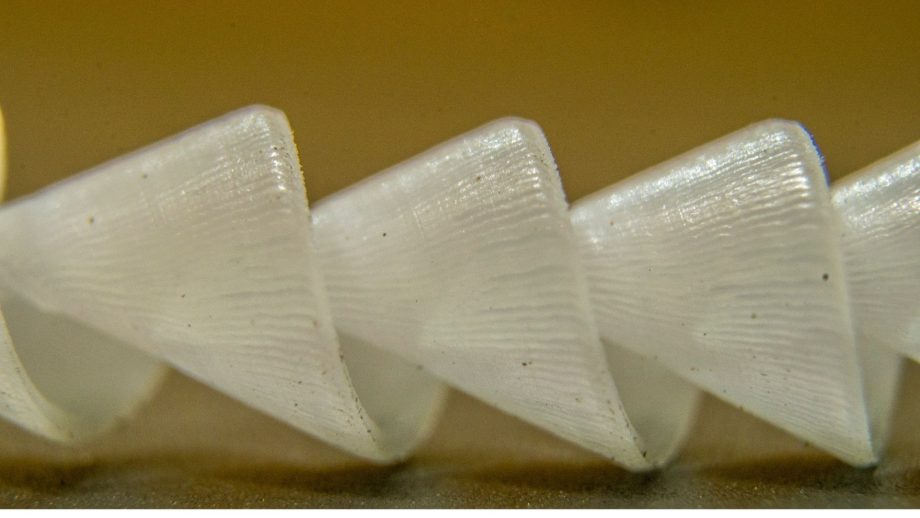

In a ball mill (a sealed steel container in which grinding balls pound the contents as it shakes), sodium metal is ground together with Teflon, triggering a reaction at room temperature.

This reaction breaks the strong carbon–fluorine bonds, yielding harmless carbon and sodium fluoride (a stable salt used in toothpaste).
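
As a back-of-the-envelope illustration (our own idealized calculation, not figures from the study), assume every -CF₂- repeat unit of PTFE is fully defluorinated. Each fluorine atom then pairs with one sodium atom, giving the balance CF₂ + 2 Na → C + 2 NaF. The short sketch below uses standard atomic masses to estimate the sodium consumed and the carbon and sodium fluoride formed per kilogram of PTFE; the function name `per_kg_ptfe` is hypothetical.

```python
# Idealized stoichiometry for sodium-mediated defluorination of PTFE.
# Assumption: complete conversion of each -CF2- repeat unit via
#   CF2 + 2 Na -> C + 2 NaF
# Standard atomic masses in g/mol (rounded).
M_C = 12.011
M_F = 18.998
M_NA = 22.990

M_CF2 = M_C + 2 * M_F    # molar mass of one PTFE repeat unit
M_NAF = M_NA + M_F       # molar mass of sodium fluoride


def per_kg_ptfe(mass_kg: float = 1.0) -> dict[str, float]:
    """Return kg of Na consumed and kg of C and NaF formed for mass_kg of PTFE."""
    moles_cf2 = mass_kg * 1000 / M_CF2   # mol of repeat units
    return {
        "Na_consumed_kg": 2 * moles_cf2 * M_NA / 1000,
        "C_formed_kg": moles_cf2 * M_C / 1000,
        "NaF_formed_kg": 2 * moles_cf2 * M_NAF / 1000,
    }


if __name__ == "__main__":
    for name, value in per_kg_ptfe(1.0).items():
        print(f"{name}: {value:.3f}")
```

Run as a script, it reports about 0.919 kg of sodium consumed and about 0.240 kg of carbon and 1.679 kg of sodium fluoride formed per kilogram of PTFE, with mass conserved on both sides. These are theoretical values for complete conversion.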

The recovered sodium fluoride can be used directly, without purification, to make valuable fluorine-containing molecules for pharmaceuticals and other fine chemicals.

Circular economy for fluorine

The Birmingham team then examined the reaction mixture at the atomic level using solid-state nuclear magnetic resonance (NMR) spectroscopy, a technique in which they specialize.

“This allowed us to prove that the process produces clean sodium fluoride without any by-products. It’s a perfect example of how state-of-the-art materials characterization can accelerate progress toward sustainability,” said Dr Dominik Kubicki, Associate Professor at Birmingham.

The finding suggests that Teflon recycling could support a circular economy for fluorine.

“Our approach is simple, fast, and uses inexpensive materials,” said Dr Erli Lu, Associate Professor from the University of Birmingham.

“We hope it will inspire further work on reusing other kinds of fluorinated waste and help make the production of vital fluorine-containing compounds more sustainable,” Lu concluded.

This approach could reduce pollution by minimizing the environmental footprint of fluorine chemicals used in medicine, electronics, and green energy.

The findings were published in the Journal of the American Chemical Society (JACS).

🔗 Source: interestingengineering.com

📌 MAROKO133 Update ai: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models,” by researchers from Stanford University, Princeton University, and Adobe Research, proposes a solution to this challenge: an architecture that uses State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem is that the computational cost of attention grows quadratically with sequence length. As the video context grows, the resources required by attention layers balloon, making long-term memory impractical for real-world applications. In practice, after a certain number of frames the model effectively “forgets” earlier events, which hurts its performance on tasks that demand long-range coherence or reasoning over extended periods.
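
To make the scaling concrete, the toy cost model below counts the query-key pairs scored by full causal attention against the state updates performed by a recurrent SSM scan over the same tokens. The per-frame token count is a hypothetical value chosen for illustration, not a figure from the paper, and constant factors such as head dimension and state size are ignored.

```python
# Toy cost model: causal self-attention vs. a recurrent SSM scan.
# Assumption: cost is counted in abstract "operations"; constant factors
# (head dimension, state size, hardware effects) are ignored.


def causal_attention_pairs(num_tokens: int) -> int:
    """Query-key pairs scored by causal attention: token t attends to t + 1 tokens."""
    return num_tokens * (num_tokens + 1) // 2


def ssm_state_updates(num_tokens: int) -> int:
    """An SSM scan updates its fixed-size state once per token."""
    return num_tokens


TOKENS_PER_FRAME = 256  # hypothetical number of latent tokens per frame

if __name__ == "__main__":
    for frames in (10, 100, 1000):
        n = frames * TOKENS_PER_FRAME
        ratio = causal_attention_pairs(n) / ssm_state_updates(n)
        print(f"{frames:5d} frames: attention/SSM cost ratio ~ {ratio:,.0f}")
```

The ratio itself equals (n + 1) / 2, so every tenfold increase in context length makes attention roughly ten times more expensive relative to the scan.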

The authors’ key insight is to exploit the inherent strength of SSMs in causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work takes full advantage of their efficiency in processing sequences.
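
The property being exploited is that an SSM reads a sequence causally while carrying a fixed-size state. A minimal sketch, assuming a simple linear time-invariant SSM with a diagonal state matrix (far simpler than the Mamba2-style layers the paper compares against), is the recurrence h_t = A·h_{t-1} + B·x_t, y_t = C·h_t. The function `ssm_scan` is hypothetical.

```python
from typing import Sequence


def ssm_scan(
    xs: Sequence[float],
    a: Sequence[float],
    b: Sequence[float],
    c: Sequence[float],
) -> list[float]:
    """Causal scan of a diagonal linear SSM with scalar input and output.

    h_t[i] = a[i] * h_{t-1}[i] + b[i] * x_t
    y_t    = sum_i c[i] * h_t[i]
    """
    h = [0.0] * len(a)  # fixed-size state: its size does not depend on len(xs)
    ys = []
    for x in xs:        # single left-to-right pass, so y_t sees only x_0..x_t
        h = [ai * hi + bi * x for ai, hi, bi in zip(a, h, b)]
        ys.append(sum(ci * hi for ci, hi in zip(c, h)))
    return ys


if __name__ == "__main__":
    # An impulse at t = 0 decays geometrically: the state "remembers" it.
    print(ssm_scan([1.0, 0.0, 0.0, 0.0], a=[0.5], b=[1.0], c=[1.0]))
    # prints [1.0, 0.5, 0.25, 0.125]
```

How long a past input stays visible depends on how slowly the state decays (here, on `a`); the per-step cost and memory stay constant regardless of sequence length.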

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
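
One way to picture the block-wise trade-off, under our own simplifying assumptions rather than the paper's exact layout: suppose each frame is an H×W grid of latent tokens. A single scan that visits every token of frame t before moving on to frame t+1 puts H·W steps between the same location in consecutive frames, so the state must carry information across many steps. If the grid is instead split into spatial blocks of size bh×bw and each block is scanned through time separately, that gap shrinks to bh·bw steps, which favors temporal memory; the cost is that tokens in different blocks no longer share a scan, which is the spatial coherence local attention is meant to restore. All helper names below are hypothetical.

```python
# Hypothetical illustration of block-wise scan ordering for video tokens.
# A token is indexed (t, y, x) for T frames of an H x W latent grid.

Token = tuple[int, int, int]


def frame_major_order(T: int, H: int, W: int) -> list[Token]:
    """One global scan: every token of frame 0, then frame 1, and so on."""
    return [(t, y, x) for t in range(T) for y in range(H) for x in range(W)]


def blockwise_orders(T: int, H: int, W: int, bh: int, bw: int) -> list[list[Token]]:
    """One separate scan per spatial block; each scan runs through all frames."""
    assert H % bh == 0 and W % bw == 0, "grid must divide evenly into blocks"
    scans = []
    for by in range(0, H, bh):
        for bx in range(0, W, bw):
            scans.append([
                (t, y, x)
                for t in range(T)
                for y in range(by, by + bh)
                for x in range(bx, bx + bw)
            ])
    return scans


def temporal_gap(scan: list[Token], y: int, x: int) -> int:
    """Scan steps between location (y, x) in frame 0 and the same location in frame 1."""
    return scan.index((1, y, x)) - scan.index((0, y, x))


if __name__ == "__main__":
    T, H, W = 4, 8, 8
    print("frame-major gap:", temporal_gap(frame_major_order(T, H, W), 0, 0))  # 64
    first_block = blockwise_orders(T, H, W, bh=2, bw=2)[0]
    print("block-wise gap:", temporal_gap(first_block, 0, 0))  # 4
```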

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: This technique trains the model to generate frames conditioned on a prefix of the input, forcing it to learn to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, training reduces to diffusion forcing, which the authors highlight as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. It uses FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk attend to each other bidirectionally while also attending to frames in the previous chunk. This preserves a useful receptive field while reducing computational load.
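
The prefix-conditioned training described in the Diffusion Forcing item can be sketched as a rule for assigning a noise level to each frame of a training clip. This is a minimal sketch under stated assumptions: noise levels are integers, level 0 marks a clean context frame, and, as in diffusion forcing, every non-prefix frame draws its own independent noise level. The function name and the probability of skipping the prefix (`p_no_prefix`) are hypothetical, not taken from the paper.

```python
import random
from typing import Optional


def sample_noise_levels(
    num_frames: int,
    num_steps: int,
    p_no_prefix: float = 0.5,
    rng: Optional[random.Random] = None,
) -> list[int]:
    """Per-frame noise levels for one training clip (hypothetical helper).

    Level 0 marks a clean context frame; levels 1..num_steps are noised.
    A clean prefix of random length supplies context, and each remaining
    frame draws its own noise level independently. With probability
    p_no_prefix the prefix length is 0, which reduces to plain diffusion
    forcing: every frame noised, each at an independent level.
    """
    if num_frames < 2:
        raise ValueError("need at least two frames")
    if rng is None:
        rng = random.Random()
    if rng.random() < p_no_prefix:
        prefix_len = 0
    else:
        # Leave at least one frame to be denoised.
        prefix_len = rng.randint(1, num_frames - 1)
    clean = [0] * prefix_len
    noised = [rng.randint(1, num_steps) for _ in range(num_frames - prefix_len)]
    return clean + noised


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(3):
        print(sample_noise_levels(num_frames=8, num_steps=1000, rng=rng))
```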

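The frame local attention pattern can be written as a boolean mask. The sketch below assumes the example numbers from the text (chunks of 5 frames, so a window of up to 10 frames spanning the current and previous chunk) and works at frame granularity via a hypothetical `TOKENS_PER_FRAME = 1`. The `(b, h, q_idx, kv_idx)` signature mirrors the `mask_mod` convention of PyTorch's FlexAttention, but the function is a standalone illustration, not the authors' code.

```python
CHUNK = 5             # frames per chunk (example value from the text)
TOKENS_PER_FRAME = 1  # hypothetical; 1 means each index is a whole frame


def frame_local_mask_mod(b, h, q_idx, kv_idx):
    """Return True where query position q_idx may attend to key position kv_idx.

    A frame attends bidirectionally to every frame in its own chunk and to
    every frame in the previous chunk: a window of up to 2 * CHUNK frames.
    b and h (batch and head indices) are unused; they are kept only to match
    the FlexAttention mask_mod calling convention.
    """
    q_chunk = (q_idx // TOKENS_PER_FRAME) // CHUNK
    kv_chunk = (kv_idx // TOKENS_PER_FRAME) // CHUNK
    # Bitwise | instead of `or`, so the expression also works element-wise on tensors.
    return (q_chunk == kv_chunk) | (q_chunk - 1 == kv_chunk)


if __name__ == "__main__":
    n = 15
    for q in range(n):
        row = "".join("x" if frame_local_mask_mod(0, 0, q, k) else "." for k in range(n))
        print(f"frame {q:2d}: {row}")
```

Unlike a fully causal mask, frames can see later frames inside their own chunk, while nothing outside the current and previous chunk is ever attended to, which keeps the cost per query bounded.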
The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post “Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models” first appeared on Synced.

🔗 Source: syncedreview.com

🤖 MAROKO133 Note

This article is an automated summary of several trusted sources. We pick trending topics so you can stay up to date without missing a thing.
