📌 MAROKO133 Exclusive AI: Adobe Research Unlocking Long-Term Memory in Video World

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem is that the computational cost of attention grows quadratically with sequence length. As the video context grows, the resources required by attention layers quickly become prohibitive, making long-term memory impractical for real-world applications. After a certain number of frames, the model effectively “forgets” earlier events, which hurts its performance on tasks that demand long-range coherence or reasoning over extended periods.
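To make the scaling concrete, here is a rough back-of-the-envelope sketch in Python. The tokens-per-frame value (256) and the frame counts are illustrative assumptions, not figures from the paper. The point is only that the number of token pairs scored by attention grows with the square of the context length, while a recurrent scan touches each token once.

```python
# Illustrative cost comparison. TOKENS_PER_FRAME and the frame counts are
# assumed values chosen for the example, not numbers taken from the paper.

TOKENS_PER_FRAME = 256  # hypothetical latent tokens per video frame


def attention_pairs(num_frames: int, tokens_per_frame: int) -> int:
    """Token pairs scored by full self-attention over the whole context."""
    n = num_frames * tokens_per_frame
    return n * n


def scan_steps(num_frames: int, tokens_per_frame: int) -> int:
    """State updates made by a single linear-time recurrent scan."""
    return num_frames * tokens_per_frame


if __name__ == "__main__":
    for frames in (16, 64, 256):
        pairs = attention_pairs(frames, TOKENS_PER_FRAME)
        steps = scan_steps(frames, TOKENS_PER_FRAME)
        print(f"{frames:4d} frames: {pairs:>16,d} attention pairs, "
              f"{steps:>8,d} scan steps")
```

Doubling the number of frames quadruples the attention pair count but only doubles the scan steps, which is the gap the SSM-based design aims to exploit.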

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

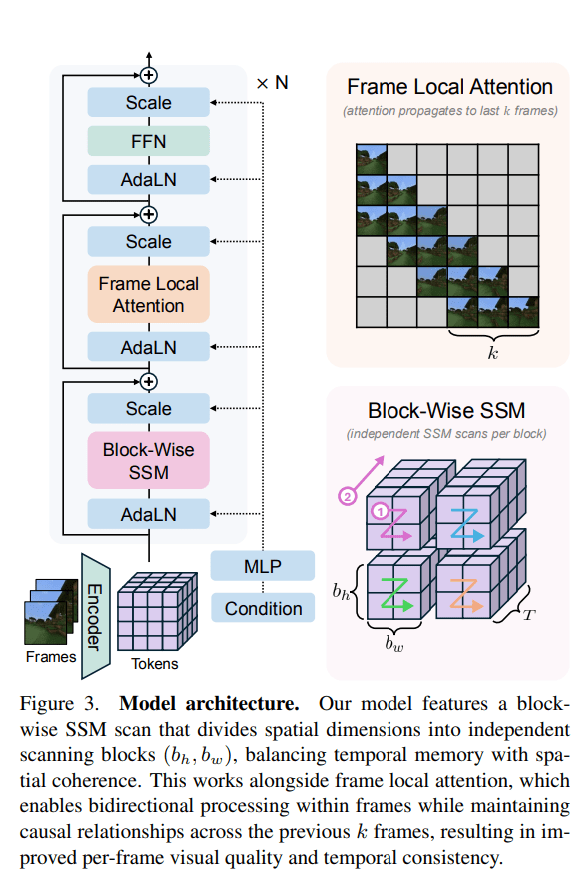

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
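The idea of a compressed state that carries information across blocks can be illustrated with a toy linear recurrence. This is a minimal sketch under stated assumptions: the scalar-decay update `h_t = decay * h_{t-1} + x_t`, and the names `ssm_scan` and `blockwise_scan`, are hypothetical stand-ins, not the authors' implementation and not Mamba2. It does not model the spatial-consistency trade-off or the paper's actual block layout; it only shows how a fixed-size state lets information persist past a block boundary.

```python
import numpy as np


def ssm_scan(x, decay, state=None):
    """Toy recurrence h_t = decay * h_{t-1} + x_t along axis 0.

    Returns (outputs, final_state). `state` is the carried-in hidden state.
    """
    x = np.asarray(x, dtype=float)
    h = np.zeros(x.shape[1:]) if state is None else state
    out = np.empty_like(x)
    for t in range(x.shape[0]):
        h = decay * h + x[t]
        out[t] = h
    return out, h


def blockwise_scan(x, decay, block_len):
    """Scan the sequence block by block, handing the state to the next block."""
    x = np.asarray(x, dtype=float)
    state = None
    outputs = []
    for start in range(0, x.shape[0], block_len):
        out, state = ssm_scan(x[start:start + block_len], decay, state)
        outputs.append(out)
    return np.concatenate(outputs, axis=0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    frames = rng.standard_normal((12, 3))  # 12 time steps, 3 channels
    full, _ = ssm_scan(frames, decay=0.9)
    blocked = blockwise_scan(frames, decay=0.9, block_len=5)
    print(np.allclose(full, blocked))
```

Because only the fixed-size state crosses each boundary, the memory needed per block stays constant however long the sequence gets. In the full model, dense local attention is what restores the fine-grained relationships between neighbouring frames.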

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model learns to generate frames conditioned on a prefix of the input, which pushes it to stay consistent over longer durations. When no prefix is sampled and all tokens remain noised, training reduces to diffusion forcing, which the authors describe as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
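The two training strategies above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions rather than the authors' code: `frame_local_mask` and `sample_noise_levels` are hypothetical helper names, the mask is built at frame level instead of through FlexAttention, the window of 10 is read as "own chunk of 5 plus the previous chunk of 5", and the uniform per-frame noise level is a simplification.

```python
import numpy as np


def frame_local_mask(num_frames, chunk_size=5):
    """Boolean [query, key] mask over frames; True means attention is allowed.

    A frame attends to every frame in its own chunk (bidirectional) and to
    every frame in the chunk immediately before it.
    """
    chunk = np.arange(num_frames) // chunk_size
    q = chunk[:, None]
    k = chunk[None, :]
    return (k == q) | (k == q - 1)


def sample_noise_levels(num_frames, prefix_len, rng):
    """Per-frame noise levels: prefix frames stay clean, the rest are noised.

    With prefix_len == 0 every frame is noised, the case the article
    describes as equivalent to diffusion forcing.
    """
    levels = rng.uniform(0.0, 1.0, size=num_frames)
    levels[:prefix_len] = 0.0
    return levels


if __name__ == "__main__":
    mask = frame_local_mask(15, chunk_size=5)
    print(mask.astype(int))
    rng = np.random.default_rng(0)
    print(sample_noise_levels(8, prefix_len=3, rng=rng))
```

With a chunk size of 5, each frame sees at most 10 frames, so attention cost per frame stays bounded as the video gets longer, while the SSM layers are left to carry longer-range information.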

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Update AI: World’s first foldable steering wheel retracts when the car

Autoliv and Tensor have jointly developed what they call the world’s first foldable steering wheel designed for a production-ready autonomous vehicle.

The system will debut in the Tensor Robocar, a Level 4-capable personal autonomous vehicle expected to be ready for volume production in the second half of 2026.

The foldable steering wheel is designed to operate in two modes.

It functions like a conventional steering wheel during manual driving, but retracts completely during autonomous operation.

The companies say the design addresses a growing challenge in autonomous vehicle interiors, where traditional steering systems become unnecessary and restrict cabin space.

As vehicles move toward higher levels of automation, interior layouts are being rethought. Steering wheels, pedals, and dashboards designed for constant human control can limit comfort and flexibility when the vehicle is driving itself.

Autoliv and Tensor say their co-developed system is intended to remove those constraints without compromising safety.

The steering wheel is integrated directly with the Tensor Robocar autonomous driving system.

When the vehicle switches to Level 4 mode, where it can handle all driving tasks within defined conditions, the steering wheel retracts fully, clearing the driver’s area and allowing the cabin to be used more freely.

Steering wheel disappears entirely

The retractable design allows the front cabin to transform into a more open space during autonomous driving.

Tensor says this opens the door for new seating positions, improved legroom, and a more lounge-like experience when manual control is not required.

Safety systems adapt automatically based on the driving mode. When the steering wheel is retracted, the vehicle activates a passenger airbag integrated into the instrument panel.

When manual driving is selected, the airbag housed within the steering wheel is used instead. Autoliv says both configurations deliver the same level of protection.

“Automotive safety can no longer follow a one-size-fits-all philosophy. We asked ourselves how to make safety intelligent and adaptive, creating a system that seamlessly aligns with the driver’s needs,” said Fabien Dumont, Executive Vice President & Chief Technology Officer of Autoliv.

“Our collaboration with Tensor delivers precisely that: a steering solution that enhances both safety and comfort by adapting to the vehicle’s mode.”

Autoliv says the system reflects a broader shift in how safety components are designed for autonomous vehicles.

Rather than focusing only on crash performance, safety systems now need to adjust dynamically to changing vehicle states and user behavior.

Built for real production

Tensor says the foldable steering wheel moves beyond concept-car experimentation. The company plans to deploy the system in a production vehicle aimed at private ownership, rather than limited pilot programs or shared fleets.

“Fully self-driving technology provides a groundbreaking user experience, but manual driving in certain scenarios is still desired by many people. Our dual-mode approach with a foldable steering wheel combines the best of both worlds and gives customers the freedom to choose,” said Jay Xiao, CEO of Tensor.

“Foldable steering wheels previously existed only in concept cars—now we are bringing this innovation to volume-production vehicles for everyday use.”

The Tensor Robocar will support L0 through L4 autonomy, allowing drivers to switch between full manual control and autonomous operation as needed. The vehicle will be offered in the US, EU, and Middle East markets.

Autoliv says the collaboration underscores how safety suppliers are expanding their role in shaping vehicle interiors for an autonomous future, where hardware must adapt as seamlessly as the software controlling the car.

🔗 Source: interestingengineering.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you always stay up to date.
