📌 MAROKO133 Hot AI: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
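To make the scaling argument concrete, here is a back-of-the-envelope sketch that is not taken from the paper. It counts the (query, key) pairs a full causal attention layer must score and compares that with the number of per-token state updates a state-space model performs. `TOKENS_PER_FRAME` is a hypothetical value chosen only for illustration.

```python
# Illustrative cost comparison (not from the paper). Full causal attention
# scores every query token against itself and every earlier token, while a
# state-space model performs one fixed-size state update per token.

TOKENS_PER_FRAME = 256  # hypothetical number of spatial tokens per frame


def causal_attention_pairs(num_frames: int) -> int:
    """(query, key) pairs under a full causal mask over T tokens: T * (T + 1) / 2."""
    t = num_frames * TOKENS_PER_FRAME
    return t * (t + 1) // 2


def ssm_state_updates(num_frames: int) -> int:
    """One recurrent state update per token, so the cost is linear in T."""
    return num_frames * TOKENS_PER_FRAME


if __name__ == "__main__":
    for frames in (16, 64, 256, 1024):
        attn = causal_attention_pairs(frames)
        ssm = ssm_state_updates(frames)
        print(f"{frames:5d} frames: attention pairs={attn:>16,d}  SSM updates={ssm:>8,d}")
```

Going from 16 to 64 frames multiplies the SSM count by 4 but the attention pair count by almost 16. That gap is the quadratic blow-up described above.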

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

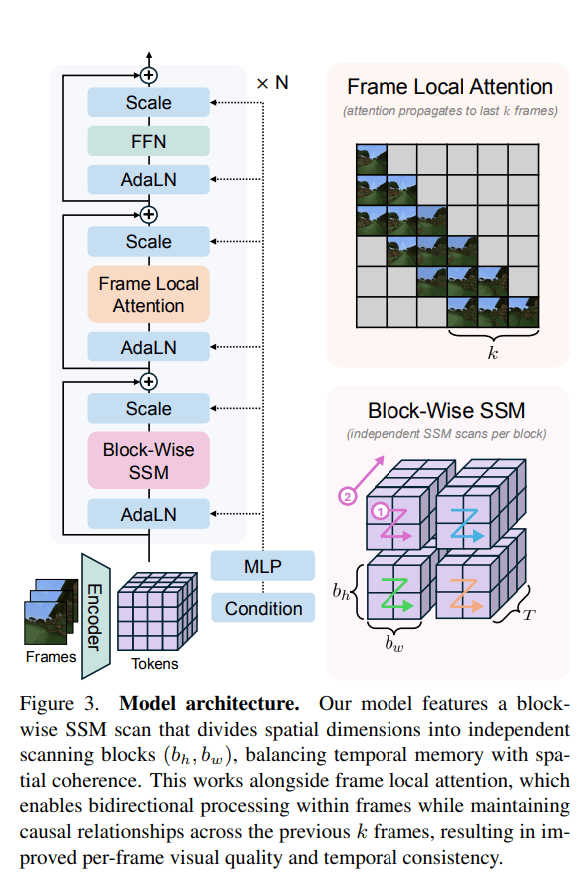

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
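The trade-off behind block-wise scanning can be illustrated with a toy model. This is a minimal sketch under an assumed reading of the scheme, not the authors' code. Each frame is treated as an H x W token grid split into BH x BW spatial blocks, and each block is scanned through all frames with its own recurrent state. The scalar recurrence `h = a * h + b * x` stands in for a real SSM layer such as Mamba2, and all function names and sizes are hypothetical.

```python
# Minimal sketch of a block-wise scan order with a toy linear SSM recurrence.
# ASSUMPTION (illustrative, not the paper's code): each frame is an H x W token
# grid split into non-overlapping BH x BW spatial blocks, and each block is
# scanned through all frames independently with its own recurrent state.
from typing import Dict, List, Tuple

Token = Tuple[int, int, int]  # (frame, row, col)


def full_scan_order(T: int, H: int, W: int) -> List[Token]:
    """A single raster scan over the whole video: frame by frame, row by row."""
    return [(t, r, c) for t in range(T) for r in range(H) for c in range(W)]


def blockwise_scan_orders(T: int, H: int, W: int, BH: int, BW: int) -> Dict[Tuple[int, int], List[Token]]:
    """One scan per spatial block; each scan visits that block in every frame."""
    orders: Dict[Tuple[int, int], List[Token]] = {}
    for br in range(0, H, BH):
        for bc in range(0, W, BW):
            orders[(br // BH, bc // BW)] = [
                (t, r, c)
                for t in range(T)
                for r in range(br, min(br + BH, H))
                for c in range(bc, min(bc + BW, W))
            ]
    return orders


def temporal_gap(order: List[Token], row: int, col: int) -> int:
    """Scan-order distance between location (row, col) in frame 0 and in frame 1."""
    return order.index((1, row, col)) - order.index((0, row, col))


def linear_ssm_scan(xs: List[float], a: float = 0.9, b: float = 1.0) -> List[float]:
    """Toy scalar SSM: h_k = a * h_{k-1} + b * x_k. The state h carries memory."""
    h, out = 0.0, []
    for x in xs:
        h = a * h + b * x
        out.append(h)
    return out


def impulse_memory(order: List[Token], a: float = 0.9) -> float:
    """Inject 1.0 at frame 0, location (0, 0); read the state at frame 1, location (0, 0)."""
    xs = [1.0 if tok == (0, 0, 0) else 0.0 for tok in order]
    return linear_ssm_scan(xs, a=a)[order.index((1, 0, 0))]


if __name__ == "__main__":
    T, H, W, BH, BW = 4, 16, 16, 4, 4
    full = full_scan_order(T, H, W)
    block = blockwise_scan_orders(T, H, W, BH, BW)[(0, 0)]
    print("gap, single scan:", temporal_gap(full, 0, 0))       # H * W tokens
    print("gap, block-wise scan:", temporal_gap(block, 0, 0))  # BH * BW tokens
    print("memory, single scan:", impulse_memory(full))
    print("memory, block-wise scan:", impulse_memory(block))
```

In this toy setting, the block-wise scan still holds about 0.19 of the frame-0 impulse one frame later. The single raster scan holds only about 2e-12, because the same location is 256 steps away instead of 16. The cost is that tokens in different spatial blocks never share a state in this layer. That is the loss of spatial consistency that the dense local attention is meant to offset.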

The paper also introduces two training techniques, one aimed at long-context performance and one at training and sampling speed:

- Diffusion Forcing: The model is trained to generate frames conditioned on a clean prefix of the input, which forces it to learn to stay consistent over longer durations. When no prefix is sampled and all tokens are kept noised, the training reduces to diffusion forcing, which the authors highlight as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism, which uses FlexAttention to achieve significant speedups over a fully causal mask. Frames are grouped into chunks (e.g., chunks of 5 with a frame window size of 10). Frames within a chunk attend to each other bidirectionally and also attend to frames in the previous chunk. This preserves an effective receptive field while reducing the computational load.
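The prefix-conditioned training described under Diffusion Forcing can be sketched as a per-frame noise schedule. A sampled prefix of frames stays clean (noise level 0) as context, and every remaining frame gets its own independently sampled noise level, in the style of diffusion forcing. With a prefix length of zero, every frame is noised, which is the special case the authors highlight. The probability `p_no_prefix`, the number of diffusion steps, and the uniform sampling are hypothetical choices for illustration, not the paper's settings.

```python
import random
from typing import List, Optional


def sample_noise_levels(
    num_frames: int,
    num_steps: int = 1000,
    p_no_prefix: float = 0.1,
    rng: Optional[random.Random] = None,
) -> List[int]:
    """Return one diffusion timestep per frame (0 = clean context, num_steps = pure noise).

    ASSUMPTIONS (hypothetical, not the paper's settings): with probability
    p_no_prefix the prefix length is 0, i.e. plain diffusion forcing; otherwise
    a uniformly sampled prefix of 1..num_frames-1 frames is kept clean.
    """
    if rng is None:
        rng = random.Random()
    max_prefix = num_frames - 1
    if max_prefix < 1 or rng.random() < p_no_prefix:
        prefix_len = 0  # no context: every frame is noised
    else:
        prefix_len = rng.randint(1, max_prefix)
    clean = [0] * prefix_len
    # Diffusion-forcing style: each noised frame draws its own independent level.
    noised = [rng.randint(1, num_steps) for _ in range(num_frames - prefix_len)]
    return clean + noised


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(3):
        print(sample_noise_levels(8, num_steps=10, rng=rng))
```

Because the prefix length varies from batch to batch, a single model learns both to continue from long clean contexts and to generate from nothing.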
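The chunked frame local mask can be written as a predicate over query and key frame indices. This minimal pure-Python sketch reads "chunks of 5 with a frame window size of 10" as meaning that each frame sees its own chunk bidirectionally plus the whole previous chunk. PyTorch's FlexAttention accepts a `mask_mod(b, h, q_idx, kv_idx)` callable (for example via `torch.nn.attention.flex_attention.create_block_mask`), so `token_mask_mod` mirrors that argument order. However, `TOKENS_PER_FRAME` and all helper names are hypothetical, and this is not the authors' implementation.

```python
# Minimal sketch of a chunked "frame local" attention mask (pure Python).
# ASSUMPTIONS: CHUNK = 5 frames and a 10-frame window, read as
# "own chunk (bidirectional) + the full previous chunk". TOKENS_PER_FRAME is
# a hypothetical value used to map token indices to frame indices.

CHUNK = 5
TOKENS_PER_FRAME = 4


def frame_local_allowed(q_frame: int, kv_frame: int, chunk: int = CHUNK) -> bool:
    """True if a query in q_frame may attend to a key in kv_frame."""
    q_chunk, kv_chunk = q_frame // chunk, kv_frame // chunk
    # Same chunk: bidirectional. Previous chunk: fully visible. Other chunks: masked.
    return kv_chunk == q_chunk or kv_chunk == q_chunk - 1


def token_mask_mod(b: int, h: int, q_idx: int, kv_idx: int) -> bool:
    """Same rule over token indices, in FlexAttention's mask_mod argument order."""
    return frame_local_allowed(q_idx // TOKENS_PER_FRAME, kv_idx // TOKENS_PER_FRAME)


def dense_frame_mask(num_frames: int) -> list:
    """Materialize the frame-level mask as a num_frames x num_frames grid of bools."""
    return [[frame_local_allowed(q, k) for k in range(num_frames)] for q in range(num_frames)]


if __name__ == "__main__":
    # '#' = may attend, '.' = masked; rows are query frames, columns are key frames.
    for row in dense_frame_mask(15):
        print("".join("#" if allowed else "." for allowed in row))
```

Under this reading, each query frame sees at most 10 frames, so the per-frame attention cost stays bounded however long the video gets, and long-range memory is left to the SSM layers. To use the rule inside FlexAttention itself, the predicate would need to be rewritten with tensor operations (for example `|` instead of Python's `or`), because the indices arrive as tensors there.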

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper, “Long-Context State-Space Video World Models,” is available on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Exclusive AI: New Law Would Let Grok Victims Sue Creeps Who Generated Nonconsensual Nudes

On Tuesday, the US Senate passed a bill that would allow victims to sue individuals who use AI models like Grok to generate non-consensual nudes and other sexually explicit images.

Dubbed the Disrupt Explicit Forged Images and Non-Consensual Edits (DEFIANCE) Act, the bill expands on the Take It Down Act, a law passed last year that made it illegal to distribute nonconsensual intimate images and required social media companies to remove them within 48 hours. As Bloomberg noted, the new bill goes further by empowering victims to go after the people responsible for generating the images, including by seeking damages and restraining orders.

The bill was introduced by Senator Dick Durbin (D-Ill.) and passed unanimously.

“Give to the victims their day in court to hold those responsible who continue to publish these images at their expense,” Durbin said in a speech on the Senate floor, via The Hill. “Today, we are one step closer to making this a reality.”

The bill comes as Elon Musk’s X is facing vociferous public backlash after his AI chatbot Grok was used to generate thousands of nudes and sexually explicit images of both adults and children whose photos had been posted to the platform. The volume of these images was so overwhelming that the AI content analysis firm Copyleaks estimated the bot was generating a nonconsensually sexualized image every single minute.

The lack of response from xAI, the Musk-owned AI startup that develops Grok, has only further fueled the outrage from the public and regulators alike, to say nothing of Musk’s blasé attitude to it all. He addressed the pornographic generations only indirectly, never mentioning them explicitly, by asserting in a post that “anyone using Grok to make illegal content will suffer the same consequences as if they upload illegal content.” He also joked that the nonconsensual undressing “trend” was “way funnier” than the trends started by other AI chatbots.

If Musk has failed to comprehend the gravity of the situation, governments have not. Some countries, including Malaysia and Indonesia, have moved to ban access to his website entirely. UK Prime Minister Keir Starmer warned that he would bring the hammer down on X, while the country’s communications regulator, Ofcom, launched an official investigation into the company.

“Imagine losing control of your own likeness or identity,” said Durbin, per The Hill. “Imagine that happening to you when you were in high school. Imagine how powerless victims feel when they cannot remove illicit content, cannot prevent it from being reproduced repeatedly and cannot prevent new images from being created.”

“The consequences can be profound,” he added.

The DEFIANCE Act now needs to pass a vote in the House before it can officially become law. The Senate had already passed it when it was first proposed in 2024, but it did not clear the lower chamber. Now, with the outrage over Grok, it may stand a better chance.


The post New Law Would Let Grok Victims Sue Creeps Who Generated Nonconsensual Nudes appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Note

This article is an automatic roundup of several trusted sources. We pick trending topics so you always stay up to date.

✅ Next update in 30 minutes: random topics await!