📌 MAROKO133 Exclusive AI: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
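To make the scaling argument concrete, here is a small arithmetic sketch. It assumes full self-attention over every token in the context and compares that with a scan that touches each token once; the value of `tokens_per_frame` is a hypothetical figure chosen for illustration, not a number from the paper.

```python
def attention_pair_count(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention over the whole context."""
    seq_len = num_frames * tokens_per_frame
    return seq_len * seq_len


def recurrent_step_count(num_frames: int, tokens_per_frame: int) -> int:
    """State updates needed by a linear-time recurrent scan over the same tokens."""
    return num_frames * tokens_per_frame


if __name__ == "__main__":
    tokens_per_frame = 256  # hypothetical value, not taken from the paper
    for num_frames in (16, 64, 256):
        pairs = attention_pair_count(num_frames, tokens_per_frame)
        steps = recurrent_step_count(num_frames, tokens_per_frame)
        print(f"{num_frames:>4} frames: {pairs:>16,} attention pairs vs {steps:>8,} scan steps")
```

Doubling the number of frames quadruples the attention pair count but only doubles the scan step count, which is the gap the article is pointing at.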

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

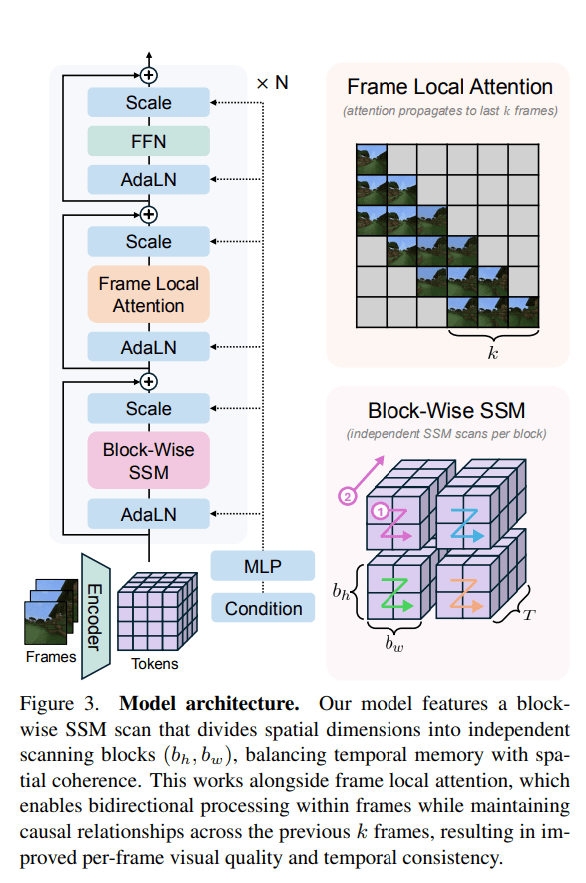

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
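The idea of carrying a compressed state across blocks can be illustrated with a toy recurrence. The sketch below is not the paper's layer: it assumes a scalar state and the simple update `h_t = decay * h_{t-1} + x_t`, and the names `scan_block`, `blockwise_scan`, and `decay` are hypothetical. It only shows that a sequence can be processed one block at a time while a small state keeps information from earlier blocks.

```python
from typing import List, Tuple


def scan_block(xs: List[float], state: float, decay: float = 0.9) -> Tuple[List[float], float]:
    """Run the toy recurrence h_t = decay * h_{t-1} + x_t over one block.

    Returns the per-step states and the final state to hand to the next block.
    """
    outputs = []
    for x in xs:
        state = decay * state + x
        outputs.append(state)
    return outputs, state


def blockwise_scan(xs: List[float], block_size: int, decay: float = 0.9) -> List[float]:
    """Process a long sequence block by block, carrying only one scalar state across."""
    state = 0.0
    outputs: List[float] = []
    for start in range(0, len(xs), block_size):
        block_out, state = scan_block(xs[start:start + block_size], state, decay)
        outputs.extend(block_out)
    return outputs


if __name__ == "__main__":
    signal = [1.0] + [0.0] * 11  # a single event at step 0, then nothing
    states = blockwise_scan(signal, block_size=4)
    # The event from the first block still influences the state in the last block.
    print(states[-1])
```

In this toy, the block-wise result matches a single scan over the whole sequence, because the only thing a block needs from the past is the carried state. Memory per block stays constant no matter how long the sequence grows.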

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During long-context training, the model learns to generate frames conditioned on a prefix of the input, which pushes it to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, training becomes equivalent to diffusion forcing, which the article highlights as the special case of long-context training where the prefix length is zero. This case pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
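Both strategies can be sketched at the frame level in plain Python. This is a reading of the description above, not the authors' code. It assumes that the window is a multiple of the chunk size (so a window of 10 with chunks of 5 means "own chunk plus the previous chunk") and that prefix frames are left un-noised. The function names and the uniform noise distribution are hypothetical. In practice a mask like this would be expanded to tokens and handed to an attention kernel such as FlexAttention, which is not called here.

```python
import random
from typing import List


def frame_local_mask(num_frames: int, chunk_size: int = 5, window: int = 10) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k.

    Assumption: `window` is a multiple of `chunk_size`, so each frame sees its
    own chunk (bidirectionally) plus the preceding chunks inside the window.
    """
    chunks_in_window = window // chunk_size
    mask = []
    for q in range(num_frames):
        row = []
        for k in range(num_frames):
            chunk_gap = q // chunk_size - k // chunk_size
            row.append(0 <= chunk_gap < chunks_in_window)
        mask.append(row)
    return mask


def sample_noise_levels(num_frames: int, prefix_len: int, rng: random.Random) -> List[float]:
    """Per-frame noise levels: 0.0 for prefix frames, independent levels in (0, 1] after.

    With prefix_len == 0 every frame is noised, which is the diffusion-forcing case.
    """
    return [0.0 if t < prefix_len else 1.0 - rng.random() for t in range(num_frames)]


if __name__ == "__main__":
    for row in frame_local_mask(num_frames=15):
        print("".join("x" if allowed else "." for allowed in row))
    print(sample_noise_levels(8, prefix_len=3, rng=random.Random(0)))
```

Printing the mask shows 5-by-5 blocks along the diagonal with one extra block to the left of each, which is the "bidirectional within a chunk, plus the previous chunk" pattern described above.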

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Update AI: It Turns Out That Google's AI Is Being Trained by an Army of Poorly Treated Human Grunts

Google is depending on thousands of contractors to train the AI behind its flagship chatbot Gemini.

As The Guardian reports, many of these “AI raters,” tasked with instructing the model and correcting its many mistakes, are facing poor working conditions and are often exposed to extremely disturbing content.

It’s yet another worrying reminder that, despite tech companies attempting to paint their AI models as miraculous, autonomous fountains of knowledge and cognition that could eventually replace human workers, the current reality is the exact opposite: AI relies on the labor of huge numbers of hidden humans to give it the illusion of intelligence.

And it’s not just the current crop of large language models: AI raters across the globe are being tasked with labeling data for AI-based self-driving car software and other related applications.

“AI isn’t magic; it’s a pyramid scheme of human labor,” Distributed AI Research Institute’s Adio Dinika told the Guardian. “These raters are the middle rung: invisible, essential and expendable.”

In the realm of large language models like Google’s Gemini, raters are being tasked with moderating the output of AI, not only ensuring the accuracy of its responses, but also that it doesn’t expose users to inappropriate content.

Rachel Sawyer, one such worker who was contracted as a “generalist rater” for Google last year, told the Guardian that she was “shocked” after being told to work with “distressing content.”

“Not only because I was given no warning and never asked to sign any consent forms during onboarding,” she added, “but because neither the job title or description ever mentioned content moderation.”

According to the newspaper, raters with no special expertise are being asked to verify the accuracy of responses across highly complex domains such as architecture, astrophysics, and even medical guidance.

One rater told the newspaper that she was pressured to work faster, while being tasked with training the AI on sensitive medical topics like chemotherapy treatments for bladder cancer.

“I pictured a person sitting in their car finding out that they have bladder cancer and googling what I’m editing,” she said.

In a statement to the Guardian, Google disputed the idea that raters are responsible for making AI models sound smart. A spokesperson argued that “quality raters” were “employed to provide external feedback on our products,” which “help us measure how well our systems are working, but do not directly impact our algorithms or models.”

Japanese contractor GlobalLogic contracted thousands of raters in the US to train Google’s AI. Their wages of $16 to $21 an hour, dwarfed by the astronomical salaries of AI researchers, still tend to be far higher than those of their counterparts in Africa and South America, per the Guardian’s sources. But it’s not an easy job, and it’s one that can leave lasting scars.

“They are people with expertise who are doing a lot of great writing work, who are being paid below what they’re worth to make an AI model that, in my opinion, the world doesn’t need,” one rater told the newspaper.

Many raters are also being pressured to work on tight deadlines and cope with rapidly changing guidelines.

“We had no idea where it was going, how it was being used or to what end,” a different GlobalLogic-contracted rater told the Guardian.

It’s a sobering glimpse behind the scenes, highlighting how much human work goes into tech that’s being sold as a revolutionary way to circumvent human labor. Yet despite the raters’ best efforts, frontier AI models including Google’s Gemini continue to frequently hallucinate, bungling answers to the simplest of prompts.


The post It Turns Out That Google's AI Is Being Trained by an Army of Poorly Treated Human Grunts appeared first on Futurism.

🔗 Source: futurism.com

🤖 MAROKO133 Note

This article is an automated summary drawn from several trusted sources. We pick trending topics so you always stay up to date.
