📌 MAROKO133 Breaking ai: Adobe Research Unlocking Long-Term Memory in Video World

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
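To make the scaling argument concrete, here is a minimal sketch that counts the query-key pairs full self-attention must score against the number of sequential updates a recurrent scan performs. The function names and the figure of 256 tokens per frame are assumptions chosen for illustration; they do not come from the paper.

```python
def attention_pair_count(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention over the whole context."""
    seq_len = num_frames * tokens_per_frame
    return seq_len * seq_len


def recurrent_step_count(num_frames: int, tokens_per_frame: int) -> int:
    """State updates performed by a recurrent (SSM-style) scan: one per token."""
    return num_frames * tokens_per_frame


if __name__ == "__main__":
    tokens_per_frame = 256  # assumed value, for illustration only
    for num_frames in (16, 32, 64, 128):
        pairs = attention_pair_count(num_frames, tokens_per_frame)
        steps = recurrent_step_count(num_frames, tokens_per_frame)
        print(f"{num_frames:4d} frames: {pairs:>14,d} attention pairs, {steps:>7,d} scan steps")
```

Doubling the number of frames quadruples the attention pair count but only doubles the scan steps, which is the gap the article describes.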

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

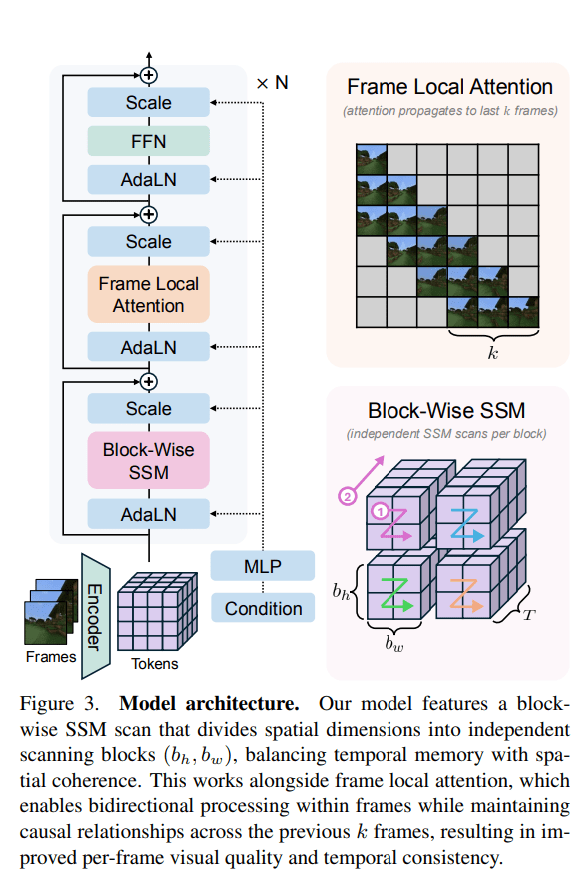

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
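The idea of a compressed state carried from one block to the next can be sketched with a generic scalar linear recurrence. This is only a toy under stated assumptions: the recurrence, the `decay` and `gain` values, and the function names are hypothetical, and the code does not reproduce the paper's SSM layer or its actual blocking layout.

```python
from typing import List, Tuple


def scan(xs: List[float], state: float,
         decay: float = 0.9, gain: float = 0.1) -> Tuple[List[float], float]:
    """Run h_t = decay * h_{t-1} + gain * x_t over xs, starting from `state`."""
    outputs = []
    for x in xs:
        state = decay * state + gain * x
        outputs.append(state)
    return outputs, state


def chunked_scan(xs: List[float], chunk_size: int,
                 decay: float = 0.9, gain: float = 0.1) -> List[float]:
    """Process xs one chunk at a time, carrying only a single state value across chunks."""
    state = 0.0
    outputs: List[float] = []
    for start in range(0, len(xs), chunk_size):
        chunk_out, state = scan(xs[start:start + chunk_size], state, decay, gain)
        outputs.extend(chunk_out)
    return outputs


if __name__ == "__main__":
    xs = [float(i % 7) for i in range(50)]
    full, _ = scan(xs, 0.0)
    blocked = chunked_scan(xs, chunk_size=8)
    print(max(abs(a - b) for a, b in zip(full, blocked)))
```

The chunked scan matches the single full scan because the carried state summarizes everything seen so far. The memory handed between chunks stays the same size no matter how long the sequence grows.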

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During long-context training, the model learns to generate frames conditioned on a prefix of the input sequence, which pushes it to stay consistent over longer durations. When no prefix is sampled and every token is noised, this training reduces to diffusion forcing; the authors describe diffusion forcing as the special case of long-context training in which the prefix length is zero. Including that case encourages the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
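The chunked masking pattern described for frame local attention can be sketched as a frame-level predicate. This follows the article's example (chunks of 5 frames, each attending to its own chunk and the previous one, for a window of 10 frames). The function names are hypothetical, and the code works on frame indices in plain Python rather than reproducing the authors' FlexAttention implementation.

```python
from typing import List


def can_attend(query_frame: int, key_frame: int, chunk_size: int = 5) -> bool:
    """True if the query frame may attend to the key frame.

    Frames attend bidirectionally within their own chunk and also to
    every frame in the immediately preceding chunk.
    """
    q_chunk = query_frame // chunk_size
    k_chunk = key_frame // chunk_size
    return k_chunk == q_chunk or k_chunk == q_chunk - 1


def build_mask(num_frames: int, chunk_size: int = 5) -> List[List[bool]]:
    """Frame-level attention mask: mask[q][k] is True where attention is allowed."""
    return [[can_attend(q, k, chunk_size) for k in range(num_frames)]
            for q in range(num_frames)]


if __name__ == "__main__":
    for row in build_mask(15):
        print("".join("#" if allowed else "." for allowed in row))
```

With a chunk size of 5, every frame past the first chunk sees exactly 10 frames, so the per-frame cost stays constant as the video grows. A predicate of this shape could in principle be adapted to FlexAttention's `mask_mod` callback, though token indices would first need to be mapped to frame indices.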

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that the approach substantially surpasses baselines in preserving long-range memory. Qualitative results in the paper's supplementary figures (e.g., S1, S2, S3) illustrate that LSSVWM generates more coherent and accurate sequences over extended periods than models relying solely on causal attention, or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, the model maintains better consistency and accuracy over long horizons. Similarly, on retrieval tasks, LSSVWM is better able to recall and use information from distant past frames. Crucially, these improvements come while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Hot ai: Solid-state sodium battery with 5,000-hour lifespan retains 91

Researchers at the University of Queensland’s Australian Institute for Bioengineering and Nanotechnology (AIBN) have developed a new solid electrolyte that could contribute to the development of grid-scale energy storage.

The material has been tested in sodium metal batteries (SMBs) and has shown promising performance.

In testing, a battery utilizing the new material operated for over 5,000 hours at 80°C (176°F) and retained over 91% of its capacity after 1,000 charge cycles. This level of performance is relevant for the potential use of SMBs in storing renewable energy.
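As a rough way to read these figures, the sketch below converts the reported retention into an average per-cycle value and checks the temperature conversion. It assumes capacity fades by the same fraction every cycle, a simplification that real cells generally do not follow, and the function names are hypothetical.

```python
def per_cycle_retention(total_retention: float, cycles: int) -> float:
    """Average fraction of capacity kept per cycle, assuming constant geometric fade."""
    return total_retention ** (1.0 / cycles)


def celsius_to_fahrenheit(celsius: float) -> float:
    """Convert a temperature from degrees Celsius to degrees Fahrenheit."""
    return celsius * 9 / 5 + 32


if __name__ == "__main__":
    retention = per_cycle_retention(0.91, 1000)
    print(f"average retention per cycle: {retention:.7f}")
    print(f"average fade per cycle: {(1 - retention) * 100:.4f}%")
    print(f"80 C in Fahrenheit: {celsius_to_fahrenheit(80):.0f}")
    print(f"5,000 hours in days: {5000 / 24:.0f}")
```

Under that assumption, 91% retention after 1,000 cycles corresponds to an average loss of roughly 0.0094% of capacity per cycle, and 5,000 hours is a little over 208 days of operation. Because the article reports both numbers as "over", these are bounds rather than exact values.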

“This kind of long-term performance is essential for grid-level energy storage,” said AIBN Group Leader Dr. Cheng Zhang.

Addressing a known safety issue

Sodium metal batteries are considered a potential alternative to lithium-ion technology due to the low cost and abundance of sodium. However, their use has been limited by safety concerns.

The primary issue is the battery’s electrolyte, the medium that facilitates the movement of ions. Most conventional batteries use a liquid electrolyte, which is flammable.

“These liquids are flammable and can overheat, causing fires like we’ve seen in electric vehicles and e-scooter batteries,” Dr. Zhang explained.

These fires are often initiated by the formation of dendrites—small, sharp metal spikes that can grow inside the battery, leading to short circuits.

“This kind of growth usually happens when the electrolyte becomes unstable after repeated charge cycles, which makes the battery both unsafe and unreliable,” Dr. Zhang added.

To address this safety issue, Dr. Zhang and PhD student Zhou Chen created a new solid electrolyte, a fluorinated block copolymer called P(Na3-EO7)-PFPE. This solid, plastic-like material is not flammable and is designed to inhibit dendrite growth.

Engineering the material’s internal structure

The researchers engineered the material’s internal structure into a body-centered cubic formation. This structure creates microscopic tunnels that allow sodium ions to move through the material efficiently.

“By adjusting the layout to form what’s known as a body-centered cubic structure, we were able to enhance the material’s naturally forming tunnels,” said Zhou.

“This allowed sodium ions to move just as smoothly and efficiently as they do in lithium batteries, while also reducing the risk of harmful build-up like dendrites.”

While the test results at high temperatures are a milestone, the team is now focused on the next phase of research. The immediate goal is to achieve similar performance at room temperature.

Steps for commercialization

Researchers across the world have intensified their efforts towards the commercialization of sodium-based solid-state batteries.

Recently, in another development, researchers proposed that sodium-based solid-state batteries can finally hold their own at room and even subzero temperatures. The work raises the benchmark for sodium batteries, a technology that has struggled in real-world conditions.

Their paper demonstrates thick sodium cathodes that perform at room temperature and down to freezing.

Earlier, a study by researchers at the Tokyo University of Science had shown that doping a sodium-ion battery cathode with scandium can improve its cycling stability.

In their tests, a coin-type full cell using the modified cathode retained 60% of its capacity after 300 charge-discharge cycles.

🔗 Source: interestingengineering.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you always stay up to date.
