📌 MAROKO133 Breaking ai: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes a solution to this challenge: an architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
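To make the scaling argument concrete, here is a minimal sketch that counts the query-key pairs full self-attention must score against the number of state updates a linear-time scan performs. The token count per frame is a hypothetical value chosen for illustration, not a figure from the paper.

```python
def attention_pair_count(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention over the whole context."""
    n = num_frames * tokens_per_frame
    return n * n


def scan_step_count(num_frames: int, tokens_per_frame: int) -> int:
    """Sequential state updates performed by a linear-time recurrent scan."""
    return num_frames * tokens_per_frame


if __name__ == "__main__":
    tokens_per_frame = 256  # hypothetical: a 16x16 latent grid per frame
    for frames in (16, 64, 256):
        pairs = attention_pair_count(frames, tokens_per_frame)
        steps = scan_step_count(frames, tokens_per_frame)
        print(f"{frames:4d} frames: attention pairs={pairs:.2e}, scan steps={steps:.2e}")
```

Doubling the number of context frames quadruples the attention pair count but only doubles the scan steps, which is the gap the SSM-based design aims to exploit.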

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

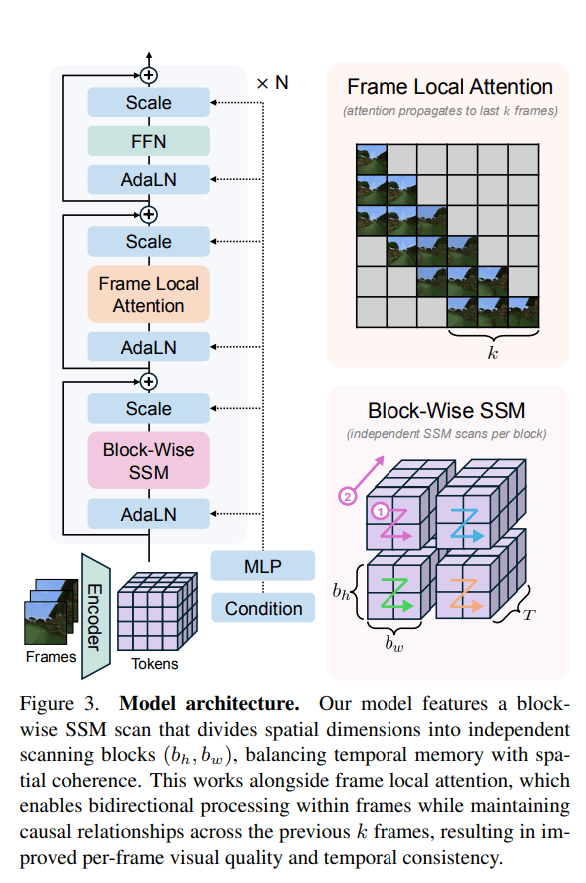

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
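The trade-off behind block-wise scanning can be illustrated with a toy recurrence. The sketch below is one reading of the idea, not the authors' implementation: it assumes blocks are square spatial tiles, uses a scalar state with a fixed `decay` (both hypothetical simplifications), and omits the local attention layers entirely. Each tile gets its own state, which is carried from frame to frame.

```python
from typing import List

Frame = List[List[float]]  # H x W grid of scalar tokens (toy stand-in for latents)


def blockwise_scan(frames: List[Frame], block: int, decay: float = 0.9) -> List[Frame]:
    """Run one independent linear recurrence per spatial tile, carried across frames.

    Toy scalar recurrence: state = decay * state + (1 - decay) * x, output = state.
    """
    height, width = len(frames[0]), len(frames[0][0])
    outputs = [[[0.0] * width for _ in range(height)] for _ in frames]
    for top in range(0, height, block):
        for left in range(0, width, block):
            state = 0.0  # one compressed state per spatial tile
            for t, frame in enumerate(frames):
                for r in range(top, min(top + block, height)):
                    for c in range(left, min(left + block, width)):
                        state = decay * state + (1.0 - decay) * frame[r][c]
                        outputs[t][r][c] = state
    return outputs


if __name__ == "__main__":
    # A single "event" in the top-left corner of frame 0, then empty frames.
    frames = [[[0.0] * 4 for _ in range(4)] for _ in range(3)]
    frames[0][0][0] = 1.0
    whole = blockwise_scan(frames, block=4)  # one scan over the full frame
    tiled = blockwise_scan(frames, block=2)  # four independent 2x2 tiles
    print("trace of event at frame 1, full-frame scan:", whole[1][0][0])
    print("trace of event at frame 1, 2x2 tiles:      ", tiled[1][0][0])
```

With smaller tiles, fewer tokens sit between the same position in consecutive frames, so the trace of the event decays less before the next frame arrives. The cost is that tiles no longer exchange information through the scan, which is the gap the dense local attention is described as filling.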

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model generates frames conditioned on a prefix of the input, which pushes it to maintain consistency over longer durations. When no prefix is sampled and all tokens stay noised, training reduces to diffusion forcing, which the authors highlight as the special case of long-context training with a prefix length of zero. This also teaches the model to generate coherent sequences from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
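The two training ideas above can be sketched in a few lines of plain Python. This is an illustrative reading of the description, not the paper's code: the function names are hypothetical, the mask is a plain boolean table rather than a FlexAttention mask, and treating the prefix as noise-free frames (level 0.0) followed by independently noised frames is an assumption.

```python
import random
from typing import List


def frame_local_mask(num_frames: int, chunk: int = 5) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k.

    Frames attend bidirectionally within their own chunk and to every frame
    of the immediately preceding chunk, so the window spans 2 * chunk frames.
    """
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk
        row = [(k // chunk) in (q_chunk, q_chunk - 1) for k in range(num_frames)]
        mask.append(row)
    return mask


def sample_noise_levels(num_frames: int, max_prefix: int, rng: random.Random) -> List[float]:
    """Per-frame noise levels: a clean prefix (0.0), then independently noised frames.

    A sampled prefix length of zero leaves every frame noised, which is the
    diffusion-forcing case described in the text.
    """
    prefix = rng.randint(0, min(max_prefix, num_frames))
    noised = [rng.uniform(0.0, 1.0) for _ in range(num_frames - prefix)]
    return [0.0] * prefix + noised


if __name__ == "__main__":
    mask = frame_local_mask(15, chunk=5)
    for row in mask:
        print("".join("x" if allowed else "." for allowed in row))
    print(sample_noise_levels(8, max_prefix=4, rng=random.Random(0)))
```

Printing the mask shows a block-banded pattern: each group of five frames sees itself and the five frames before it, matching the "chunks of 5 with a frame window size of 10" example.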

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper Long-Context State-Space Video World Models is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Hot ai: Mining the Moon’s helium-3: The race fueling quantum dreams an

Humanity has long considered the Moon a place of wonder and possibility. Now it’s being talked about as a potential source for one of humankind’s most unusual and valuable materials: Helium-3. Locked into the fine dust of the lunar surface by billions of years of solar wind, this light, non-radioactive isotope has, for many years, attracted attention from tech firms, space startups, and governments because of its outsized potential.

It can help chill quantum computers to near absolute zero, improve certain medical images and national-security scanners, and, in theory, serve as an almost-clean fusion fuel. Together, those promises turn helium-3 into a decisive strategic commodity and a driver for an emerging moon-mining race. The United States and China emerge as the principal rivals. Both have placed lunar exploration high on their national agendas and explicitly tied it to future technological and strategic advantage.

Russia, too, has declared its intent to join the competition, while the European Union, India, and other smaller players are beginning to take their positions in this contest. As the world lines up for a stake in lunar resources, the question remains: who will get there first, and what will it mean for the future of technology, energy, and geopolitics?

Why helium-3 matters

Helium-3 is rare on Earth. Most of our supply comes indirectly from tritium decay in nuclear stockpiles, producing only modest quantities. Figures cited in industry discussions put annual yields from these sources at a few thousand to tens of thousands of liters, far short of what a fully scaled quantum industry might demand. By contrast, the Moon has quietly accumulated helium-3 for eons because it lacks a protective magnetic field; solar wind particles implant the isotope into the top layers of regolith.

Estimates vary, but some scientists believe the lunar surface could contain enormous absolute quantities, perhaps on the order of a million metric tons, though dispersed at low concentrations.

Why does that matter? For quantum computing, helium-3 is a workhorse behind the scenes. State-of-the-art dilution refrigerators exploit mixtures of helium-3 and helium-4 to cool qubits down to millikelvin temperatures where fragile quantum states can persist. As one cryogenics engineer put it, “It’s like 200 times colder inside a Blue Origin fridge than outer space,” underscoring how essential extreme cold is to reducing errors and making quantum machines useful. If quantum data centers scale up as companies and nations expect, demand for helium-3 could balloon well beyond what Earth can supply.

Beyond quantum, the isotope’s appeal is broader still. Helium-3 is a superb neutron absorber, useful for radiation detectors, and when hyperpolarized, it improves some MRI scans. The most intoxicating possibility, however, is fusion. Fusion reactions that use helium-3 produce mostly charged particles rather than neutrons, meaning far less long-term radioactivity in reactor materials.

Theoretical comparisons are eye-popping. On paper, tens of tons of helium-3 could deliver energy for entire nations for long periods. That futuristic prize is one reason politicians and planners are paying attention, even though workable helium-3 fusion reactors remain, for now, speculative.
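A back-of-the-envelope calculation shows where the "on paper" numbers come from. The sketch below assumes the deuterium plus helium-3 reaction, which releases roughly 18.3 MeV per fusion, and an idealized case in which every helium-3 nucleus fuses and all energy is captured. Both are assumptions for illustration; no working reactor achieves this.

```python
AVOGADRO = 6.02214076e23        # atoms per mole
MEV_TO_JOULE = 1.602176634e-13  # joules per MeV
HE3_MOLAR_MASS_G = 3.016        # grams per mole of helium-3
D_HE3_ENERGY_MEV = 18.3         # approximate energy per D + He-3 reaction (assumed fuel cycle)


def fusion_energy_joules(he3_kg: float) -> float:
    """Ideal energy release if every helium-3 nucleus fuses with deuterium."""
    atoms = he3_kg * 1000.0 / HE3_MOLAR_MASS_G * AVOGADRO
    return atoms * D_HE3_ENERGY_MEV * MEV_TO_JOULE


def joules_to_twh(joules: float) -> float:
    """Convert joules to terawatt-hours (1 TWh = 3.6e15 J)."""
    return joules / 3.6e15


if __name__ == "__main__":
    tonnes = 25.0  # hypothetical quantity standing in for "tens of tons"
    energy = fusion_energy_joules(tonnes * 1000.0)
    print(f"{tonnes:.0f} t of helium-3: {energy:.2e} J, about {joules_to_twh(energy):.0f} TWh (ideal)")
```

Under these idealized assumptions a single kilogram yields on the order of 10^14 joules, which is why even tens of tons look significant at national scale.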

Engineering the harvest

Turning lunar dust into a meaningful stream of helium-3 is not simple. The isotope is not concentrated in easy-to-access pipes or pockets. Apollo samples typically showed helium-3 concentrations measured in parts per billion. That means enormous volumes of regolith must be processed to extract useful gas.

The basic industrial recipe is straightforward on paper: excavate surface soil, heat it at high temperatures to release trapped gases, separate helium-3 from the much more abundant helium-4 and other volatiles, and store the purified gas for transport. In practice, each step poses hard engineering problems.
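The parts-per-billion figure translates into regolith tonnage with simple arithmetic. The sketch below treats helium-3 as an ideal gas at standard temperature and pressure and takes the concentration and recovery fraction as inputs; the 10 ppb value in the demo is a hypothetical illustration, not a measured figure from the article.

```python
HE3_MOLAR_MASS_G = 3.016     # grams per mole of helium-3
MOLAR_VOLUME_STP_L = 22.414  # liters per mole of ideal gas at 0 °C and 1 atm


def he3_grams_per_liter_stp() -> float:
    """Mass of one liter of helium-3 gas at STP, ideal-gas approximation."""
    return HE3_MOLAR_MASS_G / MOLAR_VOLUME_STP_L


def regolith_tonnes_for_liters(liters: float, ppb_by_mass: float, recovery: float = 1.0) -> float:
    """Tonnes of regolith to process for `liters` of helium-3 gas at STP.

    `ppb_by_mass` is the helium-3 mass fraction in parts per billion and
    `recovery` is the fraction of it the process actually extracts.
    """
    he3_grams = liters * he3_grams_per_liter_stp()
    regolith_grams = he3_grams / (ppb_by_mass * 1e-9 * recovery)
    return regolith_grams / 1e6


if __name__ == "__main__":
    ppb = 10.0  # hypothetical concentration for illustration
    print(f"1 L at {ppb:.0f} ppb: {regolith_tonnes_for_liters(1.0, ppb):.1f} t of regolith")
```

At a concentration of a few parts per billion, each liter of gas corresponds to tonnes of processed soil, and any recovery below 100 percent raises that figure proportionally.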

Marcin Frackiewicz, president of Warsaw’s TS2 SPACE, noted these limitations in his detailed review of the field. Lunar regolith is notoriously hostile to machinery. Its fine, glassy particles are highly abrasive and cling electrostatically, which in Apollo missions fouled seals and joints and stuck to spacesuits. In vacuum and one-sixth gravity, lubricants boil off, moving parts behave differently, and remote or autonomous operation is necessary because of the time delay between Earth and Moon, which makes real-time teleoperation impractical.

Powering heaters that drive hundreds of tons of soil through thermal processing will require large, reliable energy sources on the surface, whether solar concentrators or small reactors, and any mining concept has to balance mass, power draw, and reliability to be deliverable and maintainable by robotic systems.
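A rough estimate of the heating load follows from the sensible-heat relation, power = mass flow × specific heat × temperature rise. The sketch below ignores heat recovery and losses, and the specific heat and temperature rise used in the demo are hypothetical round numbers for illustration, not values from the article.

```python
def heating_power_megawatts(tonnes_per_hour: float,
                            specific_heat_j_per_kg_k: float,
                            delta_t_kelvin: float) -> float:
    """Continuous thermal power needed to heat a regolith stream, in megawatts.

    Assumes no heat recovery from spent soil and no losses.
    """
    kg_per_second = tonnes_per_hour * 1000.0 / 3600.0
    watts = kg_per_second * specific_heat_j_per_kg_k * delta_t_kelvin
    return watts / 1e6


if __name__ == "__main__":
    # Hypothetical inputs: 100 t/h throughput, 800 J/(kg*K), 700 K temperature rise.
    print(f"{heating_power_megawatts(100.0, 800.0, 700.0):.1f} MW thermal")
```

Even with generous assumptions, throughputs of around a hundred tons per hour imply megawatt-scale thermal power, which is why designs lean on solar concentrators, reactors, or recovering heat from spent soil.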

Startups are building concepts that try to thread these needles. One company, Interlune, has proposed a small, mobile harvester that scoops regolith, heats it internally, and expels spent soil as it traverses the surface. The goal is a machine light enough to be landed on a single lander but capable of processing tens to hundreds of tons per hour.

Interlune’s CEO has been explicit about the scale. To produce small quantities of helium-3 that matter on Earth, you must process vast swathes of soil, “enough lunar regolith to fill a large backyard swimming pool,” to yield only a few liters. The company has moved from lab experiments into hardware partnerships, tested sub-scale systems in reduced-gravity flights, and worked with terrestrial equipment makers to shrink conventional heavy-civil machinery into space-ready form.

Separation and purification are another critical hurdle. Even if a harvester can outgas regolith reliably, separating minute traces of helium-3 from helium-4 and other gases at low mass fractions requires sophisticated cryogenic or membrane systems. Some teams have demonstrated initial proof-of-concept separations on Earth, but making those systems robust for the lunar environment is a different challenge. Storage and safe transport back to Earth, presuming governments and markets decide that bringing helium-3 home is economical, adds further complexity.

Where we are now

Interest and investment have moved quickly from specu…

Content automatically shortened.

🔗 Source: interestingengineering.com
