📌 MAROKO133 Hot AI: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
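To make the scaling gap concrete, here is a minimal back-of-the-envelope sketch in Python. It only counts operations: query-key pairs for full self-attention versus state updates for a linear recurrent scan. The function names and the figure of 256 tokens per frame are illustrative assumptions, not values from the paper.

```python
def attention_pairs(num_tokens: int) -> int:
    """Query-key pairs scored by full self-attention: grows as n^2."""
    return num_tokens * num_tokens


def scan_updates(num_tokens: int) -> int:
    """State updates performed by a linear recurrent scan: grows as n."""
    return num_tokens


TOKENS_PER_FRAME = 256  # hypothetical value, chosen only for illustration

for frames in (16, 64, 256):
    n = frames * TOKENS_PER_FRAME
    ratio = attention_pairs(n) // scan_updates(n)
    print(f"{frames:>4} frames: {ratio}x more attention pairs than scan updates")
```

Quadrupling the context from 64 to 256 frames multiplies the attention count by 16 but the scan count by only 4, which is why long contexts become impractical for attention first.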

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

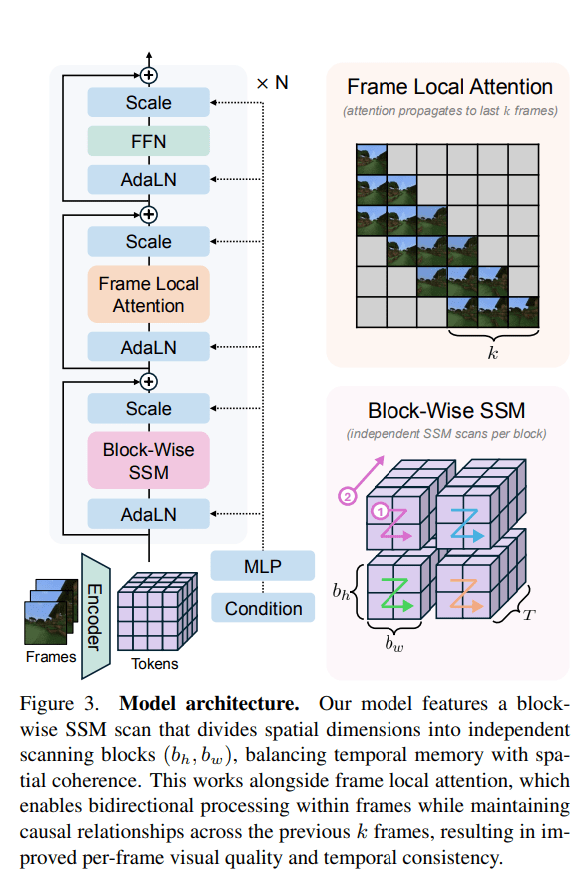

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
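As a rough illustration of the block-wise idea, the toy sketch below replaces the SSM with a scalar decaying recurrence and lays each frame out as a 1D row of tokens. It assumes that blocks partition each frame spatially and that every block runs its own scan through time, which is one plausible reading of the description above rather than the authors' implementation. The names `blockwise_scan`, `block_size`, and `decay` are hypothetical.

```python
def blockwise_scan(frames, block_size, decay=0.9):
    """Toy block-wise scan over a video.

    frames: list of frames, each a list of per-token scalars.
    Each spatial block keeps its own running state that is carried
    through time: state = decay * state + token.
    """
    tokens_per_frame = len(frames[0])
    outputs = [[0.0] * tokens_per_frame for _ in frames]
    for start in range(0, tokens_per_frame, block_size):
        stop = min(start + block_size, tokens_per_frame)
        state = 0.0  # one state per block, shared across all frames
        for t, frame in enumerate(frames):
            for p in range(start, stop):
                state = decay * state + frame[p]
                outputs[t][p] = state
    return outputs


# An impulse at frame 0, position 0; everything else is zero.
frames = [[0.0] * 8 for _ in range(4)]
frames[0][0] = 1.0

full = blockwise_scan(frames, block_size=8)     # one scan over whole frames
blocked = blockwise_scan(frames, block_size=2)  # four independent block scans

# How much of the impulse is still visible at frame 3, position 0?
print(full[3][0])     # decay ** 24
print(blocked[3][0])  # decay ** 6
```

With a full-frame scan, the impulse has to survive 8 scan steps per frame. With blocks of 2 tokens it only has to survive 2, so far more of it remains three frames later. The price in this toy is that tokens in different blocks never share a state, which is the kind of gap the dense local attention is meant to cover.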

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During long-context training, the model learns to generate frames conditioned on a prefix of the input, which pushes it to stay consistent over longer durations. When no prefix is sampled and all tokens stay noised, training becomes equivalent to diffusion forcing, which is highlighted as the special case of long-context training where the prefix length is zero. This case pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
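The two training ideas above can be sketched in a few lines of plain Python. This is a minimal sketch under stated assumptions: the helper names `sample_noise_levels` and `frame_local_mask` are hypothetical, the noise levels are drawn uniformly and independently per frame purely for illustration, and the mask is built at frame granularity with the chunk size of 5 from the example in the text.

```python
import random


def sample_noise_levels(num_frames, prefix_len, rng):
    """Per-frame noise levels for one training sample.

    Frames in the prefix stay clean (level 0.0); every later frame gets
    its own independent noise level. prefix_len == 0 leaves every frame
    noised, which is the diffusion forcing special case.
    """
    return [0.0 if t < prefix_len else rng.uniform(0.05, 1.0)
            for t in range(num_frames)]


def frame_local_mask(num_frames, chunk_size=5):
    """mask[q][k] is True when query frame q may attend to key frame k.

    A frame sees its own chunk in both directions plus the whole previous
    chunk, so the window spans 2 * chunk_size frames.
    """
    def chunk(i):
        return i // chunk_size

    return [[(chunk(q) - chunk(k)) in (0, 1) for k in range(num_frames)]
            for q in range(num_frames)]


rng = random.Random(0)
print(sample_noise_levels(8, prefix_len=3, rng=rng))  # clean prefix, noisy rest
print(sample_noise_levels(8, prefix_len=0, rng=rng))  # all noised

mask = frame_local_mask(15, chunk_size=5)
print(sum(mask[12]))  # frames visible to frame 12
```

The source mentions FlexAttention for the real implementation; this sketch does not use it and only builds a plain boolean matrix to show which frame pairs the rule allows.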

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper, Long-Context State-Space Video World Models, is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Exclusive AI: 2,400-year-old new neighborhood in Rome discovered with

On the eastern outskirts of Rome, near Via Tiburtina, researchers have uncovered an ancient road, two monumental basins, two Republican-era tombs, and a shrine possibly dedicated to Hercules. This discovery once again demonstrates that Rome still holds archaeological treasures waiting to be found.

“It is precisely contexts like this,” said excavation head Daniela Porro, “that show how the unexplored outskirts of Rome contributed to the sprawling city’s development.” In a press release, she described the modern suburbs as “repositories of profound memories” that deserve further investigation.

What is this newly discovered area in Rome? A neighborhood?

As the excavation is ongoing, archaeologists cannot confirm that yet, and so far they have found no evidence of residences. However, the wealth of archaeological finds (a funerary complex belonging to the elite, basins or pools, and a shrine believed to be dedicated to Hercules) suggests that the area had a sacred purpose. Other nearby temples also honored this famous hero, who is considered the protector of Rome.

The newly uncovered section of Rome demonstrates that the suburbs warrant further investigation to deepen understanding of the role the outskirts played in the city’s growth.

A new corner of Rome discovered

Spearheaded by the Ministry of Culture to preserve Rome’s unparalleled archaeological heritage, archaeologists began their excavations in the summer of 2022 in anticipation of urban development. Daniela Porro emphasized the importance of preventative archaeology to ensure the ethical treatment of the past while building for the future.

Under the guidance of Fabrizio Santi, the team uncovered a sprawling 2.5 acres of land featuring a road that led to a cemetery, a temple, and ceremonial or ritual pools. The artifacts date from the 5th century BCE to the 1st century CE, with some evidence from the 2nd to 3rd centuries CE.

A sacred complex with leaders laid to rest?

The road led to a small quadrangular sacellum, or religious building, measuring approximately 14.76 by 18.04 feet. Inside, archaeologists discovered fragments of female figurines and two terracotta cattle. Given its location near Via Tiburtina, they believe that the shrine belonged to the cult of Hercules.

To the east and south of the road, two monumental pools or basins were unearthed, measuring 68.90 feet by 30.18 feet and 91.86 feet by 32.81 feet, as per a press release.

Both basins featured access ramps paved with large stone blocks and concrete slabs, as reported by Archaeology News. The southern pool had a notable additional ramp that led to the pool’s bottom. However, the absence of inlet and outlet channels makes it impossible to determine their exact use and function.

Interestingly, another basin recently discovered in Gabii showed structural similarities, leading archaeologists to hypothesize that these pools may have served a sacred purpose. Alternatively, as described by Archaeology News, these pools could indicate some form of water management. Based on ceramics found scattered across the site, archaeologists estimate a date of abandonment around the 2nd century CE.

This exciting new corner of Rome supports an emerging understanding of the eternal city, revealing that its growth was dependent on its suburbs and altering our comprehension of how Rome functioned and thrived.

🔗 Source: interestingengineering.com

🤖 MAROKO133 Note

This article is an automated summary drawn from several trusted sources. We pick trending topics so you always stay up to date.
