📌 MAROKO133 Breaking ai: Chinese firm unveils new pyramid-shaped PC that runs AI l

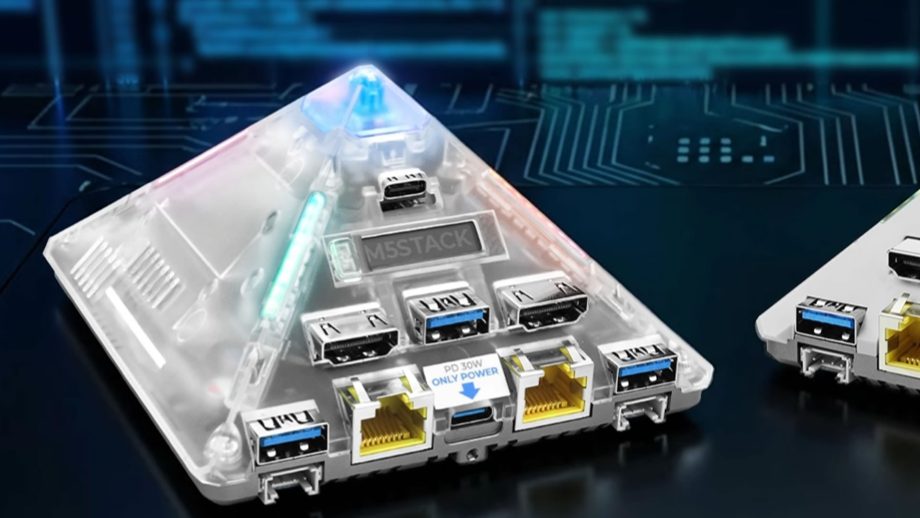

M5Stack has unveiled the AI Pyramid Pro, a pyramid-shaped desktop computer rated at 24 TOPS. Apart from its novel design, the hardware is built specifically to run artificial intelligence (AI) locally rather than in the cloud.

To this end, it can be thought of more as an “AI appliance” than a traditional PC. It’s shaped like a pyramid mostly for fun and branding, but inside it’s serious edge-AI hardware.

The device is compact and comes with 8GB RAM, strong video encode/decode, multi-camera support, rich I/O, and low-power operation. It also comes with 32GB of onboard eMMC storage, and offers dual HDMI 2.0 ports, four USB-A 3.0 ports, and two USB Type-C ports.

As for its declared computing power, 24 TOPS is respectable for a device of this class. TOPS stands for “tera operations per second,” so the rating corresponds to a peak of 24 trillion AI math operations per second.

AI power in a pyramid shape

While that’s not close to gaming-PC power, it’s still very strong for on-device AI, especially at its declared price point of $250. According to reports, this new piece of kit has been designed to specialize in real-time computer vision, speech recognition, and object detection.

It is also optimized to run small to medium LLMs (quantized, not ChatGPT-scale) and multiple camera feeds at once. To condense all this into a small device, M5Stack has dispensed with a discrete graphics processing unit (GPU) and relied instead on an Arm-based central processing unit (CPU).

This is very efficient and requires very little power to function. The device also includes a purpose-built neural processor, which is fast for AI but slow for everything else.

This makes the pyramid computer fast for AI inference, quiet, and relatively low power to run. It is not, however, good enough for gaming, heavy software development workloads, or general desktop usage.
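The point about quantized LLMs can be made concrete with some rough arithmetic. The sketch below estimates the memory taken by model weights alone at different precisions and compares it with the device's 8GB of RAM. The model sizes (3B and 7B parameters) and bit widths are illustrative assumptions, not figures from M5Stack, and the estimate ignores the KV cache, the operating system, and runtime overhead, so real headroom is smaller.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


RAM_GB = 8  # RAM figure quoted in the article

# Hypothetical model sizes and precisions, chosen only for illustration.
for params, bits in [(3, 4), (7, 4), (7, 16)]:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < RAM_GB else "does not fit"
    print(f"{params}B parameters at {bits}-bit: {size:.1f} GB of weights, {verdict} in {RAM_GB} GB")
```

Under these assumptions a 7B-parameter model needs about 3.5 GB at 4-bit precision but about 14 GB at 16-bit, which is why quantization matters on a device of this size.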

One of its standout features is its reported ability to decode up to sixteen 1080p video streams. This means it can take in feeds from multiple cameras and analyze them simultaneously.

This should mean it can readily perform detection, tracking, and face recognition. That would make it ideal for smart security systems, AI camera hubs, or as an edge gateway for factories or other buildings.
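To put the 24 TOPS rating and the sixteen-stream figure side by side, the following sketch works out a theoretical per-frame compute budget. The frame rate of 30 fps is an assumption (the article does not state one), and the result is an upper bound based on peak throughput. Sustained performance on real models is typically lower, and the article only claims that sixteen streams can be decoded, not that every frame of every stream is analyzed.

```python
PEAK_OPS_PER_SECOND = 24 * 10**12  # 24 TOPS, the declared peak rating
STREAMS = 16                       # reported maximum number of 1080p streams
ASSUMED_FPS = 30                   # assumption: frame rate is not given in the article


def ops_per_frame(peak_ops: int, streams: int, fps: int) -> int:
    """Peak operations available per frame if every frame of every stream is analyzed."""
    return peak_ops // (streams * fps)


budget = ops_per_frame(PEAK_OPS_PER_SECOND, STREAMS, ASSUMED_FPS)
print(f"{budget / 1e9:.0f} billion operations per frame")
```

Under these assumptions the budget is about 50 billion operations per frame. Analyzing only every second or third frame would raise that budget proportionally.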

Not for the general consumer

The ability to host an AI locally (rather than in the cloud) also has some interesting benefits. It offers, for example, much improved privacy, as captured video and audio never leave the local network.

The new computer also provides much lower latency, since requests do not make a round trip to a remote server, and it avoids the per-use fees common to cloud services. It can also work without the need for a constant internet connection.

This should, in theory, make it very attractive for home assistant-type setups, small businesses, and engineers prototyping edge AI products. The device also comes with a special microcontroller that can handle power monitoring, buttons, a status screen, and an RGB LED ring.

Given the very specialist nature of the new pyramid computer, it should come as no surprise that it is not aimed at general release. Rather, its target audience will most likely be Edge-AI developers, embedded engineers, and makers doing serious AI projects.

It should also appeal to anyone who wants Jetson-style capability without Nvidia pricing.

🔗 Source: interestingengineering.com

📌 MAROKO133 Update ai: Researchers from PSU and Duke introduce “Multi-Agent System

Share My Research is Synced’s column that welcomes scholars to share their own research breakthroughs with over 2M global AI enthusiasts. Beyond technological advances, Share My Research also calls for interesting stories behind the research and exciting research ideas.

Meet the author

Institutions: Penn State University, Duke University, Google DeepMind, University of Washington, Meta, Nanyang Technological University, and Oregon State University. The co-first authors are Shaokun Zhang of Penn State University and Ming Yin of Duke University.

In recent years, LLM Multi-Agent systems have garnered widespread attention for their collaborative approach to solving complex problems. However, it’s a common scenario for these systems to fail at a task despite a flurry of activity. This leaves developers with a critical question: which agent, at what point, was responsible for the failure? Sifting through vast interaction logs to pinpoint the root cause feels like finding a needle in a haystack—a time-consuming and labor-intensive effort.

This is a familiar frustration for developers. In increasingly complex Multi-Agent systems, failures are not only common but also incredibly difficult to diagnose due to the autonomous nature of agent collaboration and long information chains. Without a way to quickly identify the source of a failure, system iteration and optimization grind to a halt.

To address this challenge, researchers from Penn State University and Duke University, in collaboration with institutions including Google DeepMind, have introduced the novel research problem of “Automated Failure Attribution.” They have constructed the first benchmark dataset for this task, Who&When, and have developed and evaluated several automated attribution methods. This work not only highlights the complexity of the task but also paves a new path toward enhancing the reliability of LLM Multi-Agent systems.

The paper has been accepted as a Spotlight presentation at the top-tier machine learning conference, ICML 2025, and the code and dataset are now fully open-source.

Paper: https://arxiv.org/pdf/2505.00212

Code: https://github.com/mingyin1/Agents_Failure_Attribution

Dataset: https://huggingface.co/datasets/Kevin355/Who_and_When

Research Background and Challenges

LLM-driven Multi-Agent systems have demonstrated immense potential across many domains. However, these systems are fragile; errors by a single agent, misunderstandings between agents, or mistakes in information transmission can lead to the failure of the entire task.

Currently, when a system fails, developers are often left with manual and inefficient methods for debugging:

- Manual Log Archaeology: Developers must manually review lengthy interaction logs to find the source of the problem.

- Reliance on Expertise: The debugging process is highly dependent on the developer’s deep understanding of the system and the task at hand.

This “needle in a haystack” approach to debugging is not only inefficient but also severely hinders rapid system iteration and the improvement of system reliability. There is an urgent need for an automated, systematic method to pinpoint the cause of failures, effectively bridging the gap between “evaluation results” and “system improvement.”

Core Contributions

This paper makes several groundbreaking contributions to address the challenges above:

1. Defining a New Problem: The paper is the first to formalize “automated failure attribution” as a specific research task. This task is defined by identifying the failure-responsible agent and the decisive error step that led to the task’s failure.

2. Constructing the First Benchmark Dataset, Who&When: This dataset includes a wide range of failure logs collected from 127 LLM Multi-Agent systems, which were either algorithmically generated or hand-crafted by experts to ensure realism and diversity. Each failure log is accompanied by fine-grained human annotations for:

- Who: The agent responsible for the failure.

- When: The specific interaction step where the decisive error occurred.

- Why: A natural language explanation of the cause of the failure.

3. Exploring Initial “Automated Attribution” Methods: Using the Who&When dataset, the paper designs and assesses three distinct methods for automated failure attribution:

- All-at-Once: This method provides the LLM with the user query and the complete failure log, asking it to identify the responsible agent and the decisive error step in a single pass. While cost-effective, it may struggle to pinpoint precise errors in long contexts.

- Step-by-Step: This approach mimics manual debugging by having the LLM review the interaction log sequentially, making a judgment at each step until the error is found. It is more precise at locating the error step but incurs higher costs and risks accumulating errors.

- Binary Search: A compromise between the first two methods, this strategy repeatedly divides the log in half, using the LLM to determine which segment contains the error. It then recursively searches the identified segment, offering a balance of cost and performance.
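The control flow of the three methods can be sketched in a few lines of Python. This is an illustrative sketch, not the authors' implementation: the function names, the log representation, and the three judge callables are all hypothetical. In practice each judge would wrap a prompt to an LLM; here they are replaced by deterministic stand-ins that look for an "ERROR" marker so the example runs without any model. The sketch also assumes a non-empty failure log.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, str]     # (agent name, message content)
Log = List[Step]
Verdict = Tuple[str, int]  # (responsible agent, index of the decisive error step)


def all_at_once(query: str, log: Log, locate: Callable[[str, Log], int]) -> Verdict:
    """One judge call over the complete log."""
    step = locate(query, log)
    return log[step][0], step


def step_by_step(query: str, log: Log, is_error: Callable[[str, Log], bool]) -> Verdict:
    """One judge call per step; stop at the first step judged to be the decisive error."""
    for i in range(len(log)):
        if is_error(query, log[: i + 1]):  # judge sees the log up to and including step i
            return log[i][0], i
    raise ValueError("judge flagged no step")


def binary_search(query: str, log: Log,
                  error_in: Callable[[str, Log, int, int], bool]) -> Verdict:
    """Halve the candidate range until a single step remains."""
    lo, hi = 0, len(log) - 1
    while lo < hi:
        mid = (lo + hi) // 2
        if error_in(query, log, lo, mid):  # is the decisive error within steps lo..mid?
            hi = mid
        else:
            lo = mid + 1
    return log[lo][0], lo


# Deterministic stand-ins for LLM judges (hypothetical, for demonstration only).
def locate_stub(query: str, log: Log) -> int:
    return next(i for i, (_, text) in enumerate(log) if "ERROR" in text)


def is_error_stub(query: str, prefix: Log) -> bool:
    return "ERROR" in prefix[-1][1]


def error_in_stub(query: str, log: Log, lo: int, hi: int) -> bool:
    return any("ERROR" in text for _, text in log[lo : hi + 1])


demo_log: Log = [
    ("Planner", "plan the search"),
    ("WebSurfer", "open the page"),
    ("Coder", "ERROR: applied the wrong formula"),
    ("Verifier", "accept the result"),
]

print(all_at_once("demo query", demo_log, locate_stub))
print(step_by_step("demo query", demo_log, is_error_stub))
print(binary_search("demo query", demo_log, error_in_stub))
```

In this sketch, for a log of n steps, `all_at_once` makes one judge call, `step_by_step` makes up to n, and `binary_search` makes roughly log2(n), which mirrors the cost trade-off described above. With real LLM judges the three methods would not necessarily agree, since each judge call can be wrong.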

Experimental Results and Key Findings

Experiments were conducted in two settings: one where the LLM knows the ground truth answer to the problem the Multi-Agent system is trying to solve (With Ground Truth) and one where it does not (Without Ground Truth). The primary model used was GPT-4o, though other models were also tested. The systematic evaluation of these methods on the Who&When dataset yielded several important insights:

- A Long Way to Go: Current methods are far from perfect. Even the best-performing single method achieved an accuracy of only about 53.5% in identifying the responsible agent and a mere 14.2% in pinpointing the exact error step. Some methods performed even worse than random guessing, underscoring the difficulty of the task.

- No “All-in-One” Solution: Different methods excel at different aspects of the problem. The All-at-Once method is better at identifying “Who,” while the Step-by-Step method is more effective at determining “When.” The Binary Search method provides a middle-ground performance.

- Hybrid Approaches Show Promise but at a High Cost: The researchers found that combining different methods, such as using the All-at-Once approach to identify a potential agent and then applying the Step-by-Step method to find the error, can improve overall performance. However, this comes with a significant increase in computational cost.

- State-of-the-Art Models Struggle: Surprisingly, even the most advanced reasoning m…
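The hybrid strategy and the two accuracy figures mentioned in these findings can be illustrated with a short sketch. It is not the authors' code: the function names and judge callables are hypothetical, the judges are deterministic stand-ins for LLM calls, and restricting the second stage to the chosen agent's own steps is one plausible reading of the hybrid, stated here as an assumption. The scoring function simply counts exact matches against the annotated agent ("who") and step ("when").

```python
from typing import Callable, List, Optional, Tuple

Step = Tuple[str, str]  # (agent name, message content)
Log = List[Step]
Prediction = Tuple[str, Optional[int]]  # (agent, step index or None)


def hybrid(query: str, log: Log,
           pick_agent: Callable[[str, Log], str],
           is_error: Callable[[str, Log], bool]) -> Prediction:
    """Stage 1: one whole-log call picks the agent. Stage 2: check only that agent's steps."""
    agent = pick_agent(query, log)
    for i, (name, _) in enumerate(log):
        if name == agent and is_error(query, log[: i + 1]):
            return agent, i
    return agent, None  # agent chosen, but no step was flagged


def accuracy(preds: List[Prediction], golds: List[Tuple[str, int]]) -> Tuple[float, float]:
    """Return (agent-level accuracy, step-level accuracy) as fractions of exact matches."""
    n = len(golds)
    agent_hits = sum(p[0] == g[0] for p, g in zip(preds, golds))
    step_hits = sum(p[1] == g[1] for p, g in zip(preds, golds))
    return agent_hits / n, step_hits / n


# Deterministic stand-ins for LLM judges (hypothetical, for demonstration only).
def pick_agent_stub(query: str, log: Log) -> str:
    return next(name for name, text in log if "ERROR" in text)


def is_error_stub(query: str, prefix: Log) -> bool:
    return "ERROR" in prefix[-1][1]


demo_log: Log = [
    ("Planner", "plan the task"),
    ("Coder", "ERROR: bad formula"),
    ("Verifier", "accept the result"),
]

pred = hybrid("demo query", demo_log, pick_agent_stub, is_error_stub)
print(pred)

# Toy scoring: two predictions against two made-up annotations.
print(accuracy([pred, ("Planner", 0)], [("Coder", 1), ("Planner", 2)]))
```

The toy scoring at the end uses made-up annotations purely to show how agent-level and step-level accuracy can diverge. It does not reproduce any result from the paper.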

Content automatically shortened.

🔗 Source: syncedreview.com
