📌 MAROKO133 Exclusive AI: Death can’t destroy a black hole: 7D model reveals remna

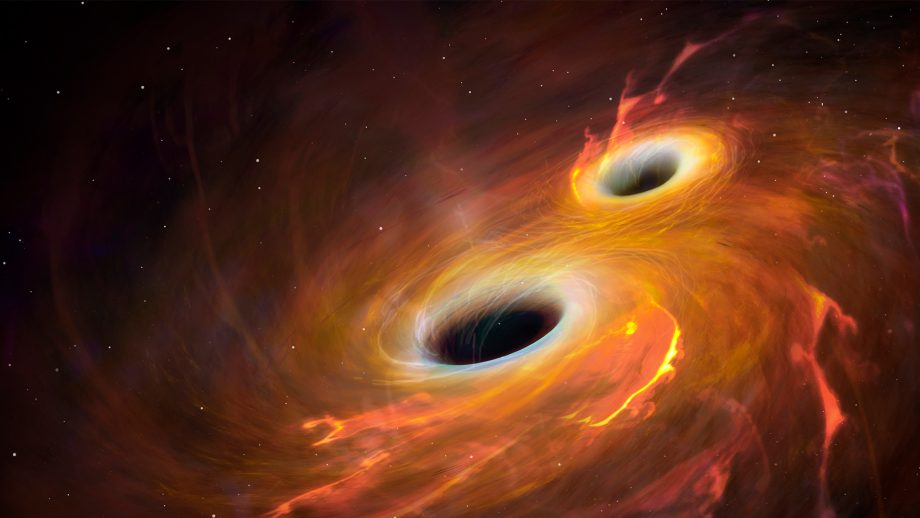

Stephen Hawking showed in the 1970s that black holes are not completely black. They slowly emit radiation and shrink over time, eventually disappearing. However, there was a problem with this explanation.

If a black hole evaporates completely, what happens to all the information about the matter it swallowed? Quantum physics says information can never be destroyed, yet black holes seem to do exactly that. This contradiction is famously known as the black hole information paradox.

“The black hole information paradox represents one of the most significant challenges in modern theoretical physics, raising questions about the compatibility between quantum mechanics and general relativity,” the study authors note.

Now, a new study offers a way out of this problem. It suggests that black holes never fully vanish. Instead, they leave behind tiny, stable remnants that store information—and surprisingly, the same idea may also explain how fundamental particles get their mass.

A twisting spacetime and a force that halts the end of a black hole

To solve this paradox, the researchers moved beyond the usual picture of gravity. In standard general relativity, spacetime can bend under the influence of mass and energy.

However, the theory used in this study, called Einstein–Cartan theory, allows spacetime to do more than just bend—it can also twist. This twisting, known as torsion, becomes important at extremely small scales and very high densities.

The team explored this idea in a universe with seven dimensions, instead of the four we experience. They used a special mathematical structure called a G2-manifold with torsion, which provides a consistent way to describe how these extra dimensions behave. While this sounds abstract, the physical consequence is surprisingly clear.

As matter collapses inside a black hole and densities rise toward the Planck scale, the torsion of spacetime begins to generate a repulsive effect. This force pushes outward, counteracting the inward pull of gravity. “The existence of a repulsive force at Planckian densities dynamically halts the final stage of Hawking evaporation,” the study authors said.

Instead of collapsing indefinitely or evaporating completely through Hawking radiation, the black hole reaches a stable state. “This leads to the formation of a stable remnant with a predicted mass of approximately 9×10⁻⁴¹ kg,” the study authors added.

This changes the fate of black holes entirely. If they do not vanish, then the information they contain does not need to disappear either.

A 7-dimensional memory hidden in black hole remnants

The next question is where the information actually resides. According to the study, it is encoded in the internal structure of the remnant through what physicists call quasi-normal modes. These are the natural patterns of vibration of the object, similar to how a bell rings after being struck.

In this model, those vibrations occur in the torsion field within the remnant’s geometry. Each vibration pattern can carry quantum information, effectively turning the remnant into a storage system.

The main idea here is that all the information that fell into the original black hole becomes trapped and encoded in these long-lived oscillations. The scale of this storage is also enormous. For instance, a remnant formed from a black hole with the mass of our Sun could store about 1.515 × 10⁷⁷ qubits of information.

This matches the amount needed to preserve everything that would otherwise be lost during evaporation, “preventing the complete disappearance of the black hole, and thus resolving the paradox without violating fundamental principles of physics,” the study authors said.
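The storage figure quoted above can be sanity-checked with a short calculation. The article does not say how the number was derived; the sketch below assumes it corresponds to the standard Bekenstein-Hawking entropy of a Schwarzschild black hole, S/k_B = 4πGM²/(ħc), converted from nats to bits. The function name and the rounded constants are illustrative choices, not taken from the study.

```python
import math

# Physical constants in SI units (rounded reference values).
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
HBAR = 1.054571817e-34   # reduced Planck constant, J s
C = 2.99792458e8         # speed of light, m/s
M_SUN = 1.98847e30       # solar mass, kg


def bekenstein_hawking_bits(mass_kg: float) -> float:
    """Bekenstein-Hawking entropy of a Schwarzschild black hole, in bits.

    Assumption: the article's qubit count is this entropy divided by ln 2.
    """
    entropy_nats = 4.0 * math.pi * G * mass_kg**2 / (HBAR * C)
    return entropy_nats / math.log(2.0)


bits = bekenstein_hawking_bits(M_SUN)
print(f"{bits:.2e} bits for one solar mass")
```

Under these assumptions the result comes out at about 1.5 × 10⁷⁷ bits, the same order as the figure quoted from the study. Because the entropy grows with the square of the mass, a black hole ten times heavier would need about a hundred times more storage.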

The findings also connect black hole physics to particle physics. When the researchers reduced their seven-dimensional model to four dimensions (the universe we observe), they found that the same torsion field naturally produces an energy scale of about 246 GeV.

This matches the electroweak scale set by the Higgs field, whose vacuum expectation value of about 246 GeV is responsible for giving mass to fundamental particles. In simple terms, the model ties the geometric feature that prevents black holes from disappearing to the origin of particle mass.

A theory beyond experiments—for now, but not beyond reach

One reason extra dimensions have not been observed is that the energy required to probe them is far beyond current technology.

For instance, the study predicts that particles linked to these dimensions would have masses around 8.6 × 10¹⁵ GeV, which is hundreds of billions of times higher than the 13.6 TeV collision energy of the Large Hadron Collider. This makes direct detection impossible for now.

However, the theory is not beyond testing. The gravitational effects of the tiny black hole remnants it describes might be detectable in astrophysical observations. Future work will likely focus on refining the model and searching for these signals.

For now, the study offers a compelling possibility that black holes may not be the end of information after all. Instead, they could be nature’s most fascinating storage devices, secretly preserving the history of everything that has ever fallen into them.

The study is published in the journal General Relativity and Gravitation.

🔗 Source: interestingengineering.com

📌 MAROKO133 Update AI: Which Agent Causes Task Failures and When? Researchers from

Meet the authors

Institutions: Penn State University, Duke University, Google DeepMind, University of Washington, Meta, Nanyang Technological University, and Oregon State University. The co-first authors are Shaokun Zhang of Penn State University and Ming Yin of Duke University.

In recent years, LLM Multi-Agent systems have garnered widespread attention for their collaborative approach to solving complex problems. However, it’s a common scenario for these systems to fail at a task despite a flurry of activity. This leaves developers with a critical question: which agent, at what point, was responsible for the failure? Sifting through vast interaction logs to pinpoint the root cause feels like finding a needle in a haystack—a time-consuming and labor-intensive effort.

This is a familiar frustration for developers. In increasingly complex Multi-Agent systems, failures are not only common but also incredibly difficult to diagnose due to the autonomous nature of agent collaboration and long information chains. Without a way to quickly identify the source of a failure, system iteration and optimization grind to a halt.

To address this challenge, researchers from Penn State University and Duke University, in collaboration with institutions including Google DeepMind, have introduced the novel research problem of “Automated Failure Attribution.” They have constructed the first benchmark dataset for this task, Who&When, and have developed and evaluated several automated attribution methods. This work not only highlights the complexity of the task but also paves a new path toward enhancing the reliability of LLM Multi-Agent systems.

The paper has been accepted as a Spotlight presentation at the top-tier machine learning conference, ICML 2025, and the code and dataset are now fully open-source.

Paper: https://arxiv.org/pdf/2505.00212

Code: https://github.com/mingyin1/Agents_Failure_Attribution

Dataset: https://huggingface.co/datasets/Kevin355/Who_and_When

Research Background and Challenges

LLM-driven Multi-Agent systems have demonstrated immense potential across many domains. However, these systems are fragile; errors by a single agent, misunderstandings between agents, or mistakes in information transmission can lead to the failure of the entire task.

Currently, when a system fails, developers are often left with manual and inefficient methods for debugging:

– Manual Log Archaeology: Developers must manually review lengthy interaction logs to find the source of the problem.

– Reliance on Expertise: The debugging process is highly dependent on the developer’s deep understanding of the system and the task at hand.

This “needle in a haystack” approach to debugging is not only inefficient but also severely hinders rapid system iteration and the improvement of system reliability. There is an urgent need for an automated, systematic method to pinpoint the cause of failures, effectively bridging the gap between “evaluation results” and “system improvement.”

Core Contributions

This paper makes several groundbreaking contributions to address the challenges above:

1. Defining a New Problem: The paper is the first to formalize “automated failure attribution” as a specific research task. The task is to identify the failure-responsible agent and the decisive error step that led to the task’s failure.

2. Constructing the First Benchmark Dataset, Who&When: This dataset includes a wide range of failure logs collected from 127 LLM Multi-Agent systems, which were either algorithmically generated or hand-crafted by experts to ensure realism and diversity. Each failure log is accompanied by fine-grained human annotations for:

– Who: The agent responsible for the failure.

– When: The specific interaction step where the decisive error occurred.

– Why: A natural language explanation of the cause of the failure.

3. Exploring Initial “Automated Attribution” Methods: Using the Who&When dataset, the paper designs and assesses three distinct methods for automated failure attribution:

– All-at-Once: This method provides the LLM with the user query and the complete failure log, asking it to identify the responsible agent and the decisive error step in a single pass. While cost-effective, it may struggle to pinpoint precise errors in long contexts.

– Step-by-Step: This approach mimics manual debugging by having the LLM review the interaction log sequentially, making a judgment at each step until the error is found. It is more precise at locating the error step but incurs higher costs and risks accumulating errors.

– Binary Search: A compromise between the first two methods, this strategy repeatedly divides the log in half, using the LLM to determine which segment contains the error. It then recursively searches the identified segment, offering a balance of cost and performance.
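The control flow of the three methods can be sketched as follows. This is a minimal illustration, not the authors' implementation: the `Step` and `Attribution` types, the function names, and the judge callables are all hypothetical, and each judge stands in for an LLM call. The toy judges at the end simply look for the marker "BUG" so the sketch runs without a model.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    agent: str    # name of the agent that produced this step
    content: str  # message or action text


@dataclass
class Attribution:
    agent: str  # "Who": the failure-responsible agent
    step: int   # "When": 0-based index of the decisive error step


# Hypothetical judge signatures; in practice each would wrap an LLM call.
WholeLogJudge = Callable[[str, List[Step]], Attribution]
PrefixJudge = Callable[[str, List[Step]], bool]
HalfJudge = Callable[[str, List[Step], List[Step]], bool]


def all_at_once(query: str, log: List[Step], judge: WholeLogJudge) -> Attribution:
    """One judge call that sees the query and the complete log."""
    return judge(query, log)


def step_by_step(query: str, log: List[Step],
                 is_decisive_error: PrefixJudge) -> Optional[Attribution]:
    """Walk the log in order; stop at the first step the judge flags."""
    for i, step in enumerate(log):
        if is_decisive_error(query, log[: i + 1]):
            return Attribution(step.agent, i)
    return None  # the judge never flagged a step


def binary_search(query: str, log: List[Step],
                  error_in_left: HalfJudge) -> Attribution:
    """Halve the candidate range until a single step remains."""
    if not log:
        raise ValueError("cannot attribute a failure in an empty log")
    lo, hi = 0, len(log)
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if error_in_left(query, log[lo:mid], log[mid:hi]):
            hi = mid
        else:
            lo = mid
    return Attribution(log[lo].agent, lo)


# Toy log and marker-based judges (assumptions for demonstration only).
log = [
    Step("planner", "split the task into two parts"),
    Step("coder", "wrote the parser"),
    Step("coder", "BUG: read the wrong file"),
    Step("verifier", "looks fine"),
]


def toy_whole(query: str, steps: List[Step]) -> Attribution:
    i = next(k for k, s in enumerate(steps) if "BUG" in s.content)
    return Attribution(steps[i].agent, i)


res_all = all_at_once("q", log, toy_whole)
res_sbs = step_by_step("q", log, lambda q, prefix: "BUG" in prefix[-1].content)
res_bin = binary_search(
    "q", log, lambda q, left, right: any("BUG" in s.content for s in left)
)
print(res_all, res_sbs, res_bin)
```

With perfect toy judges all three functions agree. The cost trade-off described above shows up in the number of judge calls: one for All-at-Once, up to one per step for Step-by-Step, and roughly log₂ of the log length for Binary Search.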

Experimental Results and Key Findings

Experiments were conducted in two settings: one where the LLM knows the ground truth answer to the problem the Multi-Agent system is trying to solve (With Ground Truth) and one where it does not (Without Ground Truth). The primary model used was GPT-4o, though other models were also tested. The systematic evaluation of these methods on the Who&When dataset yielded several important insights:

– A Long Way to Go: Current methods are far from perfect. Even the best-performing single method achieved an accuracy of only about 53.5% in identifying the responsible agent and a mere 14.2% in pinpointing the exact error step. Some methods performed even worse than random guessing, underscoring the difficulty of the task.

– No “All-in-One” Solution: Different methods excel at different aspects of the problem. The All-at-Once method is better at identifying “Who,” while the Step-by-Step method is more effective at determining “When.” The Binary Search method provides a middle-ground performance.

– Hybrid Approaches Show Promise but at a High Cost: The researchers found that combining different methods, such as using the All-at-Once approach to identify a potential agent and then applying the Step-by-Step method to find the error, can improve overall performance. However, this comes with a significant increase in computational cost.

– State-of-the-Art Models Struggle: Surprisingly, even the most advanced reasoning models, like OpenAI o1 and DeepSeek R1, find this task challenging. This h…

Content automatically shortened.

🔗 Source: syncedreview.com
