📌 MAROKO133 Hot ai: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
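
To make the scaling argument concrete, the sketch below counts the query-key pairs a dense causal attention layer scores and compares that with the number of state updates in a recurrent scan. The figure of 256 tokens per frame and both function names are illustrative assumptions, not values or names from the paper.

```python
def attention_pair_count(num_tokens: int) -> int:
    """Query-key pairs scored by dense causal attention over num_tokens tokens."""
    # Token i attends to tokens 0..i, so the total is 1 + 2 + ... + n.
    return num_tokens * (num_tokens + 1) // 2


def scan_step_count(num_tokens: int) -> int:
    """State updates performed by a recurrent (SSM-style) scan: one per token."""
    return num_tokens


# Hypothetical tokenization: 256 tokens per frame (an assumption for illustration).
TOKENS_PER_FRAME = 256

for frames in (16, 64, 256):
    n = frames * TOKENS_PER_FRAME
    pairs = attention_pair_count(n)
    steps = scan_step_count(n)
    print(f"{frames:4d} frames: {pairs:,} attention pairs vs {steps:,} scan steps")
```

Quadrupling the number of frames multiplies the attention pair count by roughly sixteen, while the scan step count only quadruples. This counts operations only and ignores constant factors such as state size.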

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

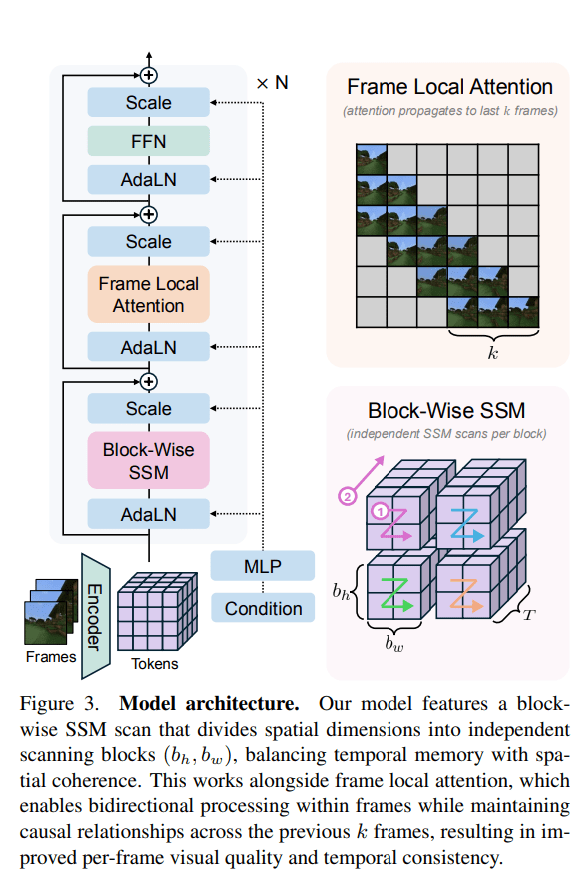

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
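
The trade-off behind block-wise scanning can be illustrated with a toy example. The sketch below is not the authors' implementation: it swaps in a simple scalar-decay linear recurrence for the SSM, assumes frame-major token order inside each spatial tile, and uses hypothetical names (`linear_scan`, `blockwise_scan`). It only shows how a smaller tile shortens the scan distance between the same location in consecutive frames, at the cost of cutting off information flow between tiles.

```python
import numpy as np


def linear_scan(x: np.ndarray, decay: float = 0.9) -> np.ndarray:
    """Toy SSM stand-in: h[t] = decay * h[t-1] + x[t], scanned along axis 0."""
    h = np.zeros_like(x[0])
    out = np.empty_like(x)
    for t in range(x.shape[0]):
        h = decay * h + x[t]
        out[t] = h
    return out


def blockwise_scan(video: np.ndarray, block: int, decay: float = 0.9) -> np.ndarray:
    """Scan each (block x block) spatial tile independently across all frames.

    video has shape (T, H, W, C); H and W must be divisible by block.
    """
    T, H, W, C = video.shape
    assert H % block == 0 and W % block == 0
    out = np.empty_like(video)
    for i in range(0, H, block):
        for j in range(0, W, block):
            tile = video[:, i:i + block, j:j + block, :]   # (T, b, b, C)
            seq = tile.reshape(T * block * block, C)        # frame-major token order
            scanned = linear_scan(seq, decay)
            out[:, i:i + block, j:j + block, :] = scanned.reshape(T, block, block, C)
    return out


# A single impulse at frame 0, position (0, 0), in an otherwise empty video.
video = np.zeros((4, 4, 4, 1))
video[0, 0, 0, 0] = 1.0

full = blockwise_scan(video, block=4)    # one tile covering the whole frame
tiled = blockwise_scan(video, block=2)   # four 2x2 tiles

# How much of the impulse survives at the same position one frame later?
print(f"full-frame scan: {full[1, 0, 0, 0]:.4f}")
print(f"2x2 block scan:  {tiled[1, 0, 0, 0]:.4f}")
# How much of the impulse reaches a position in a different tile of frame 0?
print(f"cross-tile (full):  {full[0, 0, 2, 0]:.4f}")
print(f"cross-tile (tiled): {tiled[0, 0, 2, 0]:.4f}")
```

With 2x2 tiles the impulse decays over only 4 scan steps before reaching the next frame instead of 16, so far more of it survives. The same tiling leaves the position in the neighboring tile with no signal at all, which is the gap the dense local attention described above is meant to cover.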

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model generates frames conditioned on a prefix of the input, which forces it to learn to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
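
Both strategies can be sketched at the frame level in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the paper's code: `sample_noise_levels` assumes prefix frames are left un-noised (level 0) while every other frame draws an independent noise level, and `frame_local_mask` builds the chunked mask as a plain boolean array rather than through FlexAttention. Both function names are hypothetical.

```python
import numpy as np


def sample_noise_levels(num_frames: int, prefix_len: int,
                        rng: np.random.Generator) -> np.ndarray:
    """Per-frame noise levels in [0, 1): zero for the prefix, independent elsewhere.

    prefix_len == 0 noises every frame, the diffusion forcing special case.
    """
    levels = rng.uniform(0.0, 1.0, size=num_frames)
    levels[:prefix_len] = 0.0   # assumption: prefix frames stay un-noised
    return levels


def frame_local_mask(num_frames: int, chunk: int = 5) -> np.ndarray:
    """Boolean mask M[q, k]: True where query frame q may attend to key frame k.

    Frames attend bidirectionally within their own chunk and to every frame
    in the previous chunk, giving a window of up to 2 * chunk frames.
    """
    chunk_id = np.arange(num_frames) // chunk
    q = chunk_id[:, None]
    k = chunk_id[None, :]
    return (k == q) | (k == q - 1)


rng = np.random.default_rng(0)
print(sample_noise_levels(8, prefix_len=3, rng=rng).round(2))

mask = frame_local_mask(15, chunk=5)
print(mask.astype(int))
```

With chunks of 5, each frame after the first chunk sees exactly 10 frames, matching the window size quoted above. A real model would expand this frame-level mask to token level before applying it.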

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Exclusive ai: US and Israel launch major strikes on Iran over nuclear

In a video message released on social media early Saturday, President Donald Trump announced that the US had initiated what he described as major combat operations against Iran, framing the move as a preemptive effort to neutralize escalating security risks.

He argued that Tehran was advancing long-range missile capabilities capable of threatening European allies, US forces stationed abroad, and potentially the American mainland. According to Trump, the objective of the strikes is to defend the American people by eliminating what he called imminent threats posed by the Iranian regime.

He further pledged to dismantle Iran’s nuclear and military infrastructure and reiterated that the US will ensure Iran never acquires a nuclear weapon.

Explosions reported in Tehran and other Iranian cities

Reports of explosions erupted across several Iranian cities, including the capital, Tehran, following coordinated strikes by Israel and the United States early Saturday. According to two sources, the US military is preparing for operations that could continue for several days.

Trump characterized the campaign as massive and ongoing, warning that American lives could be at risk in the process. He framed the strikes as a necessary measure to counter the threats posed by Iran’s missile and nuclear programs, emphasizing that the military action aims to protect both US personnel abroad and the homeland from potential attacks.

“For 47 years, the Iranian regime has chanted ‘Death to America’ and waged an unending campaign of bloodshed and mass murder targeting the United States, our troops and the innocent people in many, many countries,” Trump said in his address.

Trump also outlined a series of complaints against Iran, citing its support for regional proxy groups that have threatened US forces and commercial shipping, as well as its backing of Hamas. He framed these actions as part of a broader pattern of hostile behavior that, in his view, justified the military strikes and underscored the need to hold Tehran accountable for regional destabilization.

Israel braces as Iran fires dozens of missiles in retaliation

Israeli military officials said the initial phase of the operation could extend over several days, with the first strikes carried out during daylight hours to catch Iran by surprise. In response, Iran reportedly launched dozens of ballistic missiles toward Israel, according to Nour News, an outlet affiliated with the Islamic Revolutionary Guard Corps.

Iranian authorities warned that a retaliatory response would be crushing. Sirens sounded across Israel as a precautionary measure, alerting the public to potential missile attacks, the IDF confirmed. US Ambassador to Israel Mike Huckabee urged Americans in the country to stay near shelters and be ready for alerts, advising immediate action at the sound of sirens.

Israeli Prime Minister Benjamin Netanyahu addressed the nation in a statement, calling on the Iranian people to rise against what he described as tyranny. He specifically mentioned Persians, Kurds, Azeris, Baluchis, and Ahwazis, urging them to pursue a free and peaceful Iran. Netanyahu also appealed to Israeli citizens to follow the guidance of the Home Front Command, emphasizing the need for vigilance and preparedness.

He framed the coming days under Operation “The Roar of the Lion” as a period requiring endurance and fortitude from the population, signaling the seriousness and potential duration of the ongoing military campaign.

🔗 Source: interestingengineering.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you can stay up to date without missing anything.
