Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
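To make the scaling gap concrete, here is a small back-of-the-envelope sketch. It only counts operations; the figure of 256 tokens per frame is an illustrative assumption, not a number from the paper.

```python
def attention_pair_count(num_frames: int, tokens_per_frame: int) -> int:
    """Query-key pairs scored by full self-attention over the whole context."""
    seq_len = num_frames * tokens_per_frame
    return seq_len * seq_len


def recurrent_step_count(num_frames: int, tokens_per_frame: int) -> int:
    """State updates performed by a linear-time recurrent scan."""
    return num_frames * tokens_per_frame


# 256 tokens per frame is a hypothetical value chosen for illustration.
for frames in (8, 16, 32):
    pairs = attention_pair_count(frames, tokens_per_frame=256)
    steps = recurrent_step_count(frames, tokens_per_frame=256)
    print(f"{frames} frames: {pairs} attention pairs, {steps} scan steps")
```

Doubling the number of frames quadruples the attention pair count but only doubles the scan step count, which is the gap the SSM-based design aims to exploit.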

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

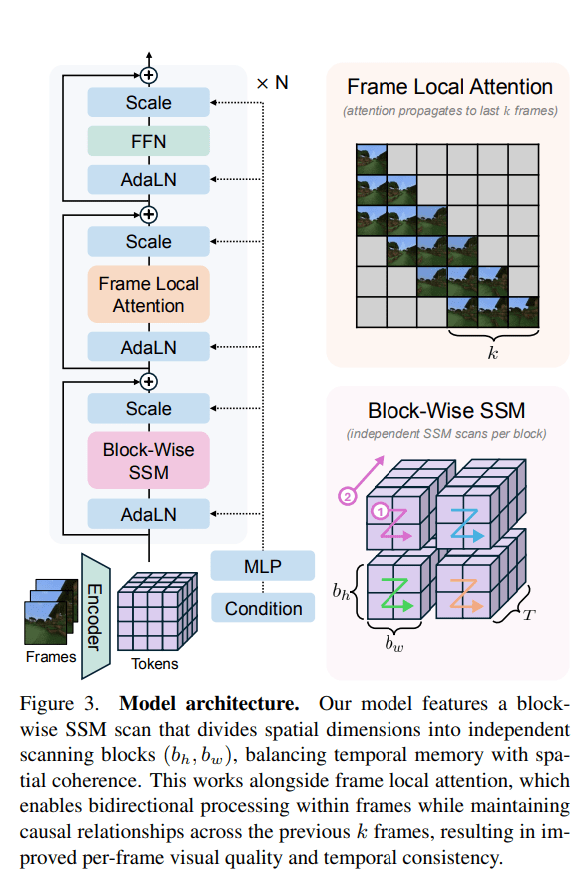

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
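The trade-off described in the two points above can be illustrated with a toy sketch. It assumes, as one reading of the block-wise scheme, that each frame's tokens are split into spatial blocks and every block is scanned separately across time. The scalar recurrence, the `decay` and `gain` values, and the function names are hypothetical stand-ins, not the paper's Mamba-based layers.

```python
from typing import List


def ssm_scan(inputs: List[float], decay: float = 0.9, gain: float = 0.1) -> List[float]:
    """Toy scalar state-space recurrence: h_t = decay * h_{t-1} + gain * x_t."""
    state = 0.0
    outputs = []
    for x in inputs:
        state = decay * state + gain * x
        outputs.append(state)
    return outputs


def blockwise_scan(video: List[List[float]], block_size: int) -> List[List[float]]:
    """Scan each spatial block of tokens separately across all frames.

    video[t][p] is the token value at frame t, spatial position p.
    """
    num_frames = len(video)
    tokens_per_frame = len(video[0])
    out = [[0.0] * tokens_per_frame for _ in range(num_frames)]
    for start in range(0, tokens_per_frame, block_size):
        positions = range(start, min(start + block_size, tokens_per_frame))
        # Flatten this block over time: frame 0's tokens, then frame 1's, ...
        sequence = [video[t][p] for t in range(num_frames) for p in positions]
        scanned = ssm_scan(sequence)
        i = 0
        for t in range(num_frames):
            for p in positions:
                out[t][p] = scanned[i]
                i += 1
    return out


# A single impulse at frame 0, position 0; everything else is zero.
video = [[1.0, 0.0, 0.0, 0.0], [0.0] * 4, [0.0] * 4]
small = blockwise_scan(video, block_size=2)  # two spatial blocks
whole = blockwise_scan(video, block_size=4)  # one scan over full frames
print(small[1][0], whole[1][0])  # signal surviving one frame later
print(small[1][2], whole[1][2])  # signal reaching another spatial block
```

With smaller blocks, the same position in the next frame is fewer scan steps away, so more of the impulse survives over time. The price is that tokens in a different block never see it, and that spatial gap is what the dense local attention is meant to fill.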

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model generates frames conditioned on a prefix of the input, which forces it to learn consistency over longer durations. When no prefix is sampled and all tokens stay noised, training becomes equivalent to diffusion forcing, which the paper highlights as the special case of long-context training with prefix length zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
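The chunked attention pattern from the last point can be written out as a frame-level boolean mask. This is a plain-Python sketch of the pattern as described above (chunks of 5, giving a window of 10 frames). It does not use the FlexAttention API, and the function name is hypothetical.

```python
from typing import List


def frame_local_mask(num_frames: int, chunk_size: int = 5) -> List[List[bool]]:
    """mask[q][k] is True when query frame q may attend to key frame k."""
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk_size
        row = []
        for k in range(num_frames):
            k_chunk = k // chunk_size
            # Same chunk: bidirectional. Previous chunk: look-back only.
            row.append(k_chunk == q_chunk or k_chunk == q_chunk - 1)
        mask.append(row)
    return mask


mask = frame_local_mask(num_frames=15)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

Each frame past the first chunk sees exactly 10 frames: its own chunk in both directions plus the whole previous chunk. In a real model the same rule would be applied per token, using each token's frame index.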

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper, Long-Context State-Space Video World Models, is available on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

MIT Researchers Unveil “SEAL”: A New Step Towards Self-Improving AI

The concept of AI self-improvement has been a hot topic in recent research circles, with a flurry of papers emerging and prominent figures like OpenAI CEO Sam Altman weighing in on the future of self-evolving intelligent systems. Now, a new paper from MIT, titled “Self-Adapting Language Models,” introduces SEAL (Self-Adapting LLMs), a novel framework that allows large language models (LLMs) to update their own weights. This development is seen as another significant step towards the realization of truly self-evolving AI.

The research paper, published yesterday, has already ignited considerable discussion, including on Hacker News. SEAL proposes a method where an LLM can generate its own training data through “self-editing” and subsequently update its weights based on new inputs. Crucially, this self-editing process is learned via reinforcement learning, with the reward mechanism tied to the updated model’s downstream performance.

The timing of this paper is particularly notable given the recent surge in interest surrounding AI self-evolution. Earlier this month, several other research efforts garnered attention, including Sakana AI and the University of British Columbia’s “Darwin-Gödel Machine (DGM),” CMU’s “Self-Rewarding Training (SRT),” Shanghai Jiao Tong University’s “MM-UPT” framework for continuous self-improvement in multimodal large models, and the “UI-Genie” self-improvement framework from The Chinese University of Hong Kong in collaboration with vivo.

Adding to the buzz, OpenAI CEO Sam Altman recently shared his vision of a future with self-improving AI and robots in his blog post, “The Gentle Singularity.” He posited that while the initial millions of humanoid robots would need traditional manufacturing, they would then be able to “operate the entire supply chain to build more robots, which can in turn build more chip fabrication facilities, data centers, and so on.” This was quickly followed by a tweet from @VraserX, claiming an OpenAI insider revealed the company was already running recursively self-improving AI internally, a claim that sparked widespread debate about its veracity.

Regardless of the specifics of internal OpenAI developments, the MIT paper on SEAL provides concrete evidence of AI’s progression towards self-evolution.

Understanding SEAL: Self-Adapting Language Models

The core idea behind SEAL is to enable language models to improve themselves when encountering new data by generating their own synthetic data and optimizing their parameters through self-editing. The model’s training objective is to directly generate these self-edits (SEs) using data provided within the model’s context.

The generation of these self-edits is learned through reinforcement learning. The model is rewarded when the generated self-edits, once applied, lead to improved performance on the target task. Therefore, SEAL can be conceptualized as an algorithm with two nested loops: an outer reinforcement learning (RL) loop that optimizes the generation of self-edits, and an inner update loop that uses the generated self-edits to update the model via gradient descent.

This method can be viewed as an instance of meta-learning, where the focus is on how to generate effective self-edits in a meta-learning fashion.

A General Framework

SEAL operates on a single task instance (C, τ), where C is context information relevant to the task, and τ defines the downstream evaluation for assessing the model’s adaptation. For example, in a knowledge integration task, C might be a passage to be integrated into the model’s internal knowledge, and τ a set of questions about that passage.

Given C, the model generates a self-edit SE, which then updates its parameters through supervised fine-tuning: θ′ ← SFT(θ, SE). Reinforcement learning is used to optimize this self-edit generation: the model performs an action (generates SE), receives a reward r based on the performance of the updated model LM_θ′ on τ, and updates its policy to maximize the expected reward.
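Written as a formula, the objective described above looks roughly as follows. The expectation over a distribution of task instances, written here as D, is an assumption added for completeness; the other symbols follow the text.

```latex
\max_{\theta}\;
\mathbb{E}_{(C,\tau)\sim\mathcal{D}}
\Big[\,
  \mathbb{E}_{SE \sim LM_{\theta}(\cdot \mid C)}
  \big[\, r(SE, \tau, \theta) \,\big]
\Big],
\qquad
\theta' \leftarrow \mathrm{SFT}(\theta, SE)
```

The reward depends on θ because it is measured on the updated model LM_θ′, which is produced by fine-tuning the current parameters on the sampled self-edit.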

The researchers found that on-policy methods like GRPO and PPO led to unstable training. They ultimately opted for ReST^EM, a simpler, filtering-based behavioral cloning approach from a DeepMind paper. This method can be viewed as an Expectation-Maximization (EM) process, where the E-step samples candidate outputs from the current model policy, and the M-step reinforces only those samples that yield a positive reward through supervised fine-tuning.
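The filtering step can be sketched in a few lines. This is a minimal illustration of the E-step as described above, not the authors' implementation. `sample_self_edit`, `reward`, and the toy policy are hypothetical stand-ins, and in SEAL the reward would come from fine-tuning on the self-edit and evaluating the updated model on τ.

```python
import random
from typing import Callable, List, Tuple


def rest_em_round(
    sample_self_edit: Callable[[str], str],
    reward: Callable[[str, str], float],
    contexts: List[str],
    samples_per_context: int = 4,
) -> List[Tuple[str, str]]:
    """E-step: sample candidate self-edits and keep those with positive reward.

    The returned (context, self_edit) pairs are what the M-step would use for
    supervised fine-tuning; the fine-tuning itself is out of scope here.
    """
    kept = []
    for context in contexts:
        for _ in range(samples_per_context):
            self_edit = sample_self_edit(context)
            if reward(context, self_edit) > 0:
                kept.append((context, self_edit))
    return kept


# Toy stand-ins: a random "policy" and a reward that favors detailed edits.
rng = random.Random(0)


def toy_policy(context: str) -> str:
    return rng.choice(["terse note", "detailed implications"]) + " for " + context


def toy_reward(context: str, self_edit: str) -> float:
    return 1.0 if self_edit.startswith("detailed") else 0.0


kept = rest_em_round(toy_policy, toy_reward, ["passage A", "passage B"])
print(len(kept), "of 8 candidates kept for the M-step")
```

Because only positively rewarded samples are reinforced, each round amounts to ordinary supervised fine-tuning on filtered data, which may explain why it proved more stable than the on-policy methods the researchers tried.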

The paper also notes that while the current implementation uses a single model to generate and learn from self-edits, these roles could be separated in a “teacher-student” setup.

Instantiating SEAL in Specific Domains

The MIT team instantiated SEAL in two specific domains: knowledge integration and few-shot learning.

- Knowledge Integration: The goal here is to effectively integrate information from articles into the model’s weights.

- Few-Shot Learning: This involves the model adapting to new tasks with very few examples.

Experimental Results

The experimental results for both few-shot learning and knowledge integration demonstrate the effectiveness of the SEAL framework.

In few-shot learning, using a Llama-3.2-1B-Instruct model, SEAL significantly improved adaptation success rates, achieving 72.5% compared to 20% for models using basic self-edits without RL training, and 0% without adaptation. While still below “Oracle TTT” (an idealized baseline), this indicates substantial progress.

For knowledge integration, using a larger Qwen2.5-7B model to integrate new facts from SQuAD articles, SEAL consistently outperformed baseline methods. Training with synthetically generated data from the base Qwen2.5-7B model already showed notable improvements, and subsequent reinforcement learning further boosted performance. Accuracy also improved rapidly over outer-loop RL iterations, often surpassing setups using GPT-4.1-generated data within just two iterations.

Qualitative examples from the paper illustrate how reinforcement learning leads to the generation of more detailed self-edits, resulting in improved performance.

While promising, the researchers also acknowledge some limitations of the SEAL framework, including aspects related to catastrophic forgetting, computational overhead, and context-dependent evaluation. These are discussed in detail in the original paper.

Original Paper: https://arxiv.org/pdf/2506.10943

Project Site: https://jyopari.github.io/posts/seal

Github Repo: https://github.com/Continual-Intelligence/SEAL

The post MIT Researchers Unveil “SEAL”: A New Step Towards Self-Improving AI first appeared on Synced.

🔗 Source: syncedreview.com
