📌 MAROKO133 Update AI: Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
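To make the scaling argument concrete, here is a minimal counting sketch. It only tallies operations and is not taken from the paper; the figure of 64 tokens per frame is a hypothetical value chosen for illustration.

```python
def attention_pair_count(num_tokens: int) -> int:
    """Query-key pairs scored by full causal self-attention.

    Token i attends to tokens 0..i, so the total is n(n+1)/2, which is O(n^2).
    """
    return num_tokens * (num_tokens + 1) // 2


def recurrent_update_count(num_tokens: int) -> int:
    """State updates for a recurrent (SSM-style) scan: one per token, O(n)."""
    return num_tokens


# Hypothetical setting (illustrative only, not from the paper).
TOKENS_PER_FRAME = 64

for frames in (16, 64, 256):
    n = frames * TOKENS_PER_FRAME
    print(frames, attention_pair_count(n), recurrent_update_count(n))
```

Doubling the number of context frames roughly quadruples the attention pair count, while the recurrent update count only doubles.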

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

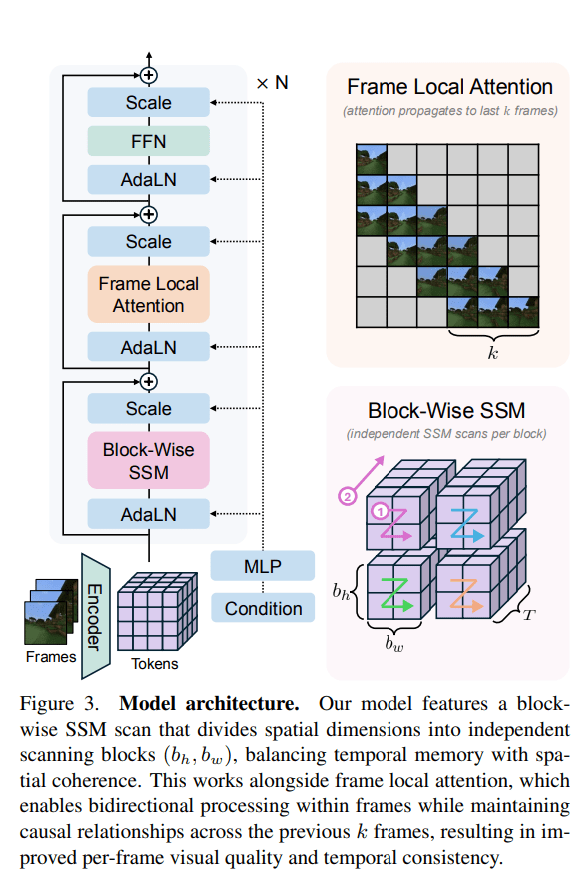

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
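The block-wise idea can be sketched with a toy linear recurrence. This is one possible reading of the scheme, not the authors' implementation. It assumes scalar tokens, a flattened 1-D spatial layout, and a fixed `decay` in place of a learned SSM, and it omits the dense local attention entirely. The function name and parameters are hypothetical.

```python
from typing import List


def blockwise_scan(frames: List[List[float]], block_size: int, decay: float) -> List[List[float]]:
    """Run an independent recurrence h = decay * h + x for each spatial block.

    frames[t][p] is a scalar token at time t and spatial position p.
    Each group of `block_size` adjacent positions keeps its own state, carried
    across time, so consecutive frames of one block are only `block_size`
    scan steps apart instead of a whole frame apart.
    """
    tokens_per_frame = len(frames[0])
    if tokens_per_frame % block_size != 0:
        raise ValueError("block_size must divide the number of tokens per frame")
    states = [0.0] * (tokens_per_frame // block_size)  # one compressed state per block
    outputs = []
    for frame in frames:
        out = []
        for p, x in enumerate(frame):
            b = p // block_size
            states[b] = decay * states[b] + x
            out.append(states[b])
        outputs.append(out)
    return outputs


# Toy input: a single impulse at frame 0, position 0, then 7 empty frames.
frames = [[0.0] * 16 for _ in range(8)]
frames[0][0] = 1.0

full = blockwise_scan(frames, block_size=16, decay=0.9)  # one scan over each whole frame
small = blockwise_scan(frames, block_size=4, decay=0.9)  # four independent blocks

# How much of the impulse is still visible at the same position 7 frames later.
print(full[7][0], small[7][0])
```

In this toy setup the impulse decays over 7 × 16 steps in the whole-frame scan but only 7 × 4 steps in the block-wise scan, so more of it survives. The price is that tokens in different blocks never share a state, which is the spatial-consistency gap the local attention is meant to cover.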

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During training, the model generates frames conditioned on a prefix of the input, which forces it to learn to maintain consistency over longer durations. When no prefix is sampled and all tokens are kept noised, the training becomes equivalent to diffusion forcing, which the authors highlight as the special case of long-context training where the prefix length is zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
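Both training strategies can be sketched in a few lines. This is a rough illustration under stated assumptions, not the paper's code. The mask rule (own chunk plus the preceding chunks that fit in the window) is one reading of the description above. The noise sampler assumes per-frame noise levels in [0, 1) with a clean prefix at level 0.0. All function and parameter names are hypothetical.

```python
import random
from typing import List


def frame_local_mask(num_frames: int, chunk_size: int = 5, window: int = 10) -> List[List[bool]]:
    """mask[i][j] is True when frame i may attend to frame j.

    Assumed reading: frames are grouped into chunks of `chunk_size`. A frame
    sees every frame in its own chunk (bidirectional) plus the preceding
    chunks covered by `window` frames, i.e. window // chunk_size chunks total.
    """
    chunks_in_window = window // chunk_size
    mask = []
    for i in range(num_frames):
        row = []
        for j in range(num_frames):
            gap = i // chunk_size - j // chunk_size
            row.append(0 <= gap < chunks_in_window)
        mask.append(row)
    return mask


def sample_noise_levels(num_frames: int, prefix_len: int, rng: random.Random) -> List[float]:
    """Per-frame noise levels: 0.0 for the clean prefix, independent draws after it.

    With prefix_len == 0 every frame gets its own random level, which is the
    diffusion-forcing special case described above.
    """
    return [0.0 if t < prefix_len else rng.random() for t in range(num_frames)]


mask = frame_local_mask(15)
levels = sample_noise_levels(8, prefix_len=3, rng=random.Random(0))
print(mask[5][:10], levels[:3])
```

PyTorch's FlexAttention takes a `mask_mod(b, h, q_idx, kv_idx)` callable, so a predicate of this shape could in principle be adapted to it after mapping token indices to frame indices. That mapping is left out here.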

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Hot AI Today: What to be thankful for in AI in 2025

Hello, dear readers. Happy belated Thanksgiving and Black Friday!

This year has felt like living inside a permanent DevDay. Every week, some lab drops a new model, a new agent framework, or a new “this changes everything” demo. It’s overwhelming. But it’s also the first year I’ve felt like AI is finally diversifying — not just one or two frontier models in the cloud, but a whole ecosystem: open and closed, giant and tiny, Western and Chinese, cloud and local.

So for this Thanksgiving edition, here’s what I’m genuinely thankful for in AI in 2025 — the releases that feel like they’ll matter in 12–24 months, not just during this week’s hype cycle.

1. OpenAI kept shipping strong: GPT-5, GPT-5.1, Atlas, Sora 2 and open weights

As the company that undeniably birthed the "generative AI" era with its viral hit product ChatGPT in late 2022, OpenAI arguably had one of the hardest tasks of any AI company in 2025: continuing its growth trajectory even as well-funded competitors like Google, with its Gemini models, and startups like Anthropic fielded their own highly competitive offerings.

Thankfully, OpenAI rose to the challenge and then some. Its headline act was GPT-5, unveiled in August as the next frontier reasoning model, followed in November by GPT-5.1 with new Instant and Thinking variants that dynamically adjust how much “thinking time” they spend per task.

In practice, GPT-5’s launch was bumpy: VentureBeat documented early math and coding failures and a cooler-than-expected community reaction in “OpenAI’s GPT-5 rollout is not going smoothly.” But OpenAI quickly course-corrected based on user feedback, and as a daily user of this model, I'm personally pleased and impressed with it.

At the same time, enterprises actually using the models are reporting solid gains. Zendesk Global, for example, says GPT-5-powered agents now resolve more than half of customer tickets, with some customers seeing 80–90% resolution rates. That’s the quiet story: these models may not always impress the chattering classes on X, but they’re starting to move real KPIs.

On the tooling side, OpenAI finally gave developers a serious AI engineer with GPT-5.1-Codex-Max, a new coding model that can run long, agentic workflows and is already the default in OpenAI’s Codex environment. VentureBeat covered it in detail in “OpenAI debuts GPT-5.1-Codex-Max coding model and it already completed a 24-hour task internally.”

Then there’s ChatGPT Atlas, a full browser with ChatGPT baked into the chrome itself — sidebar summaries, on-page analysis, and search tightly integrated into regular browsing. It’s the clearest sign yet that “assistant” and “browser” are on a collision course.

On the media side, Sora 2 turned the original Sora video demo into a full video-and-audio model with better physics, synchronized sound and dialogue, and more control over style and shot structure. It also arrived with a dedicated Sora app that has a full-fledged social networking component, allowing any user to create their own TV network in their pocket.

Finally — and maybe most symbolically — OpenAI released gpt-oss-120B and gpt-oss-20B, open-weight MoE reasoning models under an Apache 2.0–style license. Whatever you think of their quality (and early open-source users have been loud about their complaints), this is the first time since GPT-2 that OpenAI has put serious weights into the public commons.

2. China’s open-source wave goes mainstream

If 2023–24 was about Llama and Mistral, 2025 belongs to China’s open-weight ecosystem.

A study from MIT and Hugging Face found that China now slightly leads the U.S. in global open-model downloads, largely thanks to DeepSeek and Alibaba’s Qwen family.

Highlights:

- DeepSeek-R1 dropped in January as an open-source reasoning model rivaling OpenAI’s o1, with MIT-licensed weights and a family of distilled smaller models. VentureBeat has followed the story from its release to its cybersecurity impact to performance-tuned R1 variants.

- Kimi K2 Thinking from Moonshot is a “thinking” open-source model that reasons step-by-step with tools, very much in the o1/R1 mold, and is positioned as the best open reasoning model in the world so far.

- Z.ai shipped GLM-4.5 and GLM-4.5-Air as “agentic” models, open-sourcing base and hybrid reasoning variants on GitHub.

- Baidu’s ERNIE 4.5 family arrived as a fully open-sourced, multimodal MoE suite under Apache 2.0, including a 0.3B dense model and visual “Thinking” variants focused on charts, STEM, and tool use.

- Alibaba’s Qwen3 line (including Qwen3-Coder, large reasoning models, and the Qwen3-VL series released over the summer and fall months of 2025) continues to set a high bar for open weights in coding, translation, and multimodal reasoning, leading me to declare this past summer as "

VentureBeat has been tracking these shifts, including Chinese math and reasoning models like Light-R1-32B and Weibo’s tiny VibeThinker-1.5B, which beat DeepSeek baselines on shoestring training budgets.

If you care about open ecosystems or on-premise options, this is the year China’s open-weight scene stopped being a curiosity and became a serious alternative.

3. Small and local models grow up

Another thing I’m thankful for: we’re finally getting good small models, not just toys.

Liquid AI spent 2025 pushing its Liquid Foundation Models (LFM2) and …

Content shortened automatically.

🔗 Source: venturebeat.com

🤖 MAROKO133 Note

This article is an automated summary drawn from several trusted sources. We pick trending topics so you stay up to date without missing anything.
