📌 MAROKO133 Hot ai: Adobe Research Unlocking Long-Term Memory in Video World Model

Video world models, which predict future frames conditioned on actions, hold immense promise for artificial intelligence, enabling agents to plan and reason in dynamic environments. Recent advancements, particularly with video diffusion models, have shown impressive capabilities in generating realistic future sequences. However, a significant bottleneck remains: maintaining long-term memory. Current models struggle to remember events and states from far in the past due to the high computational cost associated with processing extended sequences using traditional attention layers. This limits their ability to perform complex tasks requiring sustained understanding of a scene.

A new paper, “Long-Context State-Space Video World Models” by researchers from Stanford University, Princeton University, and Adobe Research, proposes an innovative solution to this challenge. They introduce a novel architecture that leverages State-Space Models (SSMs) to extend temporal memory without sacrificing computational efficiency.

The core problem lies in the quadratic computational complexity of attention mechanisms with respect to sequence length. As the video context grows, the resources required for attention layers explode, making long-term memory impractical for real-world applications. This means that after a certain number of frames, the model effectively “forgets” earlier events, hindering its performance on tasks that demand long-range coherence or reasoning over extended periods.
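To make the scaling concrete, here is a minimal back-of-the-envelope sketch. It counts the query-key pairs scored by causal attention against the number of state updates in a recurrent SSM scan. The function names and the tokens-per-frame value are illustrative assumptions, not figures from the paper.

```python
def causal_attention_pairs(num_tokens: int) -> int:
    """Query-key pairs scored by causal self-attention (token i sees tokens 0..i)."""
    return num_tokens * (num_tokens + 1) // 2


def ssm_scan_steps(num_tokens: int) -> int:
    """State updates in a recurrent SSM scan: exactly one per token."""
    return num_tokens


TOKENS_PER_FRAME = 256  # hypothetical value, chosen only for illustration

for num_frames in (16, 32, 64, 128):
    n = num_frames * TOKENS_PER_FRAME
    print(
        f"{num_frames:4d} frames: "
        f"attention pairs = {causal_attention_pairs(n):>14,d}   "
        f"ssm steps = {ssm_scan_steps(n):>7,d}"
    )
```

Doubling the context roughly quadruples the attention count while only doubling the scan count, which is the gap the paper sets out to exploit.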

The authors’ key insight is to leverage the inherent strengths of State-Space Models (SSMs) for causal sequence modeling. Unlike previous attempts that retrofitted SSMs for non-causal vision tasks, this work fully exploits their advantages in processing sequences efficiently.

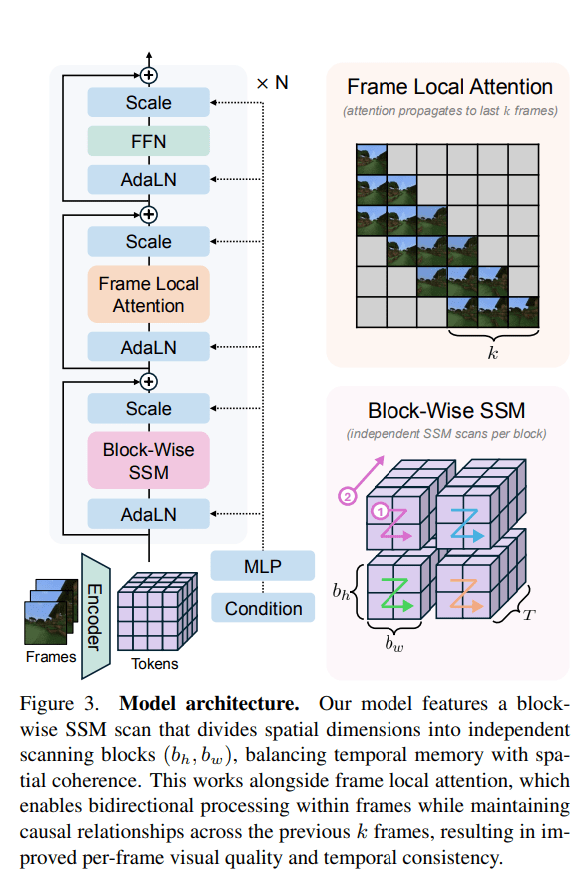

The proposed Long-Context State-Space Video World Model (LSSVWM) incorporates several crucial design choices:

- Block-wise SSM Scanning Scheme: This is central to their design. Instead of processing the entire video sequence with a single SSM scan, they employ a block-wise scheme. This strategically trades off some spatial consistency (within a block) for significantly extended temporal memory. By breaking down the long sequence into manageable blocks, they can maintain a compressed “state” that carries information across blocks, effectively extending the model’s memory horizon.

- Dense Local Attention: To compensate for the potential loss of spatial coherence introduced by the block-wise SSM scanning, the model incorporates dense local attention. This ensures that consecutive frames within and across blocks maintain strong relationships, preserving the fine-grained details and consistency necessary for realistic video generation. This dual approach of global (SSM) and local (attention) processing allows them to achieve both long-term memory and local fidelity.
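The trade-off in the first bullet can be illustrated with a toy model. The sketch below is not the authors' implementation: it assumes the blocks are spatial regions that are each scanned through time with their own state, and it uses a scalar recurrence `h = decay * h + gain * x` with made-up `decay` and `gain` values in place of a real SSM layer. The function name `blockwise_scan` is hypothetical.

```python
def blockwise_scan(frames, block_width, decay=0.9, gain=0.1):
    """Toy scalar SSM scanned separately per spatial block.

    frames: list of frames, each a list of floats (one row of tokens per frame).
    Every block of `block_width` adjacent positions keeps its own state,
    which is carried forward from one frame to the next.
    """
    width = len(frames[0])
    num_blocks = (width + block_width - 1) // block_width
    states = [0.0] * num_blocks
    outputs = []
    for frame in frames:
        out = []
        for pos, x in enumerate(frame):
            block = pos // block_width
            states[block] = decay * states[block] + gain * x
            out.append(states[block])
        outputs.append(out)
    return outputs


# A single impulse in the first frame; how much of it survives in the last frame?
WIDTH, NUM_FRAMES = 8, 5
frames = [[0.0] * WIDTH for _ in range(NUM_FRAMES)]
frames[0][0] = 1.0

full = blockwise_scan(frames, block_width=WIDTH)  # one scan over every token
blocked = blockwise_scan(frames, block_width=2)   # independent scan per block

print(f"single scan: {full[-1][0]:.5f}")
print(f"block-wise : {blocked[-1][0]:.5f}")
```

With smaller blocks, fewer tokens sit between the same position in consecutive frames, so the state decays less per frame and the impulse survives longer. The cost in this toy is that tokens in different blocks never exchange information, which is the kind of gap the dense local attention described above is meant to cover.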

The paper also introduces two key training strategies to further improve long-context performance:

- Diffusion Forcing: During long-context training, the model learns to generate frames conditioned on a clean prefix of the input, which forces it to stay consistent with that context over longer durations. When no prefix is sampled and all tokens are kept noised, training reduces to diffusion forcing, which the authors describe as the special case of long-context training with a prefix length of zero. This pushes the model to generate coherent sequences even from minimal initial context.

- Frame Local Attention: For faster training and sampling, the authors implemented a “frame local attention” mechanism. This utilizes FlexAttention to achieve significant speedups compared to a fully causal mask. By grouping frames into chunks (e.g., chunks of 5 with a frame window size of 10), frames within a chunk maintain bidirectionality while also attending to frames in the previous chunk. This allows for an effective receptive field while optimizing computational load.
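The chunked pattern in the second bullet can be written down as a frame-level mask. The following is a minimal sketch based only on the description above (chunks of 5, each frame seeing its own chunk and the previous one, for a window of 10 frames). The function name `frame_local_mask` is hypothetical, and a real implementation would work on tokens rather than whole frames.

```python
def frame_local_mask(num_frames: int, chunk_size: int = 5):
    """Return mask[q][k], True when query frame q may attend to key frame k.

    Frames in the same chunk see each other in both directions, and every
    frame also sees all frames of the immediately preceding chunk.
    """
    mask = []
    for q in range(num_frames):
        q_chunk = q // chunk_size
        row = []
        for k in range(num_frames):
            k_chunk = k // chunk_size
            row.append(k_chunk == q_chunk or k_chunk == q_chunk - 1)
        mask.append(row)
    return mask


mask = frame_local_mask(num_frames=15, chunk_size=5)
for row in mask:
    print("".join("#" if allowed else "." for allowed in row))
```

Printing the mask shows square blocks along the diagonal plus one block to the left of each, rather than the lower triangle of a fully causal mask. A block-structured pattern like this is what lets a block-sparse attention kernel skip large regions entirely.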

The researchers evaluated their LSSVWM on challenging datasets, including Memory Maze and Minecraft, which are specifically designed to test long-term memory capabilities through spatial retrieval and reasoning tasks.

The experiments demonstrate that their approach substantially surpasses baselines in preserving long-range memory. Qualitative results, as shown in supplementary figures (e.g., S1, S2, S3), illustrate that LSSVWM can generate more coherent and accurate sequences over extended periods compared to models relying solely on causal attention or even Mamba2 without frame local attention. For instance, on reasoning tasks for the maze dataset, their model maintains better consistency and accuracy over long horizons. Similarly, for retrieval tasks, LSSVWM shows improved ability to recall and utilize information from distant past frames. Crucially, these improvements are achieved while maintaining practical inference speeds, making the models suitable for interactive applications.

The paper “Long-Context State-Space Video World Models” is available on arXiv.

The post Adobe Research Unlocking Long-Term Memory in Video World Models with State-Space Models first appeared on Synced.

🔗 Source: syncedreview.com

📌 MAROKO133 Breaking ai: Stratolaunch to raise launch cadence, acquire new aircraf

Stratolaunch, whose Roc aircraft has the largest wingspan of any operational aircraft in the world, has completed a “significant capital raise” to accelerate and scale the development of its hypersonic test platform, the company announced in a statement.

The hypersonic technology firm said it has welcomed Elliott Investment Management L.P. as a new investor. The funding will “rapidly expand the company’s hypersonic production and flight capabilities,” the firm noted, adding that it is an important member of the US defense industry.

Importantly, it will also “pursue additional carrier aircraft”, allowing it to dramatically improve its current launch cadence.

Stratolaunch looks to boost test flight cadence

Stratolaunch was originally founded with the goal of launching satellites and other payloads to orbit via a spaceplane, in a similar vein to Virgin Orbit’s scrapped plans. The company pivoted to hypersonic testing in 2020 following the death of its founder, Paul Allen, and its acquisition by Cerberus Capital Management.

According to Stratolaunch’s statement, the company is responsible for designing and developing the “first and only commercial, autonomous, reusable hypersonic aircraft with multiple successful flights.” Stratolaunch has “comprehensive testing capabilities that are critical for advancing national security imperatives for the United States and allied nations,” the company added.

Last year, Stratolaunch successfully performed two reusable test flights of its Talon-A2 hypersonic test vehicle. At the time, the company said the tests took it a step closer to achieving its goal of launching operational hypersonic weapons tests.

Talon-A2 launches from the Roc at high altitudes. The hypersonic test vehicle is powered by a Hadley rocket engine developed by Ursa Major. The liquid oxygen-kerosene engine produces 5,000 lbs of thrust, enough to reach speeds exceeding Mach 5, or five times the speed of sound. That is roughly 6,200 km/h (3,850 mph).
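The Mach-to-speed conversion depends on air temperature, so a quoted km/h figure is only a round number. As a rough check, the sketch below uses the ideal-gas formula for the speed of sound, a = sqrt(γ·R·T), at two standard-atmosphere temperatures. The choice of temperatures and the helper names are assumptions made for illustration, not details from Stratolaunch.

```python
import math

GAMMA = 1.4             # ratio of specific heats for dry air
R_AIR = 287.05          # specific gas constant for dry air, J/(kg*K)
KM_PER_MILE = 1.609344


def speed_of_sound(temp_kelvin: float) -> float:
    """Speed of sound in m/s for an ideal gas at the given temperature."""
    return math.sqrt(GAMMA * R_AIR * temp_kelvin)


def mach_to_kmh(mach: float, temp_kelvin: float) -> float:
    """Convert a Mach number to km/h at the given air temperature."""
    return mach * speed_of_sound(temp_kelvin) * 3.6


def kmh_to_mph(kmh: float) -> float:
    return kmh / KM_PER_MILE


# Standard-atmosphere temperatures: sea level and the lower stratosphere.
for label, temp in (("sea level, 288.15 K", 288.15), ("stratosphere, 216.65 K", 216.65)):
    kmh = mach_to_kmh(5, temp)
    print(f"Mach 5 at {label}: {kmh:,.0f} km/h ({kmh_to_mph(kmh):,.0f} mph)")
```

At sea-level temperature this gives a value close to the figure quoted above. In the colder air at high altitude, the same Mach number corresponds to a lower ground-referenced speed.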

Now, the new capital will go towards increasing the production capacity of its hypersonic vehicles so as to increase the test flight cadence. The company also noted that it will pursue additional carrier aircraft. This will enable “more frequent and operationally relevant demonstrations for the Department of War and its partners.”

New carrier aircraft incoming

At the time of writing, public records show that Stratolaunch owns two carrier aircraft—the Roc and the Spirit of Mojave.

The Roc, designated Stratolaunch Model 351, has a wingspan measuring 385 feet (117 meters) across. The Spirit of Mojave, previously called ‘Cosmic Girl’, was acquired from Virgin Orbit after the firm filed for bankruptcy in 2023. It is a Boeing 747-400 that was modified to launch rockets to space from high altitudes. It is now being adapted to support Talon-A hypersonic missions.

Now, Stratolaunch aims to add to its existing fleet to improve its launch cadence, allowing for rapid hypersonic capability testing. “At a time when speed, scale, capability, and execution matter more than ever, this investment enables Stratolaunch to move faster and think bigger,” said Zachary Krevor, president and CEO of Stratolaunch.

“We’ve already demonstrated hypersonic capability, and now it’s time to deliver at scale,” Krevor added. “We’re excited to welcome Elliott as a new partner alongside Cerberus, who has steadfastly supported our hypersonic mission.”

🔗 Source: interestingengineering.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you can stay up to date.
