📌 MAROKO133 Breaking ai: James Webb observes a sunless world that produces surpris

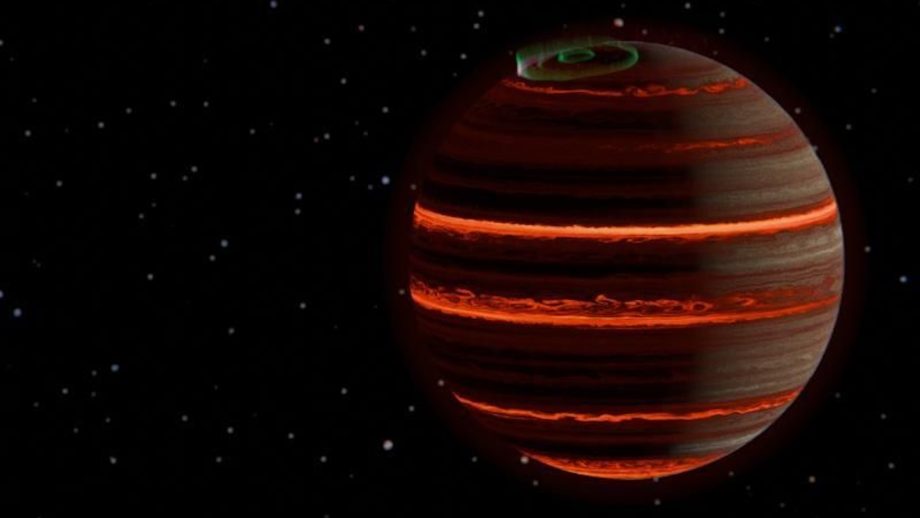

New James Webb Space Telescope observations have shed light on a distant world with no sun. Despite its perpetual darkness, the alien sphere still glows with auroras brighter than Earth’s northern lights.

The alien world, called SIMP-0136, is roughly 20 light-years away in the Pisces constellation. It is approximately 200 million years old and isn’t technically a planet. SIMP-0136 is a brown dwarf, a celestial body that blurs the line between gas giant planets and stars.

Analyzing a ‘failed star’

Astronomers don’t fully understand how brown dwarfs form. The objects, sometimes referred to as “failed stars”, might form as planets do, through the accretion of material in a protoplanetary disk. They could also form as stars do, through the contraction of a gas cloud.

We do know that brown dwarfs never grow massive enough to sustain a nuclear fusion reaction in their cores. This means they cannot be classified as stars. However, they can host planets and emit measurable light, like stars. On the other hand, they also have auroras and atmospheres with storms, like planets.

The brown dwarf SIMP-0136 is even more unusual because it is a rogue world. This means it is floating freely through space, untethered from any star system.

A team of scientists pored over data collected by the James Webb Space Telescope to understand the alien world better. They detailed their findings in a new paper published in the journal Astronomy & Astrophysics.

The team tracked changes in SIMP-0136’s atmosphere as it performed a full rotation. The result is a “weather report” that provides new insight into the rogue world.

“These are some of the most precise measurements of the atmosphere of any extra-solar object to date, and the first time that changes in the atmospheric properties have been directly measured,” study lead author Evert Nasedkin of Trinity College Dublin explained in a press statement.

How a sunless world develops auroras

The study reveals shifts in temperature, cloud cover, and chemistry as SIMP-0136 rotated.

“Understanding these weather processes will be crucial as we continue to discover and characterise exoworlds in the future,” explained study co-author Johanna Vos.

During the study, the team analyzed a layer of the atmosphere roughly 540 degrees Fahrenheit (300 degrees Celsius) warmer than their models had predicted. Auroras most likely cause this extra heat.

On Earth, auroras are caused by the solar wind interacting with our planet’s magnetic field. The scientists behind the study believe that SIMP-0136’s auroras rely on charged particles flying through interstellar space instead of a host star.

SIMP-0136 has a much stronger magnetic field than Earth’s. This intensifies the interaction with these charged particles, leading to intense auroras. The interaction is strong enough to heat the world’s upper atmosphere.

Because of their strange hybrid planet-star nature, brown dwarfs are the coldest known star-like objects in the cosmos. Even so, the researchers recorded SIMP-0136’s temperature at a sweltering 2,732 degrees Fahrenheit (1,500 degrees Celsius).

Rogue worlds like SIMP-0136 provide astronomers with a unique opportunity to study celestial bodies without the interference of stellar radiation from host stars. This allows for detailed studies of the world’s atmosphere and temperature. As with SIMP-0136, these studies can provide compelling insight into the weather and behavior of distant alien worlds.

🔗 Source: interestingengineering.com

📌 MAROKO133 Update ai: Which Agent Causes Task Failures and When? Researchers from

Meet the authors

Institutions: Penn State University, Duke University, Google DeepMind, University of Washington, Meta, Nanyang Technological University, and Oregon State University. The co-first authors are Shaokun Zhang of Penn State University and Ming Yin of Duke University.

In recent years, LLM Multi-Agent systems have garnered widespread attention for their collaborative approach to solving complex problems. However, these systems often fail at a task despite a flurry of activity. This leaves developers with a critical question: which agent, at what point, was responsible for the failure? Sifting through vast interaction logs to pinpoint the root cause is like finding a needle in a haystack: time-consuming and labor-intensive.

This is a familiar frustration for developers. In increasingly complex Multi-Agent systems, failures are not only common but also incredibly difficult to diagnose due to the autonomous nature of agent collaboration and long information chains. Without a way to quickly identify the source of a failure, system iteration and optimization grind to a halt.

To address this challenge, researchers from Penn State University and Duke University, in collaboration with institutions including Google DeepMind, have introduced the novel research problem of “Automated Failure Attribution.” They have constructed the first benchmark dataset for this task, Who&When, and have developed and evaluated several automated attribution methods. This work not only highlights the complexity of the task but also paves a new path toward enhancing the reliability of LLM Multi-Agent systems.

The paper has been accepted as a Spotlight presentation at the top-tier machine learning conference, ICML 2025, and the code and dataset are now fully open-source.

Paper: https://arxiv.org/pdf/2505.00212

Code: https://github.com/mingyin1/Agents_Failure_Attribution

Dataset: https://huggingface.co/datasets/Kevin355/Who_and_When

Research Background and Challenges

LLM-driven Multi-Agent systems have demonstrated immense potential across many domains. However, these systems are fragile; errors by a single agent, misunderstandings between agents, or mistakes in information transmission can lead to the failure of the entire task.

Currently, when a system fails, developers are often left with manual and inefficient methods for debugging:

– Manual Log Archaeology: Developers must manually review lengthy interaction logs to find the source of the problem.

– Reliance on Expertise: The debugging process is highly dependent on the developer’s deep understanding of the system and the task at hand.

This “needle in a haystack” approach to debugging is not only inefficient but also severely hinders rapid system iteration and the improvement of system reliability. There is an urgent need for an automated, systematic method to pinpoint the cause of failures, effectively bridging the gap between “evaluation results” and “system improvement.”

Core Contributions

This paper makes several groundbreaking contributions to address the challenges above:

1. Defining a New Problem: The paper is the first to formalize “automated failure attribution” as a specific research task. The task is to identify the failure-responsible agent and the decisive error step that led to the task’s failure.

2. Constructing the First Benchmark Dataset, Who&When: This dataset includes a wide range of failure logs collected from 127 LLM Multi-Agent systems, which were either algorithmically generated or hand-crafted by experts to ensure realism and diversity. Each failure log is accompanied by fine-grained human annotations for:

– Who: The agent responsible for the failure.

– When: The specific interaction step where the decisive error occurred.

– Why: A natural language explanation of the cause of the failure.

3. Exploring Initial “Automated Attribution” Methods: Using the Who&When dataset, the paper designs and assesses three distinct methods for automated failure attribution:

– All-at-Once: This method provides the LLM with the user query and the complete failure log, asking it to identify the responsible agent and the decisive error step in a single pass. While cost-effective, it may struggle to pinpoint precise errors in long contexts.

– Step-by-Step: This approach mimics manual debugging by having the LLM review the interaction log sequentially, making a judgment at each step until the error is found. It is more precise at locating the error step but incurs higher costs and risks accumulating errors.

– Binary Search: A compromise between the first two methods, this strategy repeatedly divides the log in half, using the LLM to determine which segment contains the error. It then recursively searches the identified segment, offering a balance of cost and performance.
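The difference in cost between the Step-by-Step and Binary Search strategies described above can be illustrated with a minimal Python sketch. This is not the authors' implementation: the log format (`Step`), the judge signatures (`StepJudge`, `RangeJudge`), and the toy judges are all assumptions made for illustration. In a real setup each judge would wrap an LLM call, and a single wrong judgment could send the search into the wrong half of the log.

```python
from typing import Callable, List, Optional, Tuple

Step = Tuple[str, str]  # (agent name, message); a hypothetical log format

# Hypothetical judge signatures; in practice each would wrap an LLM call.
StepJudge = Callable[[List[Step], int], bool]        # is step i the decisive error?
RangeJudge = Callable[[List[Step], int, int], bool]  # does log[lo:hi] contain it?


def step_by_step(log: List[Step], is_error: StepJudge) -> Optional[Tuple[str, int]]:
    """Review steps in order and stop at the first one judged decisive."""
    for i in range(len(log)):
        if is_error(log, i):
            return log[i][0], i
    return None


def binary_search(log: List[Step], contains_error: RangeJudge) -> Optional[Tuple[str, int]]:
    """Halve the candidate range until a single step remains."""
    if not log:
        return None
    lo, hi = 0, len(log)          # half-open candidate range [lo, hi)
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if contains_error(log, lo, mid):
            hi = mid              # error judged to be in the left half
        else:
            lo = mid              # otherwise continue in the right half
    return log[lo][0], lo


# Toy stand-in for the LLM: an oracle that already knows the error is at step 5.
ERROR_STEP = 5
calls = {"step": 0, "range": 0}


def toy_step_judge(log: List[Step], i: int) -> bool:
    calls["step"] += 1
    return i == ERROR_STEP


def toy_range_judge(log: List[Step], lo: int, hi: int) -> bool:
    calls["range"] += 1
    return lo <= ERROR_STEP < hi


demo_log = [(f"agent_{i % 3}", f"message {i}") for i in range(8)]
print(step_by_step(demo_log, toy_step_judge), calls["step"])     # ('agent_2', 5) 6
print(binary_search(demo_log, toy_range_judge), calls["range"])  # ('agent_2', 5) 3
```

With a perfect judge, both strategies land on the same agent and step, but the sequential review needs six judgments on this eight-step log while the halving search needs three. The sketch assumes exactly one decisive error per log, which matches how the task is defined above.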

Experimental Results and Key Findings

Experiments were conducted in two settings: one where the LLM knows the ground truth answer to the problem the Multi-Agent system is trying to solve (With Ground Truth) and one where it does not (Without Ground Truth). The primary model used was GPT-4o, though other models were also tested. The systematic evaluation of these methods on the Who&When dataset yielded several important insights:

– A Long Way to Go: Current methods are far from perfect. Even the best-performing single method achieved an accuracy of only about 53.5% in identifying the responsible agent and a mere 14.2% in pinpointing the exact error step. Some methods performed even worse than random guessing, underscoring the difficulty of the task.

– No “All-in-One” Solution: Different methods excel at different aspects of the problem. The All-at-Once method is better at identifying “Who,” while the Step-by-Step method is more effective at determining “When.” The Binary Search method provides a middle-ground performance.

– Hybrid Approaches Show Promise but at a High Cost: The researchers found that combining different methods, such as using the All-at-Once approach to identify a potential agent and then applying the Step-by-Step method to find the error, can improve overall performance. However, this comes with a significant increase in computational cost.

– State-of-the-Art Models Struggle: Surprisingly, even the most advanced reasoning models, like OpenAI o1 and DeepSeek R1, find this task challenging.- This h…

Content automatically shortened.

🔗 Source: syncedreview.com

🤖 MAROKO133 Note

This article is an automated summary compiled from several trusted sources. We pick trending topics so you stay up to date.
