📌 MAROKO133 Breaking ai: Laser pulses reveal secret ‘rugby ball’ shape of some of the universe’s heaviest atoms

Some of the heaviest atoms in the universe barely exist long enough to be studied, but scientists have now managed to map their inner structure before they vanish.

By firing carefully tuned laser pulses at atoms, a researcher from the University of Gothenburg has shown that the nuclei of neptunium and fermium (radioactive elements in the actinoid series of the periodic table) are not perfectly round but stretched out like rugby balls.

This might seem like a small detail, but nuclear shape influences how atoms behave, how they decay, and how new elements might form. For decades, these kinds of measurements were out of reach because such elements are produced in tiny amounts and decay within seconds.

“These elements are difficult to study because they are unstable and only exist in extremely small quantities at a time for a very short period of time,” said Mitzi Urquiza, the researcher who performed the laser pulse experiments as part of her thesis work at the University of Gothenburg.

In her thesis, Urquiza demonstrates a practical way to study such elements in detail. Her work opens a new window onto the unstable edge of the periodic table, where the heaviest elements exist only briefly before breaking apart.

Catching atoms before they disappear

The biggest hurdle in studying heavy actinides like neptunium is their fleeting existence. These atoms are created in accelerators in extremely small numbers and often survive only for a few seconds.

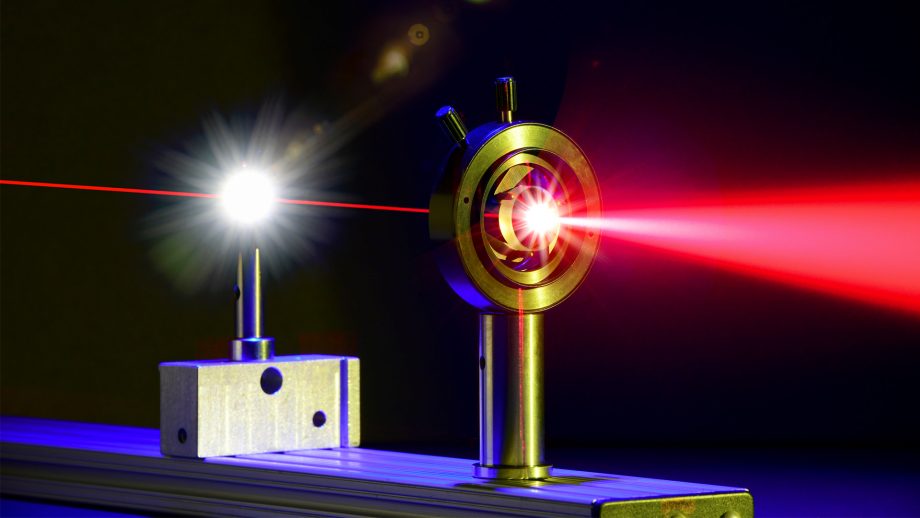

Traditional techniques require more stable samples and longer observation times, which simply do not exist for these elements. To overcome this, the researchers developed a specialized laser system built around an optical parametric oscillator (OPO).

This system can generate very precise wavelengths of light that conventional lasers struggle to produce, especially in the ultraviolet range, where many heavy elements respond best. More importantly, the setup combines a highly stable continuous-wave laser with pulsed amplification, allowing it to deliver both very precise and high-energy light.

“Our approach enables narrow-linewidth, high-energy pulses with optical linewidths on the order of 100 MHz, covering spectral gaps often inaccessible to conventional Titanium:Sapphire (Ti:Sa) and dye lasers,” the researchers note.
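To put “on the order of 100 MHz” in perspective, a linewidth given in frequency units can be converted to an energy (E = hν) and to a wavenumber (ν/c). The short Python sketch below does that arithmetic. The function names are our own. The 300 nm wavelength in the usage line is a hypothetical UV transition chosen for illustration, not a value from the thesis.

```python
# Convert a laser linewidth quoted in Hz into energy and wavenumber units,
# and express it as a fraction of the optical frequency of a transition.
PLANCK_EV_S = 4.135667696e-15        # Planck constant h in eV*s (exact in the 2019 SI)
SPEED_OF_LIGHT_CM_S = 2.99792458e10  # speed of light c in cm/s (exact)


def linewidth_in_other_units(linewidth_hz: float) -> tuple[float, float]:
    """Return (energy in eV, wavenumber in cm^-1) for a linewidth given in Hz."""
    energy_ev = PLANCK_EV_S * linewidth_hz
    wavenumber_cm = linewidth_hz / SPEED_OF_LIGHT_CM_S
    return energy_ev, wavenumber_cm


def fractional_linewidth(linewidth_hz: float, wavelength_nm: float) -> float:
    """Linewidth divided by the optical frequency c / lambda (1 nm = 1e-7 cm)."""
    optical_frequency_hz = SPEED_OF_LIGHT_CM_S / (wavelength_nm * 1e-7)
    return linewidth_hz / optical_frequency_hz


if __name__ == "__main__":
    energy, wavenumber = linewidth_in_other_units(100e6)
    print(f"{energy:.3e} eV, {wavenumber:.4f} cm^-1")
    # Hypothetical 300 nm UV transition, for scale only.
    print(f"fraction of optical frequency: {fractional_linewidth(100e6, 300.0):.2e}")
```

For 100 MHz this gives about 4.1 × 10⁻⁷ eV (roughly 0.41 µeV) and about 0.0033 cm⁻¹. That is around one part in 10⁷ of the optical frequency of a 300 nm transition.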

When these laser pulses are directed at the atoms, electrons inside them absorb specific amounts of energy and jump between energy levels.

“As the nucleus is not a point-like charge but possesses a finite volume and shape, these interactions can be observable through small shifts in the energy of an atomic transition known as the hyperfine structure,” the researchers added.

By measuring these tiny effects with high precision, scientists can extract information about the nucleus, including its size, magnetic and electric properties, and shape.
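Hyperfine structure is conventionally described by two constants:
- A, proportional to the nuclear magnetic dipole moment.
- B, proportional to the spectroscopic electric quadrupole moment. This is the term that carries information about a non-spherical charge distribution.

The sketch below implements the standard first-order (Casimir) formula for the shift of each hyperfine level F, given nuclear spin I and electronic angular momentum J. It is a sketch under stated assumptions. The helper names are our own, and the A and B values in the usage note are placeholders, not measured constants for neptunium or fermium. Fitting A and B to a real spectrum is not shown.

```python
from fractions import Fraction


def casimir_k(f: Fraction, i: Fraction, j: Fraction) -> Fraction:
    """K = F(F+1) - I(I+1) - J(J+1)."""
    return f * (f + 1) - i * (i + 1) - j * (j + 1)


def hyperfine_shift(f, i, j, a_mhz: float, b_mhz: float) -> float:
    """First-order hyperfine shift (MHz) of level F relative to the fine-structure level J.

    A is the magnetic dipole constant, B the electric quadrupole constant.
    The B term only exists for I >= 1 and J >= 1, so it is skipped otherwise.
    """
    f, i, j = Fraction(f), Fraction(i), Fraction(j)
    k = casimir_k(f, i, j)
    shift = a_mhz * float(k) / 2
    if i >= 1 and j >= 1:
        num = Fraction(3, 4) * k * (k + 1) - i * (i + 1) * j * (j + 1)
        den = 2 * i * (2 * i - 1) * j * (2 * j - 1)
        shift += b_mhz * float(num / den)
    return shift


def allowed_f(i, j) -> list:
    """F runs from |I - J| to I + J in integer steps."""
    i, j = Fraction(i), Fraction(j)
    f = abs(i - j)
    values = []
    while f <= i + j:
        values.append(f)
        f += 1
    return values


if __name__ == "__main__":
    # Placeholder constants, for illustration only.
    for f in allowed_f(Fraction(5, 2), Fraction(3, 2)):
        print(f, hyperfine_shift(f, Fraction(5, 2), Fraction(3, 2), 100.0, 200.0))
```

As a check, take I = 3/2 and J = 1/2, where there is no B term. The levels F = 1 and F = 2 sit at −1.25A and +0.75A, so their separation is 2A, which is the interval rule. Fitting B across several F levels is what lets experimenters extract the quadrupole moment, and with it the nuclear shape.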

A high-quality description of elusive elements

What makes this approach powerful is the combination of precision and high power. The OPO-based laser produces narrow, high-energy pulses that can probe atoms within their short lifetimes while still resolving very fine details in their energy structure.

The experiments were carried out across several advanced facilities in Europe, each equipped with unique tools needed to produce, isolate, and measure these rare atoms.

By combining data from different setups, the researchers were able to build the first high-quality description of the nuclei of fermium and neptunium, revealing their elongated, rugby ball-like shape.
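How does a measured quadrupole moment turn into a statement like “rugby ball”? A common textbook route takes three steps:
1. Convert the spectroscopic quadrupole moment Q_s into an intrinsic moment Q0.
2. Convert Q0 into a deformation parameter β2.
3. Read the sign of β2: β2 > 0 means prolate (elongated), β2 < 0 means oblate.

The sketch below follows that route, assuming the strong-coupling (rigid-rotor) model for an axially symmetric nucleus, the first-order relation Q0 = 3/√(5π) · Z R² β2, and the conventional radius R = 1.2 A^(1/3) fm. It is a generic estimate, not necessarily the analysis used in the thesis. The helper names and the Q0 value in the usage line are hypothetical.

```python
import math
from typing import Optional


def intrinsic_quadrupole(q_spec_barn: float, spin: float, k: Optional[float] = None) -> float:
    """Intrinsic Q0 (barn) from the spectroscopic Q_s, strong-coupling model.

    Q_s = Q0 * (3K^2 - I(I+1)) / ((I+1)(2I+3)); K defaults to I (ground-state band).
    """
    k = spin if k is None else k
    factor = (3 * k * k - spin * (spin + 1)) / ((spin + 1) * (2 * spin + 3))
    if factor == 0:
        raise ValueError("Q_s vanishes for this I, K (e.g. I = 0 or 1/2); Q0 cannot be inferred")
    return q_spec_barn / factor


def beta2_first_order(q0_barn: float, z: int, mass_number: int, r0_fm: float = 1.2) -> float:
    """First-order deformation beta2 from Q0 = 3/sqrt(5*pi) * Z * R^2 * beta2."""
    radius_fm = r0_fm * mass_number ** (1 / 3)
    q0_fm2 = q0_barn * 100.0  # 1 barn = 100 fm^2
    return q0_fm2 * math.sqrt(5 * math.pi) / (3 * z * radius_fm ** 2)


def shape_label(beta2: float) -> str:
    """Map the sign of beta2 to a qualitative shape."""
    if beta2 > 0:
        return "prolate (rugby ball)"
    if beta2 < 0:
        return "oblate (discus)"
    return "spherical"


if __name__ == "__main__":
    # Hypothetical Q0 = 10 barn for Z = 93, A = 237; not a measured value.
    b2 = beta2_first_order(10.0, z=93, mass_number=237)
    print(round(b2, 3), shape_label(b2))
```

With the hypothetical input Q0 = 10 b for Z = 93, A = 237, the first-order estimate comes out at roughly β2 ≈ 0.26, a prolate shape. A positive spectroscopic moment in a band with K = I maps to a positive Q0 and hence an elongated nucleus.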

“These results demonstrate that OPO-based laser systems offer a versatile and efficient solution for extending high-resolution spectroscopy to new regions of the nuclear chart,” the researchers said.

Why nuclear shape matters beyond the lab

Understanding the shape of atomic nuclei is essential for testing and improving models of nuclear physics. These models are used to predict how elements behave, especially those that have not yet been discovered.

The new measurements provide valuable data that can refine these theories and help scientists explore how far the periodic table can extend. “Precise measurements of these observables are essential for testing state-of-the-art theoretical models and exploring the limits of nuclear existence,” the researchers write.

There are also practical implications. Neptunium is part of the nuclear fuel cycle, so better knowledge of its properties could contribute to managing nuclear waste more effectively.

Moreover, in the longer term, insights from actinide research may also support the production of radioisotopes used in medical treatments such as cancer therapy.

The next step is to improve the laser technology further, expanding the range of wavelengths and increasing stability so that more exotic nuclei can be explored.

The complete thesis is available online.

🔗 Source: interestingengineering.com

📌 MAROKO133 Hot ai: MIT Researchers Unveil “SEAL”: A New Step Towards Self-Improving AI

The concept of AI self-improvement has been a hot topic in recent research circles, with a flurry of papers emerging and prominent figures like OpenAI CEO Sam Altman weighing in on the future of self-evolving intelligent systems. Now, a new paper from MIT, titled “Self-Adapting Language Models,” introduces SEAL (Self-Adapting LLMs), a novel framework that allows large language models (LLMs) to update their own weights. This development is seen as another significant step towards the realization of truly self-evolving AI.

The research paper, posted to arXiv in June 2025, quickly sparked considerable discussion, including on Hacker News. SEAL proposes a method in which an LLM generates its own training data through “self-editing” and then updates its weights based on new inputs. Crucially, this self-editing process is learned via reinforcement learning, with the reward tied to the updated model’s downstream performance.

The timing of this paper is particularly notable given the recent surge in interest surrounding AI self-evolution. In the preceding weeks, several other research efforts garnered attention, including Sakana AI and the University of British Columbia’s “Darwin-Gödel Machine (DGM),” CMU’s “Self-Rewarding Training (SRT),” Shanghai Jiao Tong University’s “MM-UPT” framework for continuous self-improvement in multimodal large models, and the “UI-Genie” self-improvement framework from The Chinese University of Hong Kong in collaboration with vivo.

Adding to the buzz, OpenAI CEO Sam Altman recently shared his vision of a future with self-improving AI and robots in his blog post, “The Gentle Singularity.” He posited that while the initial millions of humanoid robots would need traditional manufacturing, they would then be able to “operate the entire supply chain to build more robots, which can in turn build more chip fabrication facilities, data centers, and so on.” This was quickly followed by a tweet from @VraserX, claiming an OpenAI insider revealed the company was already running recursively self-improving AI internally, a claim that sparked widespread debate about its veracity.

Whatever the specifics of internal OpenAI developments, the MIT paper on SEAL offers a concrete, publicly documented example of a language model that learns to update its own weights.

Understanding SEAL: Self-Adapting Language Models

The core idea behind SEAL is to enable language models to improve themselves when encountering new data by generating their own synthetic data and optimizing their parameters through self-editing. The model’s training objective is to directly generate these self-edits (SEs) using data provided within the model’s context.

The generation of these self-edits is learned through reinforcement learning. The model is rewarded when the generated self-edits, once applied, lead to improved performance on the target task. Therefore, SEAL can be conceptualized as an algorithm with two nested loops: an outer reinforcement learning (RL) loop that optimizes the generation of self-edits, and an inner update loop that uses the generated self-edits to update the model via gradient descent.
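The two nested loops can be illustrated with a deliberately tiny Python toy. Everything in it is a hypothetical stand-in:
- The “model” is a single weight θ in y = θx.
- A self-edit is just a choice of learning rate and epoch count.
- The inner loop applies a self-edit by running gradient descent on the context pairs.
- The reward is 1 when the adapted weight fits the held-out pairs (τ) within a tolerance.
- The policy ignores the context.

The outer loop reinforces whichever self-edits earned a reward, in the filtering style the paper reports using. This is a sketch of the structure only, not SEAL’s implementation.

```python
import random

# Hypothetical candidate self-edits: (learning_rate, epochs).
CANDIDATE_EDITS = [(0.001, 1), (0.01, 5), (0.05, 20), (1.5, 20)]
SOLVED_TOL = 0.01  # hypothetical "task solved" threshold on held-out MSE


def mse(theta, pairs):
    return sum((theta * x - y) ** 2 for x, y in pairs) / len(pairs)


def inner_update(theta, context, lr, epochs):
    """Inner loop: plain gradient descent on the context pairs, starting from theta."""
    for _ in range(epochs):
        grad = sum(2 * (theta * x - y) * x for x, y in context) / len(context)
        theta -= lr * grad
    return theta


def reward(theta, edit, context, tau):
    """Binary reward: does the model updated with this self-edit solve tau?"""
    lr, epochs = edit
    theta_new = inner_update(theta, context, lr, epochs)
    return 1 if mse(theta_new, tau) < SOLVED_TOL else 0


def outer_loop(tasks, theta, rounds=3, samples=8, seed=0):
    """Outer loop: sample self-edits, keep rewarded ones, refit the policy to them."""
    rng = random.Random(seed)
    n = len(CANDIDATE_EDITS)
    probs = [1 / n] * n  # start from a uniform policy over self-edits
    for _ in range(rounds):
        kept = []
        for context, tau in tasks:
            for _ in range(samples):
                idx = rng.choices(range(n), weights=probs)[0]
                if reward(theta, CANDIDATE_EDITS[idx], context, tau):
                    kept.append(idx)
        if kept:  # maximum-likelihood refit of the categorical policy
            probs = [kept.count(i) / len(kept) for i in range(n)]
    return probs


if __name__ == "__main__":
    tasks = [
        ([(1, 2), (2, 4), (3, 6)], [(4, 8), (5, 10)]),
        ([(1, 2), (3, 6)], [(2, 4), (6, 12)]),
    ]
    print(outer_loop(tasks, theta=0.0))
```

With these two example tasks, only the (0.05, 20) self-edit converges within tolerance. The small learning rates under-train and a learning rate of 1.5 diverges, so the outer loop tends to concentrate the policy on that one choice.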

This method can be viewed as an instance of meta-learning. Rather than learning a task directly, the model learns how to generate self-edits that make its own subsequent weight updates effective.

A General Framework

SEAL operates on a single task instance (C,τ), where C is context information relevant to the task, and τ defines the downstream evaluation for assessing the model’s adaptation. For example, in a knowledge integration task, C might be a passage to be integrated into the model’s internal knowledge, and τ a set of questions about that passage.

Given C, the model generates a self-edit SE, which then updates its parameters through supervised fine-tuning: θ′ ← SFT(θ, SE). Reinforcement learning is used to optimize this self-edit generation: the model performs an action (generating SE), receives a reward r based on the updated model LM_θ′’s performance on τ, and updates its policy to maximize the expected reward.
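Written out, the setup above amounts to maximizing the expected reward of sampled self-edits, or equivalently minimizing its negative. The formulation below is a sketch consistent with the symbols used here. 𝒟, the distribution over task instances (C, τ), is our own label, and the paper’s exact notation may differ.

```latex
\theta' \leftarrow \mathrm{SFT}(\theta, \mathrm{SE}), \qquad
\mathcal{L}_{\mathrm{RL}}(\theta) =
  -\,\mathbb{E}_{(C,\tau)\sim\mathcal{D}}
  \Big[\, \mathbb{E}_{\mathrm{SE}\sim \mathrm{LM}_{\theta}(\cdot \mid C)}
  \big[\, r(\mathrm{SE}, \tau, \theta) \,\big] \Big]
```

Here r(SE, τ, θ) is computed by evaluating the updated model LM_θ′ on τ. The reward therefore depends on θ both through the sampled self-edit and through the fine-tuning step.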

The researchers found that on-policy methods such as GRPO and PPO led to unstable training. They ultimately opted for ReST^EM, a simpler, filtering-based behavioral cloning approach from a DeepMind paper. This method can be viewed as an Expectation-Maximization (EM) process. The E-step samples candidate outputs from the current model policy, and the M-step uses supervised fine-tuning to reinforce only those samples that yield a positive reward.
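The E-step/M-step structure of ReST^EM can be sketched on a toy policy: a softmax over a handful of candidate self-edits, identified here by index. `reward_fn` is a hypothetical stand-in for “fine-tune on the self-edit, then evaluate on τ”. The E-step samples from the current policy and keeps only positively rewarded samples. The M-step is supervised fine-tuning on the kept samples, here plain gradient ascent on their mean log-likelihood. All names and hyperparameters are hypothetical. Details such as which checkpoint each M-step starts from are simplified: this sketch continues from the current logits.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def e_step(logits, reward_fn, num_samples, rng):
    """E-step: sample candidates from the current policy, keep only rewarded ones."""
    probs = softmax(logits)
    samples = rng.choices(range(len(logits)), weights=probs, k=num_samples)
    return [s for s in samples if reward_fn(s) > 0]


def m_step(logits, kept, lr=0.5, steps=50):
    """M-step: gradient ascent on the mean log-likelihood of the kept samples.

    For a softmax policy, d log p_s / d logit_i = 1[i == s] - p_i.
    """
    logits = list(logits)
    if not kept:
        return logits
    for _ in range(steps):
        probs = softmax(logits)
        grad = [0.0] * len(logits)
        for s in kept:
            for i in range(len(logits)):
                grad[i] += ((1.0 if i == s else 0.0) - probs[i]) / len(kept)
        logits = [v + lr * g for v, g in zip(logits, grad)]
    return logits


def rest_em(reward_fn, num_actions, iterations=5, num_samples=32, seed=0):
    """Alternate E- and M-steps, starting from a uniform policy."""
    rng = random.Random(seed)
    logits = [0.0] * num_actions
    for _ in range(iterations):
        kept = e_step(logits, reward_fn, num_samples, rng)
        logits = m_step(logits, kept)
    return softmax(logits)


if __name__ == "__main__":
    # Hypothetical reward: only candidate self-edit 1 "helps".
    print(rest_em(lambda s: 1 if s == 1 else 0, num_actions=3))
```

Because only rewarded samples ever reach the M-step, the policy never needs a signed advantage or a value estimate. Each M-step is an ordinary supervised update, which is the simplicity the paragraph above contrasts with GRPO and PPO.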

The paper also notes that while the current implementation uses a single model to generate and learn from self-edits, these roles could be separated in a “teacher-student” setup.

Instantiating SEAL in Specific Domains

The MIT team instantiated SEAL in two specific domains: knowledge integration and few-shot learning.

- Knowledge Integration: The goal here is to effectively integrate information from articles into the model’s weights.

- Few-Shot Learning: This involves the model adapting to new tasks with very few examples.

Experimental Results

The experimental results for both few-shot learning and knowledge integration demonstrate the effectiveness of the SEAL framework.

In few-shot learning, using a Llama-3.2-1B-Instruct model, SEAL significantly improved adaptation success rates, achieving 72.5% compared to 20% for models using basic self-edits without RL training, and 0% without adaptation. While still below “Oracle TTT” (an idealized baseline), this indicates substantial progress.

For knowledge integration, using a larger Qwen2.5-7B model to integrate new facts from SQuAD articles, SEAL consistently outperformed baseline methods. Training on synthetic data generated by the base Qwen2.5-7B model already produced notable improvements, and subsequent reinforcement learning boosted performance further. Accuracy also rose quickly over the outer RL iterations, often surpassing setups that used GPT-4.1-generated data within just two iterations.

Qualitative examples from the paper illustrate how reinforcement learning leads to the generation of more detailed self-edits, resulting in improved performance.

While promising, the researchers also acknowledge some limitations of the SEAL framework, including aspects related to catastrophic forgetting, computational overhead, and context-dependent evaluation. These are discussed in detail in the original paper.

Original Paper: https://arxiv.org/pdf/2506.10943

Project Site: https://jyopari.github.io/posts/seal

Github Repo: https://github.com/Continual-Intelligence/SEAL

The post MIT Researchers Unveil “SEAL”: A New Step Towards Self-Improving AI first appeared on Synced.

🔗 Source: syncedreview.com

🤖 MAROKO133 Note

This article is an automatic summary drawn from several trusted sources. We pick trending topics so you always stay up to date without missing out.

✅ Next update in 30 minutes: a random topic awaits!