📌 MAROKO133 Update ai: Workshop-built magnetic heater delivers hot water without g

In a small workshop setting, one builder has taken a familiar problem and approached it from a completely different angle. Instead of relying on fuel or electricity, he focused on motion itself as the source of heat.

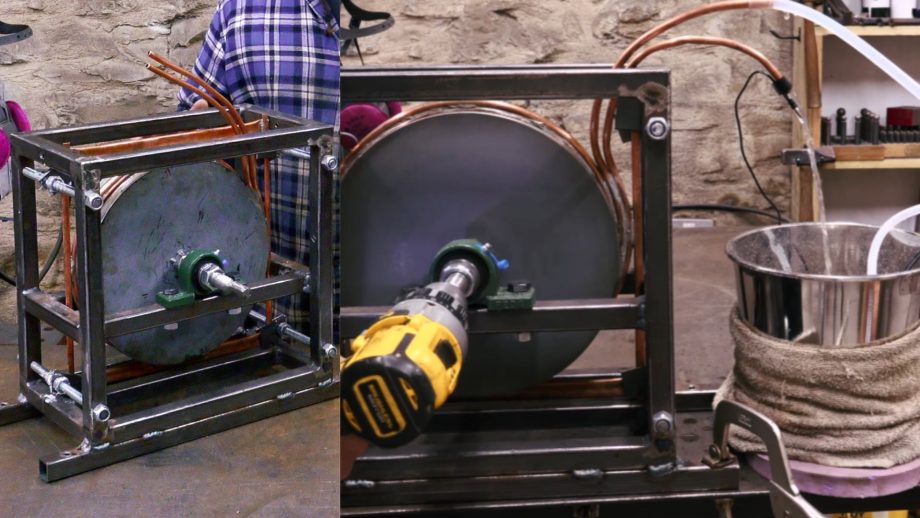

The result is a compact, mechanically driven water heater that produces heat using magnetic fields and copper tubing. Built by Greenhill Forge, the system draws from earlier generator work but shifts the goal from electricity to direct thermal output.

It is a simple idea in concept, but the execution leans heavily on careful design and precise construction.

Copper coil for heating

The core of the system sits between two spinning magnet rotors. Sandwiched between them is a flat disc made entirely from copper tubing. The tubing is wound into a tight spiral and soldered into one continuous conductor. Water flows through the inside of that tube during operation.

As the magnets rotate past the copper, they create eddy currents inside the metal. These currents generate heat within the tubing walls. Instead of wasting that heat, the system captures it immediately.

The moving water absorbs the heat directly as it passes through the coil. There is no conversion into electricity and no secondary heating element. The process stays direct and efficient.

Built around precision

The build process starts with a steel jig designed to hold everything in place. This ensures the copper coil forms evenly and maintains consistent spacing.

The tubing, about 8 mm thick, is wrapped carefully to create a uniform spiral. Clamps secure the structure, while soldering bonds the entire coil together.

After cleaning, the stator is mounted into a square frame. The magnet rotors sit on either side, held in alignment by bearings and lock nuts. Smooth rotation is essential. Even small deviations can reduce performance or create instability.

Water movement is driven by a compact submersible pump. It pushes around 600 liters per hour while drawing just 10 watts.

Output scales with RPM

Testing used a corded drill to drive the system. At about 400 RPM, the heater processed 1.5 liters of water. The temperature rose from 46.2°F to 75.9°F in three minutes. Water exiting the system reached 83.1°F. That translates to roughly 575 watts of heat output.
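The 575-watt figure can be checked from the quoted test numbers. The sketch below assumes a specific heat of about 4186 J/(kg·K) for water and 1 kg per liter, and it treats the 1.5 liters as warming uniformly over the three minutes; the function names are illustrative only.

```python
# Rough check of the heat output from the quoted test numbers.
# Assumptions (not from the article): water at ~4186 J/(kg*K) and 1 kg per liter.
SPECIFIC_HEAT_WATER = 4186.0  # J/(kg*K), approximate
KG_PER_LITER = 1.0            # approximate density of water


def fahrenheit_delta_to_kelvin(delta_f):
    """Convert a temperature difference in degrees F to kelvin."""
    return delta_f * 5.0 / 9.0


def average_heating_power(volume_l, t_start_f, t_end_f, seconds):
    """Average power in watts needed to warm the water over the given time."""
    mass_kg = volume_l * KG_PER_LITER
    delta_k = fahrenheit_delta_to_kelvin(t_end_f - t_start_f)
    energy_j = mass_kg * SPECIFIC_HEAT_WATER * delta_k
    return energy_j / seconds


# 1.5 liters, 46.2 F to 75.9 F, three minutes
power_w = average_heating_power(1.5, 46.2, 75.9, 180)
print(f"{power_w:.0f} W")
```

Under these assumptions the result lands within a watt or so of the stated figure, which suggests the article's number was derived from the bulk temperature rise rather than from the outlet temperature.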

Performance depends heavily on rotational speed. The relationship is not linear. Output increases with the square of RPM. Doubling the speed results in four times the heat. At 2,000 RPM, the system could reach close to 14.5 kW under ideal conditions.
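The square-law scaling described above can be written as a one-line extrapolation. This is a minimal sketch that takes the 575 W at 400 RPM test point as the baseline and assumes the quadratic relationship holds all the way up, which the article itself qualifies with "under ideal conditions"; `scaled_heat_output` is a hypothetical helper name.

```python
def scaled_heat_output(base_watts, base_rpm, target_rpm):
    """Extrapolate heat output assuming it grows with the square of RPM."""
    return base_watts * (target_rpm / base_rpm) ** 2


# Doubling the speed gives four times the heat.
print(scaled_heat_output(575, 400, 800))    # 2300.0

# Five times the speed gives twenty-five times the heat.
print(scaled_heat_output(575, 400, 2000))   # 14375.0
```

The 2,000 RPM extrapolation comes out at roughly 14.4 kW, in line with the article's "close to 14.5 kW".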

During the test, the copper temperature stayed close to the water temperature. This showed strong transfer efficiency. The drill motor overheated before the heater itself showed stress.

The system works best when paired with direct mechanical input. A wind turbine or small hydropower setup can drive the rotors without conversion losses. Heat begins as soon as the system spins and stops when rotation ends. This makes it well-suited for variable energy sources.

It also avoids many common issues tied to heating systems. There is no fuel storage, no exhaust, and no traditional heating element to fail. For off-grid users, the design offers a practical alternative. It turns available motion into usable heat with minimal complexity and fewer points of failure.

🔗 Source: interestingengineering.com

📌 MAROKO133 Breaking ai: MIT Researchers Unveil “SEAL”: A New Step Towards Self-Im

The concept of AI self-improvement has been a hot topic in recent research circles, with a flurry of papers emerging and prominent figures like OpenAI CEO Sam Altman weighing in on the future of self-evolving intelligent systems. Now, a new paper from MIT, titled “Self-Adapting Language Models,” introduces SEAL (Self-Adapting LLMs), a novel framework that allows large language models (LLMs) to update their own weights. This development is seen as another significant step towards the realization of truly self-evolving AI.

The research paper, published yesterday, has already ignited considerable discussion, including on Hacker News. SEAL proposes a method where an LLM can generate its own training data through “self-editing” and subsequently update its weights based on new inputs. Crucially, this self-editing process is learned via reinforcement learning, with the reward mechanism tied to the updated model’s downstream performance.

The timing of this paper is particularly notable given the recent surge in interest surrounding AI self-evolution. Earlier this month, several other research efforts garnered attention, including Sakana AI and the University of British Columbia’s “Darwin-Gödel Machine (DGM),” CMU’s “Self-Rewarding Training (SRT),” Shanghai Jiao Tong University’s “MM-UPT” framework for continuous self-improvement in multimodal large models, and the “UI-Genie” self-improvement framework from The Chinese University of Hong Kong in collaboration with vivo.

Adding to the buzz, OpenAI CEO Sam Altman recently shared his vision of a future with self-improving AI and robots in his blog post, “The Gentle Singularity.” He posited that while the initial millions of humanoid robots would need traditional manufacturing, they would then be able to “operate the entire supply chain to build more robots, which can in turn build more chip fabrication facilities, data centers, and so on.” This was quickly followed by a tweet from @VraserX, claiming an OpenAI insider revealed the company was already running recursively self-improving AI internally, a claim that sparked widespread debate about its veracity.

Regardless of the specifics of internal OpenAI developments, the MIT paper on SEAL provides concrete evidence of AI’s progression towards self-evolution.

Understanding SEAL: Self-Adapting Language Models

The core idea behind SEAL is to enable language models to improve themselves when encountering new data by generating their own synthetic data and optimizing their parameters through self-editing. The model’s training objective is to directly generate these self-edits (SEs) using data provided within the model’s context.

The generation of these self-edits is learned through reinforcement learning. The model is rewarded when the generated self-edits, once applied, lead to improved performance on the target task. Therefore, SEAL can be conceptualized as an algorithm with two nested loops: an outer reinforcement learning (RL) loop that optimizes the generation of self-edits, and an inner update loop that uses the generated self-edits to update the model via gradient descent.
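The two-loop structure can be illustrated with a deliberately tiny toy. Everything in this sketch is a stand-in: the "model" is a single number `theta`, a "self-edit" is a number sampled around `policy_mean`, the inner update is one gradient step, and the outer update is a plain REINFORCE-style step chosen for brevity. None of these names or choices come from the paper, and the outer update shown is not necessarily the rule the authors use.

```python
import random

TARGET = 3.0  # hypothetical "ideal" parameter value for the toy task


def evaluate(theta):
    """Downstream score: higher is better (closer to TARGET)."""
    return -abs(theta - TARGET)


def inner_update(theta, self_edit, lr=0.5):
    """Inner loop: one gradient-descent step on 0.5 * (theta - self_edit)**2."""
    grad = theta - self_edit
    return theta - lr * grad


def generate_self_edit(policy_mean, rng):
    """Policy stand-in: sample a self-edit from a unit-variance Gaussian."""
    return rng.gauss(policy_mean, 1.0)


def outer_loop(policy_mean, theta, rng, iterations=5, samples=20, step=0.5):
    """Outer RL loop: reward self-edits whose inner update improves the score."""
    for _ in range(iterations):
        grad_estimate = 0.0
        for _ in range(samples):
            edit = generate_self_edit(policy_mean, rng)
            updated = inner_update(theta, edit)  # apply the self-edit
            reward = 1.0 if evaluate(updated) > evaluate(theta) else 0.0
            # Score-function gradient for a unit-variance Gaussian mean.
            grad_estimate += reward * (edit - policy_mean)
        policy_mean += step * grad_estimate / samples
    return policy_mean


final_mean = outer_loop(policy_mean=0.0, theta=0.0, rng=random.Random(0))
print(final_mean)
```

In this toy, the policy drifts toward self-edits that move `theta` closer to the target, which mirrors the idea that the reward comes from the updated model's performance rather than from the self-edit itself.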

This method can be viewed as an instance of meta-learning, where the focus is on how to generate effective self-edits in a meta-learning fashion.

A General Framework

SEAL operates on a single task instance (C,τ), where C is context information relevant to the task, and τ defines the downstream evaluation for assessing the model’s adaptation. For example, in a knowledge integration task, C might be a passage to be integrated into the model’s internal knowledge, and τ a set of questions about that passage.

Given C, the model generates a self-edit SE, which then updates its parameters through supervised fine-tuning: θ′ ← SFT(θ, SE). Reinforcement learning is used to optimize this self-edit generation: the model performs an action (generates SE), receives a reward r based on the performance of the updated model LM_θ′ on τ, and updates its policy to maximize the expected reward.
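One way to write down the objective described in this paragraph is shown below. It is a sketch built from the symbols in the text, not a quotation of the paper's equation; the task distribution 𝒟 and the notation r(SE, τ, θ) for the reward are introduced here for illustration.

```latex
\max_{\theta}\;
\mathbb{E}_{(C,\tau)\sim\mathcal{D}}
\Big[
  \mathbb{E}_{SE\sim \mathrm{LM}_{\theta}(\cdot\mid C)}
  \big[\, r(SE,\tau,\theta) \,\big]
\Big],
\qquad
\theta' \leftarrow \mathrm{SFT}(\theta, SE)
```

Here r(SE, τ, θ) stands for the score that the updated model LM_θ′ achieves on τ after the self-edit SE has been applied to θ.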

The researchers found that traditional on-policy methods like GRPO and PPO led to unstable training. They ultimately opted for ReST^EM, a simpler, filtering-based behavioral cloning approach from a DeepMind paper. This method can be viewed as an Expectation-Maximization (EM) process, where the E-step samples candidate outputs from the current model policy, and the M-step reinforces only those samples that yield a positive reward through supervised fine-tuning.
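The E-step and M-step described above can be sketched on a toy categorical policy. The three "self-edit styles" and their success rates below are invented for illustration and do not come from the paper. For a categorical policy, behavioral cloning on the kept samples reduces to normalized counts, which stands in here for the supervised fine-tuning step.

```python
import random
from collections import Counter

# Hypothetical success rates per self-edit style (made up for this sketch).
SUCCESS_RATE = {
    "copy_passage": 0.1,
    "list_implications": 0.7,
    "rewrite_as_qa": 0.4,
}


def sample_style(policy, rng):
    """Draw one style from a categorical policy {style: probability}."""
    return rng.choices(list(policy), weights=list(policy.values()), k=1)[0]


def toy_reward(style, rng):
    """Binary stand-in for 'did the updated model improve on the task?'."""
    return 1 if rng.random() < SUCCESS_RATE[style] else 0


def rest_em_round(policy, rng, n_samples=200, smoothing=1.0):
    """One filtering round: sample, keep positive-reward samples, refit."""
    # E-step: sample candidates from the current policy and score them.
    samples = [sample_style(policy, rng) for _ in range(n_samples)]
    kept = [s for s in samples if toy_reward(s, rng) > 0]
    # M-step: maximum-likelihood refit on the kept samples only
    # (add-one smoothing keeps every style at a nonzero probability).
    counts = Counter(kept)
    total = len(kept) + smoothing * len(policy)
    return {s: (counts[s] + smoothing) / total for s in policy}


rng = random.Random(0)
policy = {style: 1.0 / len(SUCCESS_RATE) for style in SUCCESS_RATE}
for _ in range(5):
    policy = rest_em_round(policy, rng)
print(policy)
```

Because samples with zero reward are simply discarded, the update needs no value function or advantage estimate, which is one plausible reason a filtering approach might behave more stably than PPO-style updates in this setting.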

The paper also notes that while the current implementation uses a single model to generate and learn from self-edits, these roles could be separated in a “teacher-student” setup.

Instantiating SEAL in Specific Domains

The MIT team instantiated SEAL in two specific domains: knowledge integration and few-shot learning.

- Knowledge Integration: The goal here is to effectively integrate information from articles into the model’s weights.

- Few-Shot Learning: This involves the model adapting to new tasks with very few examples.

Experimental Results

The experimental results for both few-shot learning and knowledge integration demonstrate the effectiveness of the SEAL framework.

In few-shot learning, using a Llama-3.2-1B-Instruct model, SEAL significantly improved adaptation success rates, achieving 72.5% compared to 20% for models using basic self-edits without RL training, and 0% without adaptation. While still below “Oracle TTT” (an idealized baseline), this indicates substantial progress.

For knowledge integration, using a larger Qwen2.5-7B model to integrate new facts from SQuAD articles, SEAL consistently outperformed baseline methods. Training with synthetically generated data from the base Qwen2.5-7B model already showed notable improvements, and subsequent reinforcement learning further boosted performance. The accuracy also improved rapidly over outer RL iterations, often surpassing setups using GPT-4.1 generated data within just two iterations.

Qualitative examples from the paper illustrate how reinforcement learning leads to the generation of more detailed self-edits, resulting in improved performance.

While promising, the researchers also acknowledge some limitations of the SEAL framework, including aspects related to catastrophic forgetting, computational overhead, and context-dependent evaluation. These are discussed in detail in the original paper.

Original Paper: https://arxiv.org/pdf/2506.10943

Project Site: https://jyopari.github.io/posts/seal

Github Repo: https://github.com/Continual-Intelligence/SEAL

The post MIT Researchers Unveil “SEAL”: A New Step Towards Self-Improving AI first appeared on Synced.

🔗 Source: syncedreview.com

🤖 MAROKO133 Note

This article is an automated summary drawn from several trusted sources. We pick trending topics so you stay up to date without missing anything.
